Notes on problems encountered when decoding audio and video with FFmpeg (Part 2)

A recent project required decoding audio and video with FFmpeg, and I ran into several problems along the way. This post records them.

FFmpeg version used: FFmpeg 3.4.1. Download address:

1. Audio decoding:

- av_register_all(); registers all components, including the codecs, demuxers, and so on.

- AVFormatContext *pFormatCtx = avformat_alloc_context(); allocates memory for pFormatCtx. The AVFormatContext mainly stores the container (format) information of the media file. It is a very important variable that is used throughout almost the entire decoding process.

- avformat_open_input(&pFormatCtx, pInput_test, NULL, NULL); opens the input file and reads the file header into the pFormatCtx structure, in preparation for subsequent decoding.

- avformat_find_stream_info(pFormatCtx, NULL); reads the stream information of the file. Based on what pFormatCtx already contains, it fills in the stream fields of the structure, so it can be seen as populating pFormatCtx further.

- AVCodecParameters *pAudioCodecCtx = pFormatCtx->streams[a_stream_idx]->codecpar; extracts the codec parameters of the audio stream from pFormatCtx.

- AVCodec *pAudioCodec = avcodec_find_decoder(pAudioCodecCtx->codec_id); finds the audio decoder that matches the codec id in the codec parameters.

- AVCodecContext *pAudioEnc = avcodec_alloc_context3(pAudioCodec); allocates and initializes an AVCodecContext for that decoder. This only allocates the context; it does not open the decoder.

- avcodec_parameters_to_context(pAudioEnc, pFormatCtx->streams[a_stream_idx]->codecpar); fills the AVCodecContext with the stream's codec parameters. This step is important for audio decoding: without it, the audio does not decode correctly.

- avcodec_open2(pAudioEnc, pAudioCodec, NULL); opens the decoder.

- av_read_frame(pFormatCtx, packet); reads one packet of compressed data from the file described by pFormatCtx into packet.

- avcodec_send_packet(pAudioEnc, packet); sends the compressed packet to the decoder.

- avcodec_receive_frame(pAudioEnc, pFrame); retrieves the decoded (uncompressed) audio or video data from the decoder pAudioEnc into pFrame.

- The member uint8_t *data[AV_NUM_DATA_POINTERS] of the pFrame structure holds the decoded audio or video data, which can then be processed as needed (image processing, saving to a file, and so on).

The following is example code (extracted from the project; the overall flow is sound, but it has not been verified as a standalone program and may contain minor problems. It is recorded here first and will be verified later):

#include <windows.h>

#include <stdint.h>

#include <stdio.h>

#include <stdlib.h>

#include <string.h>

#include <iostream>

extern "C"

{

#include "libavcodec/avcodec.h"

#include "libavformat/avformat.h"

#include "libavutil/channel_layout.h"

#include "libavutil/common.h"

#include "libavutil/imgutils.h"

#include "libswscale/swscale.h"

#include "libavutil/opt.h"

#include "libavutil/mathematics.h"

#include "libavutil/samplefmt.h"

};

#define ES_STREAM_VIDEO 1

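#define ES_STREAM_AUDIO 2

// Assumption: the original project defines the next two constants elsewhere; they are defined here so the listing compiles.

#define TL_NUM_DATA_POINTERS AV_NUM_DATA_POINTERS

#define AVCODEC_MAX_AUDIO_FRAME_SIZE 192000 // 1 second of 48 kHz 32-bit audio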

#pragma comment(lib, "FFmpeg/lib/avcodec.lib")

#pragma comment(lib, "FFmpeg/lib/avformat.lib")

#pragma comment(lib, "FFmpeg/lib/avdevice.lib")

#pragma comment(lib, "FFmpeg/lib/avfilter.lib")

#pragma comment(lib, "FFmpeg/lib/avutil.lib")

#pragma comment(lib, "FFmpeg/lib/swresample.lib")

#pragma comment(lib, "FFmpeg/lib/swscale.lib")

typedef struct AVMediaPacket

{

BYTE* m_data[TL_NUM_DATA_POINTERS];

int m_linesize[TL_NUM_DATA_POINTERS];

BYTE* m_pBuf;

int m_buf_size;

int m_max_size;

int m_cur_size; // used by ES packets

int m_packet_type; // 0: ES packet, 1: frame

//enum AVPixelFormat for video frames

//enum AVSampleFormat for audio

int m_pixel_format;

int m_channel_count;

LONGLONG m_channel_layout;

int m_nb_samples; // number of audio samples of a single channel

int m_sample_rate; // audio sampling rate

int m_width;

int m_height;

/**

* The content of the picture is interlaced.

* - encoding: Set by user.

* - decoding: Set by libavcodec. (default 0)

*/

int m_interlaced_frame; // 0: progressive frame, 1: interlaced frame

/**

* If the content is interlaced, is top field displayed first.

* - encoding: Set by user.

* - decoding: Set by libavcodec.

*/

int m_top_field_first; // 0: bottom (even) field first, 1: top (odd) field first

int m_pict_type;

int m_es_stream_type; // video frames or audio frames

LONGLONG m_pos;

LONGLONG m_origin_size; // size in bytes of the original ES packet

LONGLONG m_dts; // used by ES packets

LONGLONG m_pts;

LONGLONG m_origin_ts;

LONGLONG m_sys_pts; // pts converted to system time (ms)

LONGLONG m_duration; // frame duration

LONGLONG m_sys_duration; // duration converted to system time (ms)

int m_align; // data alignment

int m_scale_mod; // conversion method used for a video frame (how the frame was obtained through conversion)

int m_stream_index; // same as stream_index in AVPacket

int m_flags; // same as flags in AVPacket

}AVMediaPacket;

/********** query the codecs supported by FFmpeg ************/

void CheckEncoderDecoder()

{

char *info = (char *)malloc(40000);

memset(info, 0, 40000);

AVCodec *c_temp = av_codec_next(NULL);

while (c_temp != NULL)

{

if (av_codec_is_decoder(c_temp))

{

strcat(info, "[Decode]");

}

else

{

strcat(info, "[Encode]");

}

switch (c_temp->type)

{

case AVMEDIA_TYPE_VIDEO:

strcat(info, "[Video]");

break;

case AVMEDIA_TYPE_AUDIO:

strcat(info, "[Audio]");

break;

default:

strcat(info, "[Other]");

break;

}

sprintf(info + strlen(info), " %10s\n", c_temp->name); // append in place; passing info as both source and destination is undefined behavior

c_temp = c_temp->next;

}

puts(info);

free(info);

}

void InitMediaPacket(AVMediaPacket* pFrame,int nPacketType, int nEsStreamType)

{

for (int i = 0; i < TL_NUM_DATA_POINTERS; i++)

{

pFrame-> m_data [i] = NULL; // audio and video data

pFrame-> m_linesize [i] = 0; // size of each row of data

}

pFrame-> m_pBuf = NULL; // pointer to audio and video data

pFrame->m_buf_size = 0;

pFrame->m_max_size = 0;

pFrame->m_cur_size = 0; // used by ES packets

pFrame->m_packet_type = nPacketType; // 0: ES packet, 1: frame

//enum AVPixelFormat for video frames

//enum AVSampleFormat for audio

pFrame->m_pixel_format = AV_PIX_FMT_NONE;

pFrame->m_channel_count = -1;

pFrame->m_channel_layout = -1;

pFrame->m_nb_samples = -1; // number of audio samples of a single channel

pFrame-> m_sample_rate = -1; // audio sample rate

pFrame->m_width = -1;

pFrame->m_height = -1;

/**

* The content of the picture is interlaced.

* - encoding: Set by user.

* - decoding: Set by libavcodec. (default 0)

*/

pFrame->m_interlaced_frame = -1; // 0: progressive frame, 1: interlaced frame

/**

* If the content is interlaced, is top field displayed first.

* - encoding: Set by user.

* - decoding: Set by libavcodec.

*/

pFrame->m_top_field_first = -1; // 0: bottom (even) field first, 1: top (odd) field first

pFrame->m_pict_type = -1;

pFrame-> m_es_stream_type = nEsStreamType; // is a video frame or an audio frame

pFrame->m_pos = -1;

pFrame->m_origin_size = -1; // size in bytes of the original ES packet

pFrame->m_dts = -1; // used by ES packets

pFrame->m_pts = -1;

pFrame->m_origin_ts = -1;

pFrame->m_sys_pts = -1; // pts converted to system time (ms)

pFrame->m_duration = -1; // frame duration

pFrame->m_sys_duration = -1; // duration converted to system time (ms)

pFrame->m_align = -1; // data alignment

pFrame->m_scale_mod = -1; // conversion method used for a video frame (how the frame was obtained through conversion)

pFrame->m_stream_index = -1; // same as stream_index in AVPacket

pFrame->m_flags = -1; // same as flags in AVPacket

}

BOOL MallocMediaFrameBuf(AVMediaPacket* pFrame, int nBufLen)

{

if (nBufLen <= 0)

return FALSE;

for (int i = 0; i < TL_NUM_DATA_POINTERS; i++)

{

pFrame-> m_data [i] = NULL; // audio and video data

pFrame-> m_linesize [i] = 0; // size of each row of data

}

pFrame->m_pBuf = (BYTE*)malloc(nBufLen*sizeof(BYTE));

pFrame->m_buf_size = nBufLen;

if (pFrame->m_pBuf)

return TRUE;

else

return FALSE;

}

void ReleaseMediaPacket(AVMediaPacket* pFrame)

{

if (pFrame->m_pBuf)

{

free(pFrame->m_pBuf);

pFrame->m_pBuf = NULL;

}

}

int main()

{

const char * pInput_test = "E: \\ C ++ \\ VideoExaminationSystem \\ \\ color of the wind field test 1.gxf";

FILE *fout = fopen("E://test.yuv", "wb+");

AVMediaPacket src_AudioFrame;

// 1. Register all components

av_register_all();

CheckEncoderDecoder(); // list the codecs supported by this FFmpeg build

// format context: the top-level structure that stores the container format information of the media file

AVFormatContext *pFormatCtx = avformat_alloc_context();

// 2. Open the input video file, read the file header information

if (avformat_open_input(&pFormatCtx, pInput_test, NULL, NULL) != 0)

{

printf("%s", "Cannot open the input file\n");

return -1;

}

// 3. Getting video file information

if (avformat_find_stream_info(pFormatCtx, NULL) < 0)

{

printf("%s", "Unable to get the stream information of the input file\n");

return -1;

}

// Get the index of the audio stream

// loop through all streams (audio, video, subtitle) to find the audio stream

int a_stream_idx = -1; // audio stream index

// number of streams

for (int i = 0; i < (int)pFormatCtx->nb_streams; i++)

{

// type of flow

if (pFormatCtx->streams[i]->codecpar->codec_type == AVMEDIA_TYPE_AUDIO)

{

a_stream_idx = i; // record the audio stream index

break;

}

}

if (a_stream_idx == -1) // the project-specific detection flags are omitted in this standalone example

{

printf("%s", "Cannot find the audio stream\n");

return -1;

}

// the decoder can only be found once the codec of the audio stream is known

// get the codec parameters of the audio stream

AVCodecParameters *pAudioCodecCtx = NULL;

AVCodec *pAudioCodec = NULL;

AVCodecContext *pAudioEnc = NULL;

AVPacket *packet = NULL;

AVFrame *pFrame = NULL;

int src_audio_buf_size = -1;

int nb_planes = -1;

pAudioCodecCtx = pFormatCtx->streams[a_stream_idx]->codecpar;

// 4. Find the decoder that matches the codec id in the codec parameters

pAudioCodec = avcodec_find_decoder(pAudioCodecCtx->codec_id);

if (pAudioCodec == NULL)

{

printf("%s", "Cannot find the audio decoder\n");

return -1;

}

pAudioEnc = avcodec_alloc_context3(pAudioCodec); // allocate and initialize the AVCodecContext; this only allocates it and does not open the decoder

/****************** START -> this step is very important: without it, decoding does not work correctly ****************/

// fill the AVCodecContext with the stream's codec parameters

if (avcodec_parameters_to_context(pAudioEnc, pFormatCtx->streams[a_stream_idx]->codecpar) < 0)

{

printf("Failed to copy codec parameters to decoder context!\n");

return -1;

}

/****************** END -> this step is very important: without it, decoding does not work correctly ****************/

// 5. Open the decoder

if (avcodec_open2(pAudioEnc, pAudioCodec, NULL) < 0)

{

printf("%s", "Cannot open the audio decoder\n");

return -1;

}

// output audio information

printf("Audio sample rate: %d\n", pAudioCodecCtx->sample_rate);

// printf("Audio frame size: %d\n", pAudioCodecCtx->frame_size);

printf("Audio channels: %d\n", pAudioCodecCtx->channels);

printf("Name of the audio decoder: %s\n", pAudioCodec->name);

std::cout << std::endl;

src_AudioFrame.m_pixel_format = pAudioCodecCtx->format;

src_AudioFrame.m_channel_count = pAudioCodecCtx->channels;

src_AudioFrame.m_sample_rate = pAudioCodecCtx->sample_rate;

//src_AudioFrame.m_nb_samples = pAudioCodecCtx->frame_size;

nb_planes = av_sample_fmt_is_planar((AVSampleFormat)pAudioCodecCtx->format) ? pAudioCodecCtx->channels : 1; // a planar format needs an array of pointers, one element per channel

src_audio_buf_size = AVCODEC_MAX_AUDIO_FRAME_SIZE * 4;

if (!MallocMediaFrameBuf(&src_AudioFrame, src_audio_buf_size))

{

return -1;

}

// fill in the audio-format-related fields of the structure

//av_samples_fill_arrays(src_AudioFrame.m_data, src_AudioFrame.m_linesize, src_AudioFrame.m_pBuf, src_AudioFrame.m_channel_count, src_AudioFrame.m_nb_samples, (AVSampleFormat)src_AudioFrame.m_pixel_format, 1);

// ready to read

// allocate an AVPacket to hold one packet of compressed data

packet = av_packet_alloc();

// allocate an AVFrame to hold the decoded audio samples

pFrame = av_frame_alloc();

int ret = -1;

int audio_frame_count = 0;

// 6. Read the compressed data packet by packet

while (av_read_frame(pFormatCtx, packet) >= 0)

{

// only handle compressed audio data (identified by the stream index)

if (packet->stream_index == a_stream_idx)

{

// 7. Decode one packet of compressed audio to obtain the audio samples

ret = avcodec_send_packet(pAudioEnc, packet);

if (ret < 0)

{

printf("%s\n", "Audio decoding error");

return -1;

}

// a single packet may yield zero or more frames, so drain the decoder

while ((ret = avcodec_receive_frame(pAudioEnc, pFrame)) == 0) // 0 means a frame was decoded

{

src_AudioFrame.m_nb_samples = pFrame->nb_samples;

// fill in the audio-format-related fields of the structure

av_samples_fill_arrays(src_AudioFrame.m_data, src_AudioFrame.m_linesize, src_AudioFrame.m_pBuf, src_AudioFrame.m_channel_count, src_AudioFrame.m_nb_samples, (AVSampleFormat)src_AudioFrame.m_pixel_format, 1);

for (int j = 0; j < nb_planes; j++)

{

// with align = 1, m_linesize[0] is the number of bytes in each plane

memcpy(src_AudioFrame.m_data[j], pFrame->data[j], src_AudioFrame.m_linesize[0]);

fwrite(src_AudioFrame.m_data[j], 1, src_AudioFrame.m_linesize[0], fout);

}

audio_frame_count++;

printf("Decode Audio Frame Number : %d\n", audio_frame_count);

}

}

// release resources

av_packet_unref(packet);

}

// free the decoded frame

av_frame_free(&pFrame);

// free the packet

av_packet_free(&packet);

// close the decoder and free its context

avcodec_free_context(&pAudioEnc);

// close the input file

avformat_close_input(&pFormatCtx);

ReleaseMediaPacket(&src_AudioFrame);

fclose(fout);

std::cout << "I: Finished audio decoding." << std::endl;

system("pause");

return 0;

}

The following problems were encountered during debugging:

- During audio decoding, pay attention to whether the audio data is in a planar format. If it is planar, the decoded audio in the AVFrame structure is spread across several planes, one per channel (data[0], data[1], ...). If it is not planar (packed), all of the decoded audio is stored in data[0] of the AVFrame structure. Whether the format is planar can be determined as follows: nb_planes = av_sample_fmt_is_planar((AVSampleFormat)pAudioCodecCtx->format) ? pAudioCodecCtx->channels : 1; // a planar format needs an array of pointers, one element per channel

- In video decoding, frame_size (the size of one frame of data) is usually available after avcodec_open2(). In audio decoding, however, frame_size was found to be 0 after avcodec_open2(). This is a real problem: during debugging it resulted in audio data that could not be decoded. The solution is to take the frame size from the decoded frame after calling avcodec_receive_frame(pAudioEnc, pFrame), i.e. src_AudioFrame.m_nb_samples = pFrame->nb_samples;, and then refill the audio-format-related fields of the structure with av_samples_fill_arrays().

Intelligent Recommendation

FFMPEG content introduction audio and video decoding and playback

Foreword Ffmpeg is an open source computer program that can be used to record, convert digital audio, video, and convert it into streams. Using LGPL or GPL license. It provides a complete solution for...

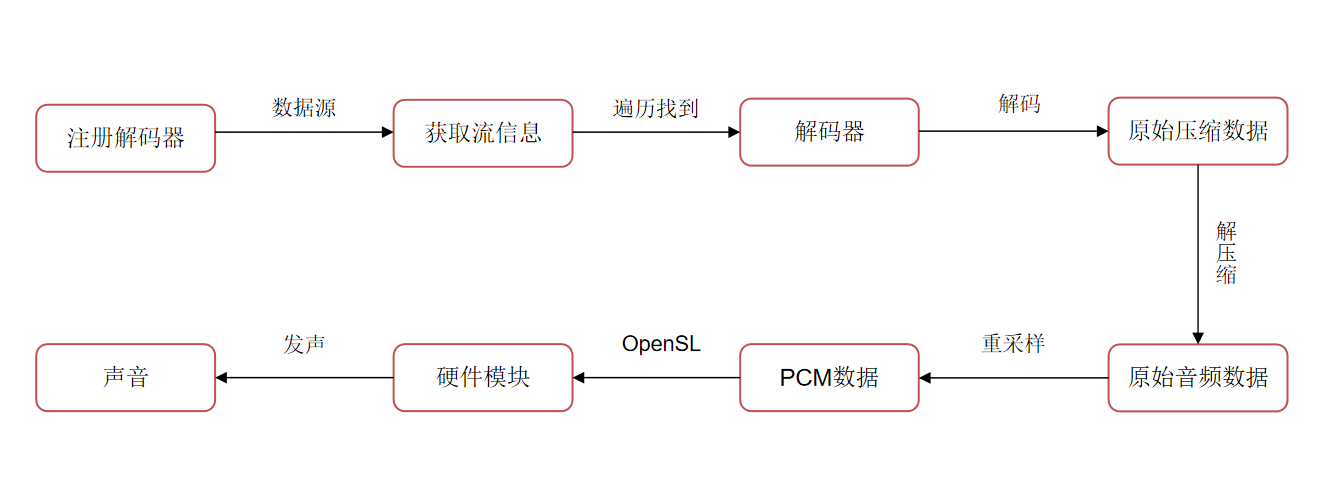

[Android audio and video development] FFmpeg decoding process

1. Introduction to video file packaging format and encoding format Video: The product of encoding the original video stream and then encapsulating it Encapsulation format: mp4, mkv, avi, mp3, m...

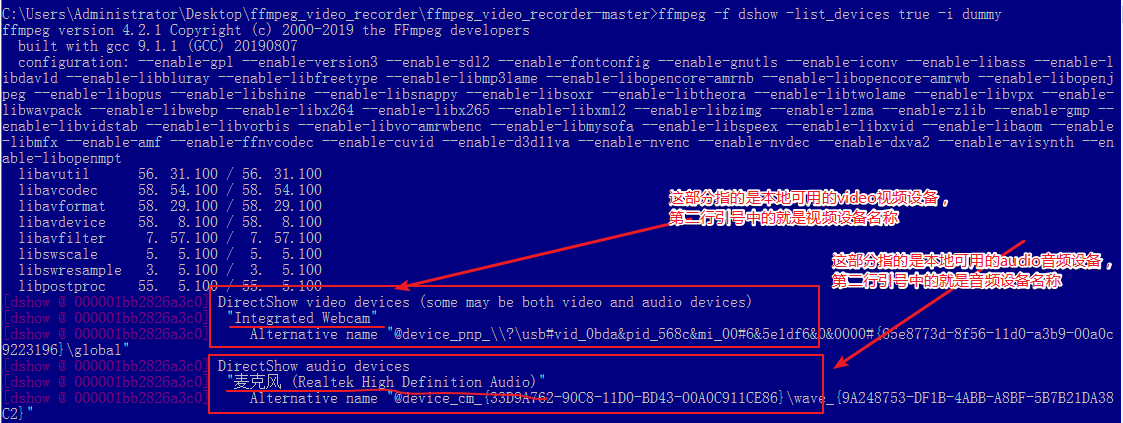

window system to realize FFmpeg recording audio and video

1. Download and install FFmpeg software: You can refer to this article: 2. Start recording audio and video: 1. First execute the following statement to see what your window system supportsDirectShow A...

(Audio and video learning notes): ffmpeg command audio and video recording

[Description] Course learning address: contents Ffmpeg Command Video Recording Audio and video recording View optional parameters for video recording View audio device optional parameters Specify para...

FFMPEG recording desktop video and microphone audio (audio video synchronization)

VS version: 2017 FFMPEG version number: ffmpeg version N-102642-g864d1ef2fc Copyright © 2000-2021 the FFmpeg developers built with gcc 8.1.0 (x86_64-win32-seh-rev0, Built by MinGW-W64 project) co...

More Recommendation

Video decoding, audio decoding, playback, etc. based on ffmpeg

Introduction to FFmpeg library avcodec: codec, including avformate: package format processing avfilter: filter special effects processing avdevice: input and output device avutil: tool library swresam...

FFMPEG audio decoding use encountered problem summary

WAV audio channel_Layout is 0 When using FFMPEG to decode WAV data, the number of channels is correct, but stream-> codecpar-> Channel_Layout is 0, if this time When performing audio data conver...

Introduction to audio and video (learning FFmpeg tutorial for iOS audio decoding and playback)

After a long time. . . . In fact, ffmpeg has special tutorials, but many of them are out of date, and I just use them as a learning process, and then record them. The knowledge points required for aud...

Some commands commonly used by ffmpeg: audio recording, video recording

1. Video and audio capture separately If you specify input formats and devices, FFMPEG can directly capture video and audio. The data captured by the camera under Linux is saved into video files: Para...

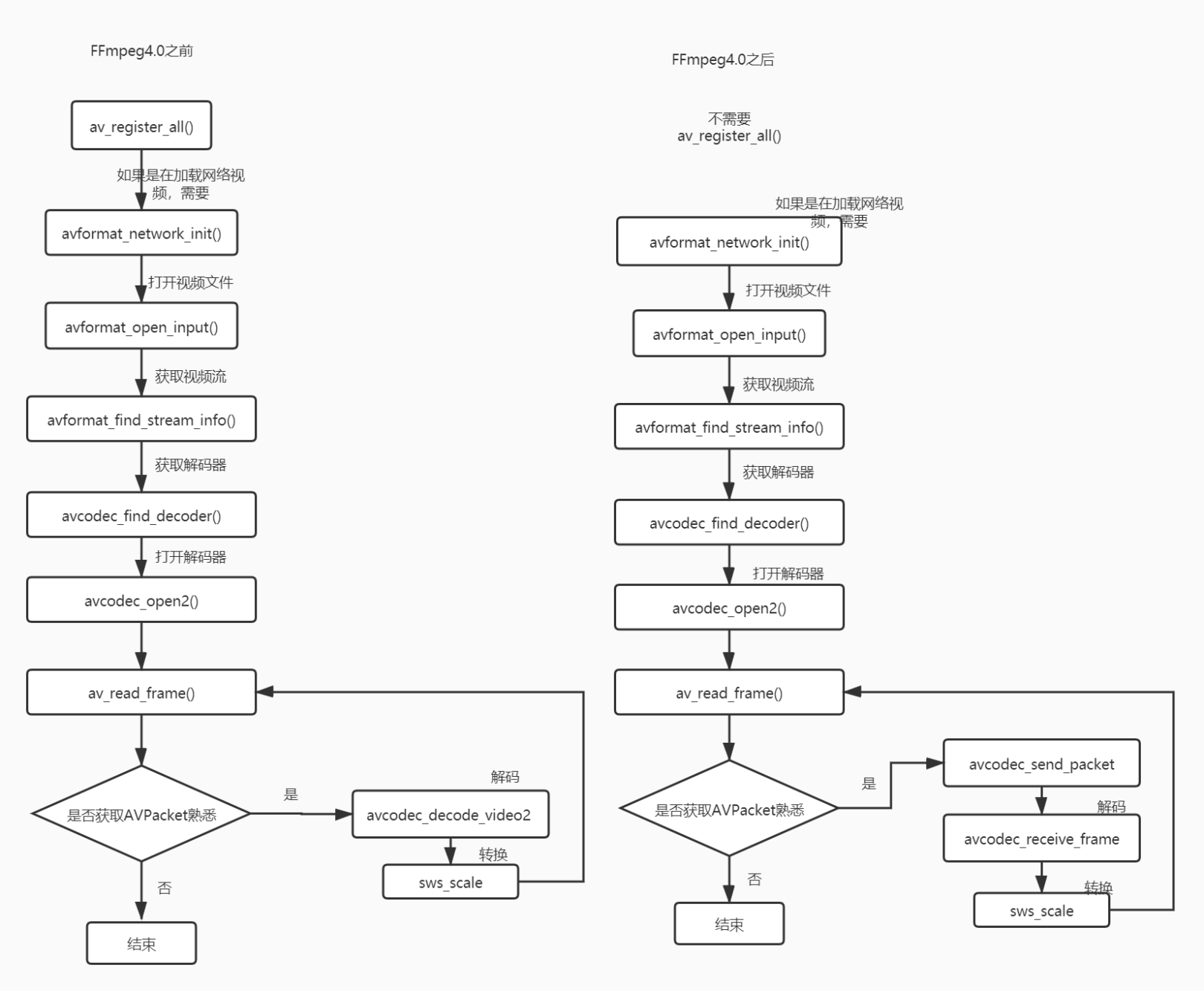

Introduction to audio and video (learn ffmpeg tutorial iOS video decoding and display)

The process of decoding using ffmpeg is fixed. Just like iOS development, from viewDidLoad, viewWillApear, viewDidAppear, Apple has already told us the order of the methods that need to be called. Wha...