[Paper Interpretation] Faster R-CNN: Real-Time Object Detection

tags: artificial intelligence Neural Networks Faster R-CNN Target Detection Computer vision

Foreword

The highlight of Faster R-CNN is that it extracts candidate boxes with an RPN. RPN stands for Region Proposal Network, which can also be understood as a region generation network or a region candidate network; it is used to extract candidate boxes. A key feature of the RPN is that it takes very little time.

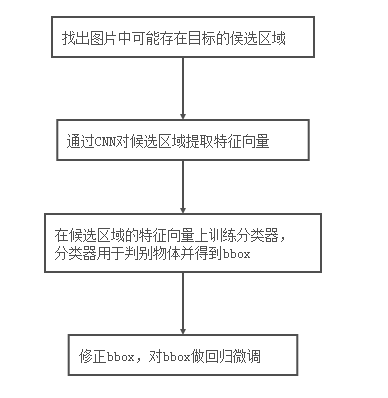

Faster R-CNN is an outstanding member of the R-CNN family of algorithms and a classic two-stage object detection algorithm. The two-stage process is:

- In the first stage, find the anchor boxes that contain an object to be detected. (This is a binary classification: background versus object.)

- In the second stage, classify the objects inside those anchor boxes.

Put simply: first generate some candidate detection boxes, then classify them. The key point is how to find these candidate boxes, which contain a target object even though its category is not yet known.

Paper address: Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks

Open source code: https://github.com/endernewton/tf-faster-rcnn

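Contents

First, network framework

Second, the overall process

Third, CNN feature extraction

Fourth, extracting candidate boxes with the RPN

4.1, RPN process

4.2, Anchor boxes on the feature maps

4.3, Judging whether anchor boxes contain objects

4.4, Correcting the bounding boxes

4.5, Proposals (the boxes most likely to contain an object)

Fifth, ROI Pooling

Sixth, classification of the proposed regions

Seventh, Faster R-CNN loss functions

Eighth, model results

Ninth, open source code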

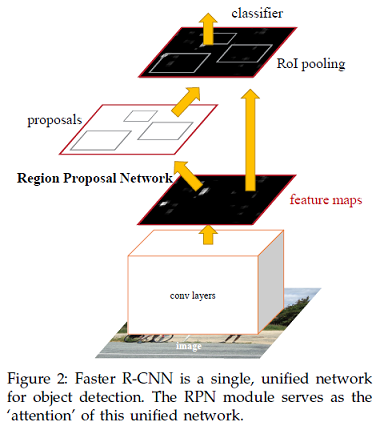

First, network framework

Faster R-CNN is mainly divided into 4 parts:

- CNN feature extraction, which generates the feature maps;

- Candidate box extraction with the RPN;

- ROI Pooling, which locates the object regions on the feature maps;

- Classification of the proposed regions.

PS: the label "ROI Poloing" in the figure above is a typo; the correct spelling is "ROI Pooling".

The 4 main parts of Faster R-CNN in more detail:

- CNN feature extraction, which generates the feature maps. A shared base of convolution layers extracts features from the entire picture. For example, VGG16 with the fully connected layers removed, leaving only the convolution layers, outputs downsampled feature maps. These feature maps are shared by the subsequent RPN layers and fully connected layers.

- Candidate box extraction with the RPN. This layer uses softmax to decide whether each anchor is positive or negative, and then uses bounding box regression to correct the anchors and obtain precise proposals.

- ROI Pooling, which locates the object regions on the feature maps. This layer takes the feature maps and the proposals as input, combines this information to extract the proposal feature maps, and feeds them to the subsequent fully connected layers to determine the target category.

- Classification of the proposed regions. This part classifies the candidate detection boxes and fine-tunes their coordinates. It uses the proposal feature maps to compute the category of each proposal, and then applies bounding box regression again to obtain the final, precise location of the detection box. (The RPN has already adjusted the predefined anchor boxes once, so this is the second adjustment.)

Second, the overall process

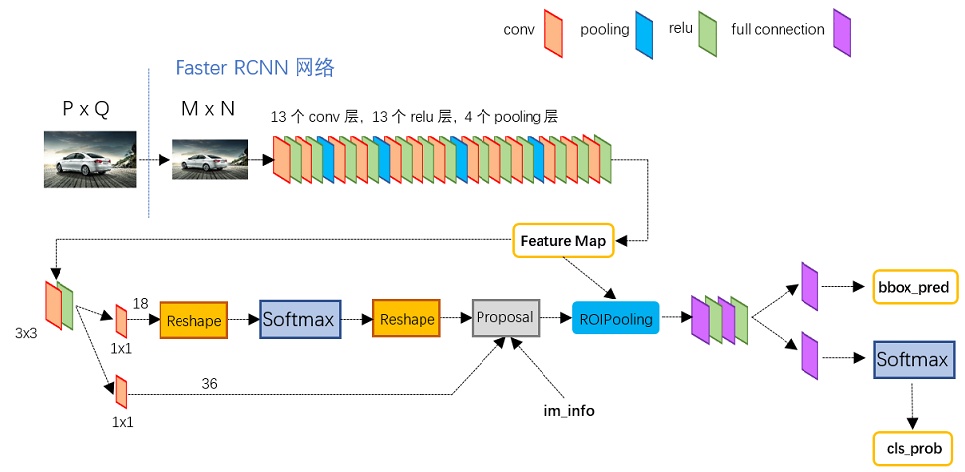

Model diagram from the article "Understanding Faster RCNN in One Article":

The input is an image of any size P x Q; the process steps are as follows:

- First, scale the image to a fixed size M x N, then send the M x N image into the network;

- The conv layers extract features based on the VGG-16 model and contain 13 conv layers + 13 ReLU layers + 4 pooling layers;

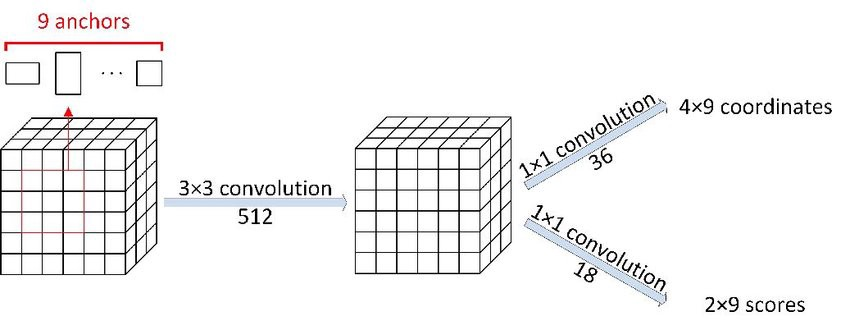

- The RPN first applies a 3x3 convolution, then generates the positive anchors (the bounding boxes that contain objects) and the corresponding bounding box regression offsets, and then computes the proposals (the boxes most likely to contain an object);

- The ROI Pooling layer uses the proposals to extract proposal features from the feature map and feeds them to the subsequent fully connected and softmax layers for classification (that is, deciding which object category each proposal belongs to).

Third, CNN feature extraction

The backbone network of Faster R-CNN can be based on the VGG16 model with the fully connected layers removed, leaving only the convolution layers, which extract features from the entire picture.

The term "backbone network" appears often in papers. Its role is to extract image features. It is not fixed and can be replaced, for example with the residual network ResNet.

The 16 in VGG-16 means that the network has 16 layers containing parameters: 13 convolution layers + 3 fully connected layers. Let's take a look at the network structure of VGG-16:

The 13 convolution layers progressively extract features, while the pooling layers progressively reduce the spatial size of the feature maps.

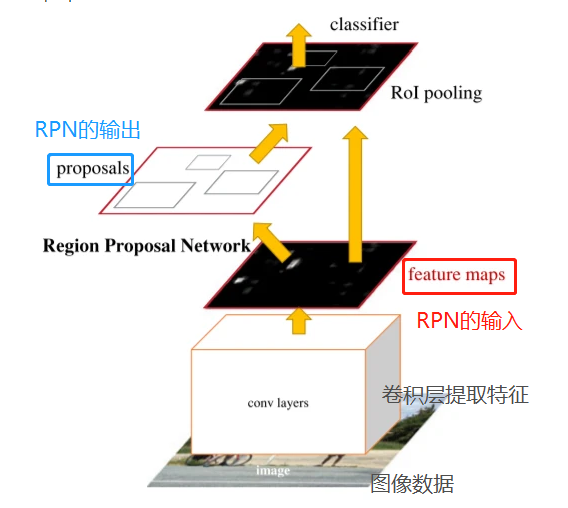
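To make the shrinking effect of the pooling layers concrete, here is a minimal sketch that computes the feature map size for a given input size. It assumes that the convolutions keep the spatial size unchanged and that each of the 4 pooling layers halves it, rounding up; the rounding rule differs between frameworks, and the 600 x 800 input is only an illustrative choice.

```python
import math


def feature_map_size(height, width, num_pools=4, pool_stride=2):
    """Return (height, width) of the feature map after the pooling layers."""
    for _ in range(num_pools):
        # Assumption: each pooling layer halves the size and rounds up.
        height = math.ceil(height / pool_stride)
        width = math.ceil(width / pool_stride)
    return height, width


print(feature_map_size(600, 800))  # (38, 50)
```

Under these assumptions a 600 x 800 input gives a 38 x 50 feature map, that is 1900 points, which matches the 1900-point example used later in this article.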

Fourth, extracting candidate boxes with the RPN

RPN stands for Region Proposal Network, which can also be understood as a region generation network or a region candidate network; it is used to extract candidate boxes.

4.1, RPN process

The task of the RPN is to find proposals. Input: feature map. Output: proposals.

Overall RPN process:

- Generate the anchors (anchor boxes).

- Determine whether each anchor box is foreground (contains an object) or background, which is a binary classification; the softmax classifier picks out the positive anchors.

- Bounding box regression fine-tunes the anchor boxes so that the positive anchors move closer to the ground truth boxes.

- The proposal layer generates the proposals.

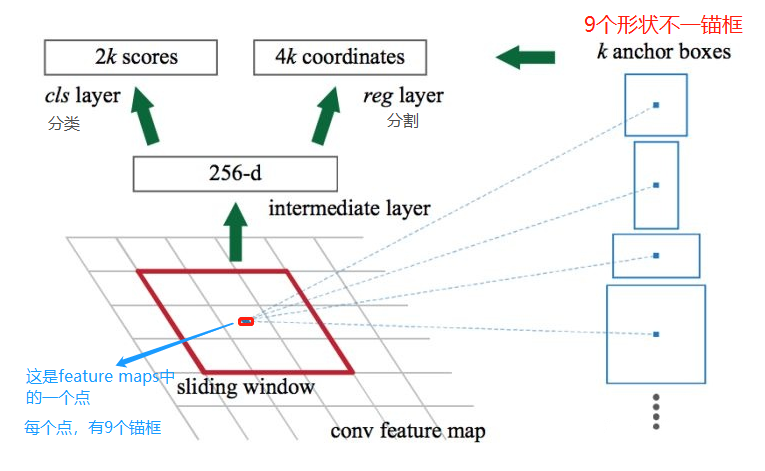

4.2, Anchor boxes on the feature maps

Each point of the feature maps is assigned 9 anchors as initial detection boxes. This is important enough to say three times:

Anchor boxes serve as the initial detection boxes! Anchor boxes serve as the initial detection boxes! Anchor boxes serve as the initial detection boxes!

Although detection boxes obtained this way are very inaccurate, their positions can be corrected later by bounding box regression.

Let's introduce the 9 anchor boxes, starting with their shapes:

There are 9 boxes in total: 3 green, 3 red, and 3 blue. They come in three shapes, with aspect ratios of 1:1, 1:2, and 2:1.
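The 9 anchors can be sketched as 3 scales combined with the 3 aspect ratios. The text above only gives the ratios; the scales (128, 256, 512) are an assumption taken from the defaults reported in the Faster R-CNN paper, and `base_anchors` is a hypothetical helper name, not a function from the open source code.

```python
import math


def base_anchors(scales=(128, 256, 512), ratios=(0.5, 1.0, 2.0)):
    """Return 9 anchors as (x1, y1, x2, y2), centered on the origin."""
    anchors = []
    for ratio in ratios:        # ratio is height / width
        for scale in scales:    # the anchor area is roughly scale * scale
            width = scale / math.sqrt(ratio)
            height = scale * math.sqrt(ratio)
            anchors.append((-width / 2, -height / 2, width / 2, height / 2))
    return anchors


anchors = base_anchors()
print(len(anchors))  # 9
```

Keeping the area fixed while changing the ratio is one common design choice: the three boxes of one scale then cover roughly the same number of pixels but differ in shape.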

4.3, Judging whether anchor boxes contain objects

The feature map is densely covered with candidate anchor boxes. Why are there so many? Because each point of the feature maps is assigned 9 anchors; if there are 1900 points, there are 1900 * 9 = 17100 anchor boxes.

If the size of the feature maps is W * H, there are W * H * 9 anchor boxes in total. (W: the width of the feature maps; H: the height of the feature maps.)
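The anchor count can be checked with a short sketch. The 50 x 38 grid is an assumption chosen because it has 1900 points, and the stride of 16 pixels per feature map point is an assumption that follows from 4 pooling layers; both helper names are hypothetical.

```python
def anchor_centers(feat_w, feat_h, stride=16):
    """Centers, in input-image pixels, of every feature map point."""
    return [((x + 0.5) * stride, (y + 0.5) * stride)
            for y in range(feat_h) for x in range(feat_w)]


def count_anchors(feat_w, feat_h, anchors_per_point=9):
    """Total number of anchor boxes: W * H * 9 by default."""
    return feat_w * feat_h * anchors_per_point


print(len(anchor_centers(50, 38)))  # 1900
print(count_anchors(50, 38))        # 17100
```

Each of the 1900 centers would receive its own copy of the 9 anchor shapes, which is where the 17100 boxes come from.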

A CNN then determines which anchor boxes are positive anchors (containing a target) and which are negative anchors (containing no target). The RPN therefore only performs a binary classification.

For the model structure of this CNN, refer to the figure below:

4.4, Correcting the bounding boxes

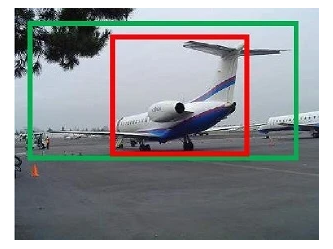

Once we know which anchor boxes contain objects (the positive anchors), how should they be adjusted so that they move closer to the ground truth?

In the picture, the red box is a positive anchor and the green box is the real box (ground truth box, GT for short).

The offset between a positive anchor and the GT can be described by four transformations: dx, dy, dw, and dh. Bounding box regression learns these four offsets through linear regression, so that the positive anchors approach the GT and yield more precise proposals.

The idea of bounding box regression is simple: first translate the box, then scale it, so that in the end the anchor box containing the object is very close to the real box.
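The translate-then-scale idea can be sketched as follows, using the parameterization from the Faster R-CNN paper: the center shift is expressed relative to the anchor size, and the size change is expressed as a logarithm. The function names are hypothetical, and boxes are assumed to be (x1, y1, x2, y2) tuples.

```python
import math


def to_center(box):
    """Convert (x1, y1, x2, y2) to (center_x, center_y, width, height)."""
    x1, y1, x2, y2 = box
    w, h = x2 - x1, y2 - y1
    return x1 + w / 2, y1 + h / 2, w, h


def encode(anchor, gt):
    """Offsets (dx, dy, dw, dh) that move the anchor onto the GT box."""
    xa, ya, wa, ha = to_center(anchor)
    x, y, w, h = to_center(gt)
    return (x - xa) / wa, (y - ya) / ha, math.log(w / wa), math.log(h / ha)


def decode(anchor, offsets):
    """Apply (dx, dy, dw, dh): translate the center, then scale the size."""
    xa, ya, wa, ha = to_center(anchor)
    dx, dy, dw, dh = offsets
    x, y = xa + dx * wa, ya + dy * ha
    w, h = wa * math.exp(dw), ha * math.exp(dh)
    return x - w / 2, y - h / 2, x + w / 2, y + h / 2
```

During training, `encode` would produce the regression targets; at inference time, `decode` would turn the predicted offsets back into a corrected box.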

4.5, Proposals (the boxes most likely to contain an object)

After judging whether each anchor box contains an object, the anchor boxes that do are corrected by regression, so that they end up very close to the real boxes. The RPN outputs these boxes together with the probability that each one contains an object.

In summary, the proposal layer has three inputs:

- The softmax classification matrix

- The bounding box regression coordinate matrix

- im_info, which stores the image scaling information

The outputs are:

- rpn_rois: the RoIs (regions of interest) generated by the RPN

- rpn_roi_probs: the probability that each RoI contains an object

The RPN only selects the regions that may contain objects (rpn_rois) and gives the probability that each contains an object (rpn_roi_probs). In subsequent processing, a threshold is set. If the probability that an RoI contains an object is greater than the threshold, the RoI is examined further; otherwise it is ignored.

The role of the entire RPN is to:

- Use a neural network to regress the detection boxes and to perform binary classification on them (positive, negative);

- Output the filtered positive detection boxes (RoIs).

The RPN outputs the proposals as an N × 5 matrix covering all RoIs, where N is the number of RoIs. The first column is the image index, and the remaining four columns are the coordinates of the upper-left and lower-right corners, given as absolute coordinates in the original image.
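The threshold filtering and the N × 5 layout can be sketched together. The names rpn_rois and rpn_roi_probs come from the text above; the function name, the threshold value of 0.7, and the sample boxes are assumptions for illustration only. Real implementations typically also apply non-maximum suppression, which this sketch leaves out.

```python
def select_rois(rpn_rois, rpn_roi_probs, image_index=0, threshold=0.7):
    """Keep RoIs above the threshold as rows [image_index, x1, y1, x2, y2]."""
    rows = []
    for (x1, y1, x2, y2), prob in zip(rpn_rois, rpn_roi_probs):
        if prob > threshold:
            rows.append([image_index, x1, y1, x2, y2])
    return rows


rois = [(10, 10, 50, 60), (0, 0, 5, 5), (30, 40, 200, 220)]
probs = [0.9, 0.2, 0.75]
for row in select_rois(rois, probs):
    print(row)
# [0, 10, 10, 50, 60]
# [0, 30, 40, 200, 220]
```

The second RoI is dropped because its probability of 0.2 is below the threshold, so N is 2 here.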

Fifth, ROI Pooling

ROI stands for Region of Interest. If the input is the original picture, the RoI is the target itself; if the input is the feature maps, the RoI is the area of the feature maps that corresponds to the target. Pooling refers to the pooling operation.

The ROI Pooling layer takes the feature maps and the proposals (the boxes most likely to contain an object) as input and combines this information to extract the proposal feature maps. These are passed on to the later layers, where fully connected operations perform target recognition and localization.

The ROI Pooling layer has 2 inputs:

- The original feature maps (the shared features produced by the CNN convolution layers);

- The proposal boxes (proposals) output by the RPN, whose sizes differ.

The RPN generates regions of different sizes on the feature map, but in Faster R-CNN the input size of the subsequent classification network is fixed at 7 * 7. For an input region of any size, the original area is therefore covered with a 7 * 7 grid.

As shown in the figure below, the red box and the green box differ in size, but both are divided into 7 * 7 cells;

In each cell of the 7 * 7 grid, take the maximum value within the area that the cell covers; this corresponds to the purple portion.
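The grid-and-maximum step can be sketched in plain Python for a single-channel feature map. This is a simplified illustration, not the code of the open source implementation: the RoI is assumed to be given in integer feature map coordinates (inclusive), and the cell boundaries use floor for the start and ceil for the end so that no cell is empty.

```python
def ceil_div(a, b):
    """Integer division rounded up."""
    return -(-a // b)


def roi_max_pool(feature, roi, out_size=7):
    """Max-pool one RoI of a 2-D feature map into an out_size x out_size grid.

    feature: list of rows; roi: (x1, y1, x2, y2) in feature map cells, inclusive.
    """
    x1, y1, x2, y2 = roi
    roi_w, roi_h = x2 - x1 + 1, y2 - y1 + 1
    pooled = []
    for i in range(out_size):
        # Rows covered by grid cell i.
        r0 = y1 + (i * roi_h) // out_size
        r1 = y1 + ceil_div((i + 1) * roi_h, out_size)
        row = []
        for j in range(out_size):
            # Columns covered by grid cell j.
            c0 = x1 + (j * roi_w) // out_size
            c1 = x1 + ceil_div((j + 1) * roi_w, out_size)
            row.append(max(feature[r][c]
                           for r in range(r0, r1) for c in range(c0, c1)))
        pooled.append(row)
    return pooled


feature = [[r * 14 + c for c in range(14)] for r in range(14)]
pooled = roi_max_pool(feature, (0, 0, 13, 13))
print(len(pooled), len(pooled[0]))  # 7 7
```

Whatever the size of the RoI, the output is always 7 x 7, which is what lets the fully connected layers that follow use a fixed input size.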

Sixth, classification of the proposed regions

The input to this part is the 7x7 = 49-element proposal feature maps produced by ROI Pooling (the features of the object).

Classification diagram from the article "Understanding Faster RCNN in One Article":

Process:

- Use the fully connected layers to compute which category each proposal belongs to, and output the probability vector cls_prob.

- At the same time, use bounding box regression again to obtain the position offset bbox_pred of each proposal, which gives a more accurate target detection box.
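As a rough illustration of the classification step, the sketch below turns the raw class scores of one proposal into a cls_prob vector with softmax and picks the most likely category. The class names and the score values are hypothetical, and the fully connected layers that would produce the scores are not modeled here.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify_proposal(class_scores, class_names):
    """Return the most likely class name and the full cls_prob vector."""
    cls_prob = softmax(class_scores)
    best = max(range(len(cls_prob)), key=cls_prob.__getitem__)
    return class_names[best], cls_prob


names = ("background", "cat", "dog")  # hypothetical categories
label, cls_prob = classify_proposal([0.1, 2.0, 0.5], names)
print(label)  # cat
```

The offset bbox_pred would then be applied to the proposal in the same translate-and-scale manner that the RPN stage uses for anchors.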

Seventh, Faster R-CNN loss functions

Loss function

Both the RPN and the classification network output "coordinate regression values" and "classification values", so the loss function of both networks can be written in the following form:

$$L(\{p_i\}, \{t_i\}) = \frac{1}{N_{cls}} \sum_i L_{cls}(p_i, p_i^*) + \lambda \frac{1}{N_{reg}} \sum_i p_i^* L_{reg}(t_i, t_i^*)$$

Here $p_i$ is the predicted probability for sample $i$, $p_i^*$ is its ground-truth label (1 for a positive sample, 0 for a negative one), and $t_i$ and $t_i^*$ are the predicted and ground-truth box offsets.

$L_{cls}$ is the classification loss function. A binary classification loss is used in the RPN, and a multi-class loss is used in the classification network.

$\lambda$ is a factor used to balance the proportion of the classification loss and the regression loss.

The factor $p_i^*$ in front of $L_{reg}$ acts as a switch: only positive samples (boxes containing objects) contribute to the box regression loss.

Positive samples fall into two cases: (1) Anchor boxes whose IoU with a ground truth box is greater than 0.7; this finds most of the ordinary positive samples. (2) The anchor box with the highest IoU for a ground truth box; this covers the rare objects for which every anchor has a small IoU. For example, if all IoU values are below 0.6, the box with the highest IoU is still selected.
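The two cases can be sketched with a small IoU helper. This is a minimal illustration under the assumption that boxes are (x1, y1, x2, y2) tuples with positive area; the function names and the sample boxes are hypothetical, and the handling of negative or ignored anchors is left out.

```python
def iou(a, b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    return inter / (area_a + area_b - inter)


def positive_anchor_indices(anchors, gt_boxes, threshold=0.7):
    """Indices of the anchors that count as positive samples."""
    positives = set()
    for gt in gt_boxes:
        ious = [iou(anchor, gt) for anchor in anchors]
        # Case 1: every anchor whose IoU exceeds the threshold.
        positives.update(i for i, v in enumerate(ious) if v > threshold)
        # Case 2: the best anchor for this GT box, even if its IoU is low.
        positives.add(max(range(len(ious)), key=ious.__getitem__))
    return sorted(positives)


anchors = [(0, 0, 10, 10), (0, 0, 10, 9), (20, 20, 30, 30), (40, 40, 60, 60)]
gt_boxes = [(0, 0, 10, 10), (42, 42, 50, 50)]
print(positive_anchor_indices(anchors, gt_boxes))  # [0, 1, 3]
```

Anchors 0 and 1 qualify through case 1 (IoU of 1.0 and 0.9 with the first box). Anchor 3 has an IoU of only 0.16 with the second box but is still selected through case 2, because no other anchor overlaps that box better.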

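$L_{reg}$ is the regression loss function, where $t_i$ and $t_i^*$ are the predicted and ground-truth box offsets. The authors use the robust loss (smooth L1) in the paper; the expression is as follows:

$$L_{reg}(t_i, t_i^*) = \sum_{j \in \{x, y, w, h\}} \mathrm{smooth}_{L1}(t_{i,j} - t_{i,j}^*), \qquad \mathrm{smooth}_{L1}(x) = \begin{cases} 0.5x^2 & \text{if } |x| < 1 \\ |x| - 0.5 & \text{otherwise} \end{cases}$$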
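The smooth L1 loss and its combination with the classification loss can be sketched for the binary (RPN) case. This is a simplified illustration rather than the training code of the open source implementation: both terms are normalized by the number of samples, and the balancing factor defaults to 1.0, both of which are assumptions made here to keep the example short.

```python
import math


def smooth_l1(x):
    """Quadratic near zero, linear for large errors."""
    ax = abs(x)
    return 0.5 * x * x if ax < 1 else ax - 0.5


def detection_loss(probs, labels, t_pred, t_true, lam=1.0):
    """Classification loss plus lam times the regression loss.

    probs:  predicted probability of being positive, one per sample
    labels: 1 for positive samples, 0 for negative samples
    t_pred, t_true: predicted and target (dx, dy, dw, dh) per sample
    """
    n = len(labels)
    # Binary cross-entropy over all samples.
    cls = sum(-math.log(p if y == 1 else 1.0 - p)
              for p, y in zip(probs, labels)) / n
    # The label acts as a switch: only positive samples add regression loss.
    reg = sum(y * sum(smooth_l1(a - b) for a, b in zip(tp, tt))
              for y, tp, tt in zip(labels, t_pred, t_true)) / n
    return cls + lam * reg


print(smooth_l1(0.5), smooth_l1(2.0))  # 0.125 1.5
```

Because smooth L1 grows only linearly for large errors, a single badly placed box influences the gradient less than it would under a squared loss.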

Eighth, model results

Model data:

Model result 1 is as follows:

Model result 2 is as follows:

Ninth, open source code

Open source code: https://github.com/endernewton/tf-faster-rcnn

A Faster R-CNN implementation for object detection. This version of the code supports the VGG16, ResNet V1, and MobileNet V1 models.

Code operating environment:

System: Ubuntu 16.04 (x64)

Language: Python 3.5

Deep learning framework: TensorFlow 1.0 (GPU version)

Other dependencies: cv2, Cython, EasyDict 1.6, NumPy, etc.

References for this article:

Target detection-Faster R-CNN paper reading

Paper address: Faster R-CNN: Towards Real-Time Object Detection with Region Proposal Networks

Open source code: https://github.com/endernewton/tf-faster-rcnn

This article is provided for reference and learning only. Thank you.
