Ceph Async network communication source code analysis (1)

tags: Ceph Async Msgr Telecommunication

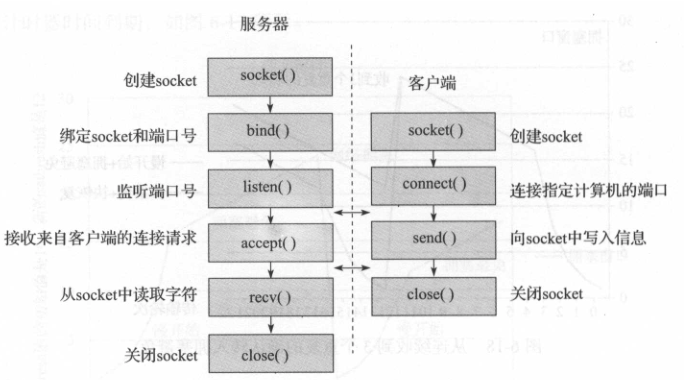

Early versions of Ceph used the Simple messenger for network communication. It was adopted first, and deployed in production, because its implementation is straightforward. Its biggest flaw is that it creates two threads for every Connection, one for receiving messages and one for sending them. In a large cluster the number of connections grows quickly, so the number of communication threads grows with it, and the resulting overhead seriously hurts performance.

Starting with the L release (Luminous), Ceph uses the Async messenger as its default communication model. Async is built on IO multiplexing and uses a shared thread pool to send and receive messages asynchronously.

This article introduces Async's epoll + thread pool model, covering the basic framework and the key implementation points. It first gives an overview of the related classes, focusing on their data structures and operations. It then walks through the core network flows: how the server-side socket listens for and accepts connections, how the client actively initiates a connection, and how messages are sent and received.

Basic class introduction

NetHandler

The NetHandler class wraps the basic socket operations.
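To make "basic socket operations" concrete, here is a minimal sketch of three such helpers written directly against POSIX. The `sketch_*` names are hypothetical and this is not Ceph's implementation; it only illustrates the kind of `fcntl`/`setsockopt` calls a wrapper like this typically hides, assuming a Linux or other POSIX system.

```cpp
#include <fcntl.h>
#include <netinet/in.h>
#include <sys/socket.h>
#include <unistd.h>
#include <cerrno>

// Hypothetical helper (not Ceph code): create a TCP socket,
// optionally with SO_REUSEADDR. Returns the fd or -errno.
int sketch_create_socket(int domain, bool reuse_addr) {
  int sd = ::socket(domain, SOCK_STREAM, 0);
  if (sd < 0) return -errno;
  if (reuse_addr) {
    int on = 1;
    if (::setsockopt(sd, SOL_SOCKET, SO_REUSEADDR, &on, sizeof(on)) < 0) {
      int err = errno;
      ::close(sd);
      return -err;
    }
  }
  return sd;
}

// Hypothetical helper: switch a descriptor to non-blocking mode.
// Returns 0 on success or -errno.
int sketch_set_nonblock(int sd) {
  int flags = ::fcntl(sd, F_GETFL);
  if (flags < 0) return -errno;
  if (::fcntl(sd, F_SETFL, flags | O_NONBLOCK) < 0) return -errno;
  return 0;
}

// Hypothetical helper: mark a descriptor close-on-exec, so that it is
// closed automatically in a child process started via exec.
int sketch_set_close_on_exec(int sd) {
  int flags = ::fcntl(sd, F_GETFD);
  if (flags < 0) return -errno;
  if (::fcntl(sd, F_SETFD, flags | FD_CLOEXEC) < 0) return -errno;
  return 0;
}
```

Non-blocking mode matters for an event-driven design: a worker thread that serves many connections cannot afford to stall inside a single `read` or `connect`.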

class NetHandler {

int generic_connect(const entity_addr_t& addr,

const entity_addr_t& bind_addr,

bool nonblock);

CephContext *cct;

public:

explicit NetHandler(CephContext *c): cct(c) {}

//Create socket

int create_socket(int domain, bool reuse_addr=false);

//Set the socket as non-blocking

int set_nonblock(int sd);

//Set close-on-exec, so the socket is closed in a child process started via exec

void set_close_on_exec(int sd);

//Set the socket options: nodelay, buffer size

int set_socket_options(int sd, bool nodelay, int size);

//connect

int connect(const entity_addr_t &addr, const entity_addr_t& bind_addr);

//Reconnection

int reconnect(const entity_addr_t &addr, int sd);

//Non-blocking connect

int nonblock_connect(const entity_addr_t &addr, const entity_addr_t& bind_addr);

//Set priority

void set_priority(int sd, int priority);

}

Worker class

The Worker class is an abstract interface for worker threads. It also declares the listen and connect interfaces used for server-side and client-side network processing. Each Worker owns an EventCenter, which stores the events that the Worker handles.

class Worker {

std::atomic_uint references;

EventCenter center; //Event processing center; handles all events of this Worker

// server side

virtual int listen(entity_addr_t &addr,

const SocketOptions &opts, ServerSocket *) = 0;

// client actively connects

virtual int connect(const entity_addr_t &addr,

const SocketOptions &opts, ConnectedSocket *socket) = 0;

}

PosixWorker

The PosixWorker class implements the Worker interface.

class PosixWorker : public Worker {

NetHandler net;

int listen(entity_addr_t &sa,

const SocketOptions &opt,

ServerSocket *socks) override;

int connect(const entity_addr_t &addr,

const SocketOptions &opts,

ConnectedSocket *socket) override;

}

int PosixWorker::listen(entity_addr_t &sa, const SocketOptions &opt,ServerSocket *sock)

PosixWorker::listen implements the server side: it calls into NetHandler to create the socket, performs bind, listen and the related operations on it, and returns the result through the ServerSocket object.

int PosixWorker::connect(const entity_addr_t &addr, const SocketOptions &opts, ConnectedSocket *socket)

PosixWorker::connect implements the active (client-side) connection request and returns the result through the ConnectedSocket object.
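The two paths can be sketched with plain POSIX calls. This is an illustration under assumptions, not PosixWorker's code: `sketch_listen` and `sketch_connect` are hypothetical names, a raw `sockaddr_in` stands in for `entity_addr_t`, and a plain file descriptor stands in for `ServerSocket` / `ConnectedSocket`.

```cpp
#include <arpa/inet.h>
#include <netinet/in.h>
#include <sys/socket.h>
#include <unistd.h>
#include <cerrno>
#include <cstring>

// Server side (hypothetical sketch): socket -> bind -> listen.
// If addr.sin_port is 0 the kernel picks a port; the chosen address
// is written back into addr. Returns the listening fd or -errno.
int sketch_listen(sockaddr_in &addr, int backlog) {
  int sd = ::socket(AF_INET, SOCK_STREAM, 0);
  if (sd < 0) return -errno;
  int on = 1;
  ::setsockopt(sd, SOL_SOCKET, SO_REUSEADDR, &on, sizeof(on));
  socklen_t len = sizeof(addr);
  if (::bind(sd, reinterpret_cast<sockaddr *>(&addr), sizeof(addr)) < 0 ||
      ::listen(sd, backlog) < 0 ||
      ::getsockname(sd, reinterpret_cast<sockaddr *>(&addr), &len) < 0) {
    int err = errno;
    ::close(sd);
    return -err;
  }
  return sd;
}

// Client side (hypothetical sketch): socket -> connect.
// Returns the connected fd or -errno.
int sketch_connect(const sockaddr_in &addr) {
  int sd = ::socket(AF_INET, SOCK_STREAM, 0);
  if (sd < 0) return -errno;
  if (::connect(sd, reinterpret_cast<const sockaddr *>(&addr),
                sizeof(addr)) < 0) {
    int err = errno;
    ::close(sd);
    return -err;
  }
  return sd;
}
```

In an event-driven messenger the listening descriptor would then be registered for read events, and `accept` would be called from the event callback rather than in a blocking loop.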

NetworkStack

class NetworkStack : public CephContext::ForkWatcher {

std::string type; //Type of NetworkStack

ceph::spinlock pool_spin;

bool started = false;

//Worker work queue

unsigned num_workers = 0;

vector<Worker*> workers;

}

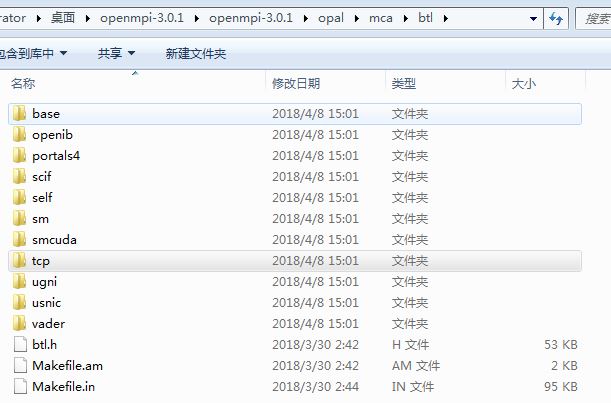

NetworkStack is the interface to the network protocol stack. PosixNetworkStack implements it on top of the Linux TCP/IP stack, DPDKStack on top of DPDK, and RDMAStack on top of InfiniBand (IB).

class PosixNetworkStack : public NetworkStack {

vector<int> coreids;

vector<std::thread> threads; //Thread Pool

}

A Worker can be thought of as a worker thread: each Worker corresponds to exactly one thread. To stay compatible with the design of the other protocol stacks, the threads themselves are defined in the PosixNetworkStack class.

From the analysis above, each Worker corresponds to one thread and to one EventCenter, its event processing center.
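The one-to-one relationship between Worker, thread and event center can be sketched as follows. All `Sketch*` types are hypothetical stand-ins: the center here is just a callback queue guarded by a condition variable, whereas the real EventCenter waits on an IO multiplexer.

```cpp
#include <atomic>
#include <condition_variable>
#include <deque>
#include <functional>
#include <memory>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical stand-in for EventCenter: a queue of callbacks.
struct SketchCenter {
  std::mutex lock;
  std::condition_variable cond;
  std::deque<std::function<void()>> events;
  bool stopping = false;

  void dispatch(std::function<void()> f) {
    { std::lock_guard<std::mutex> g(lock); events.push_back(std::move(f)); }
    cond.notify_one();
  }
  void stop() {
    { std::lock_guard<std::mutex> g(lock); stopping = true; }
    cond.notify_one();
  }
  // Runs callbacks until stop() was called and the queue is drained.
  void run() {
    std::unique_lock<std::mutex> l(lock);
    for (;;) {
      cond.wait(l, [this] { return stopping || !events.empty(); });
      if (events.empty()) return;  // only reachable when stopping
      std::function<void()> f = std::move(events.front());
      events.pop_front();
      l.unlock();
      f();  // run the callback without holding the lock
      l.lock();
    }
  }
};

// One worker owns exactly one center.
struct SketchWorker {
  unsigned id = 0;
  SketchCenter center;
};

// The stack owns the workers and, separately, one thread per worker.
class SketchStack {
  std::vector<std::unique_ptr<SketchWorker>> workers;
  std::vector<std::thread> threads;
public:
  explicit SketchStack(unsigned n) {
    for (unsigned i = 0; i < n; ++i) {
      workers.emplace_back(new SketchWorker());
      workers.back()->id = i;
    }
    for (auto &w : workers) {
      SketchWorker *p = w.get();
      threads.emplace_back([p] { p->center.run(); });
    }
  }
  SketchWorker *get_worker(unsigned i) { return workers[i].get(); }
  void stop() {
    for (auto &w : workers) w->center.stop();
    for (auto &t : threads) if (t.joinable()) t.join();
  }
  ~SketchStack() { stop(); }
};
```

Because every connection is pinned to one worker, all callbacks for that connection run on the same thread, which avoids most locking inside the connection itself.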

EventDriver

EventDriver is an abstract interface that defines an interface for adding event listeners, deleting event listeners, and obtaining triggered events.

class EventDriver {

public:

virtual ~EventDriver() {} // we want a virtual destructor!!!

virtual int init(EventCenter *center, int nevent) = 0;

virtual int add_event(int fd, int cur_mask, int mask) = 0;

virtual int del_event(int fd, int cur_mask, int del_mask) = 0;

virtual int event_wait(vector<FiredFileEvent> &fired_events, struct timeval *tp) = 0;

virtual int resize_events(int newsize) = 0;

virtual bool need_wakeup() { return true; }

};

Each IO multiplexing mechanism has its own implementation: SelectDriver uses select, EpollDriver uses epoll, and KqueueDriver uses the kqueue event model of FreeBSD.
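As an illustration of how such a driver maps onto epoll, here is a minimal Linux-only sketch. `SketchEpollDriver`, `SketchFiredEvent` and the `SKETCH_*` mask bits are hypothetical names; the method shapes loosely follow the interface above, but the timeout is simplified to milliseconds and the EventCenter argument is dropped.

```cpp
#include <sys/epoll.h>
#include <unistd.h>
#include <cerrno>
#include <vector>

// Hypothetical mask bits for this sketch.
enum { SKETCH_READABLE = 1, SKETCH_WRITABLE = 2 };

struct SketchFiredEvent { int fd; int mask; };

class SketchEpollDriver {
  int epfd = -1;
  std::vector<epoll_event> events;  // buffer filled by epoll_wait

  // Translate the sketch mask into epoll flags and apply it.
  int ctl(int op, int fd, int mask) {
    epoll_event ee = epoll_event();
    ee.data.fd = fd;
    if (mask & SKETCH_READABLE) ee.events |= EPOLLIN;
    if (mask & SKETCH_WRITABLE) ee.events |= EPOLLOUT;
    return ::epoll_ctl(epfd, op, fd, &ee) < 0 ? -errno : 0;
  }
public:
  ~SketchEpollDriver() { if (epfd >= 0) ::close(epfd); }

  int init(int nevent) {  // nevent must be > 0
    events.resize(nevent);
    epfd = ::epoll_create1(EPOLL_CLOEXEC);
    return epfd < 0 ? -errno : 0;
  }
  // cur_mask: what is already registered for fd; add_mask: bits to add.
  int add_event(int fd, int cur_mask, int add_mask) {
    int op = cur_mask == 0 ? EPOLL_CTL_ADD : EPOLL_CTL_MOD;
    return ctl(op, fd, cur_mask | add_mask);
  }
  // Removes del_mask; drops the fd entirely once no bits remain.
  int del_event(int fd, int cur_mask, int del_mask) {
    int mask = cur_mask & ~del_mask;
    int op = mask == 0 ? EPOLL_CTL_DEL : EPOLL_CTL_MOD;
    return ctl(op, fd, mask);
  }
  // Waits up to timeout_ms; returns the number of fired events or -errno.
  int event_wait(std::vector<SketchFiredEvent> &fired, int timeout_ms) {
    int n = ::epoll_wait(epfd, events.data(),
                         static_cast<int>(events.size()), timeout_ms);
    if (n < 0) return -errno;
    fired.clear();
    for (int i = 0; i < n; ++i) {
      SketchFiredEvent fe;
      fe.fd = events[i].data.fd;
      fe.mask = 0;
      if (events[i].events & EPOLLIN) fe.mask |= SKETCH_READABLE;
      if (events[i].events & EPOLLOUT) fe.mask |= SKETCH_WRITABLE;
      fired.push_back(fe);
    }
    return n;
  }
};
```

Passing the current mask into `add_event` and `del_event` lets the driver choose between `EPOLL_CTL_ADD`, `EPOLL_CTL_MOD` and `EPOLL_CTL_DEL` without keeping its own per-fd table.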

EventCenter

The EventCenter class saves all events and provides related functions for handling events.

FileEvent

The FileEvent event is the event corresponding to the socket.

struct FileEvent {

int mask; //Event mask (which events are registered for this fd)

EventCallbackRef read_cb; //Callback function for processing read operations

EventCallbackRef write_cb; //Callback function for processing write operations

FileEvent(): mask(0), read_cb(NULL), write_cb(NULL) {}

};

TimeEvent

struct TimeEvent {

uint64_t id; //ID number of time event

EventCallbackRef time_cb; //Callback function for event processing

TimeEvent(): id(0), time_cb(NULL) {}

};

The Poller class is used for polling events. It is mainly used in DPDK mode and is not used in PosixStack mode.

EventCenter

class EventCenter {

//External events

std::mutex external_lock;

std::atomic_ulong external_num_events;

deque<EventCallbackRef> external_events;

//socket event, its subscript is the fd corresponding to the socket

vector<FileEvent> file_events;

//Time event [expire time point, TimeEvent]

std::multimap<clock_type::time_point, TimeEvent> time_events;

//Map of time events [id, iterator of [expire time point, time_event]]

std::map<uint64_t,

std::multimap<clock_type::time_point, TimeEvent>::iterator> event_map;

//Fd that triggers execution of external events

int notify_receive_fd;

int notify_send_fd;

EventCallbackRef notify_handler;

//The underlying event monitoring mechanism

EventDriver *driver;

NetHandler net;

// Used by internal thread

//Create file event

int create_file_event(int fd, int mask, EventCallbackRef ctxt);

//Create time event

uint64_t create_time_event(uint64_t milliseconds, EventCallbackRef ctxt);

//Delete file event

void delete_file_event(int fd, int mask);

//Delete time event

void delete_time_event(uint64_t id);

//Handle the event

int process_events(int timeout_microseconds);

//Wake up the processing thread

void wakeup();

// Used by external thread

void dispatch_event_external(EventCallbackRef e);

}

The EventCenter class mainly stores events (file events, time events and external events) and provides the functions that handle them.
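The two time-event containers work as a pair: the multimap keeps events ordered by expiry time, and the id map makes it possible to delete an event by id without scanning. A minimal sketch of that idea follows; `SketchTimeEvents` is a hypothetical class, `std::function` stands in for `EventCallbackRef`, and the caller passes `now` explicitly so the behaviour is deterministic.

```cpp
#include <chrono>
#include <cstddef>
#include <cstdint>
#include <functional>
#include <map>
#include <utility>

class SketchTimeEvents {
public:
  typedef std::chrono::steady_clock clock_type;
  typedef std::function<void()> Callback;

private:
  struct TimeEvent { uint64_t id; Callback cb; };
  typedef std::multimap<clock_type::time_point, TimeEvent> Table;

  Table time_events;                           // ordered by expiry time
  std::map<uint64_t, Table::iterator> event_map;  // id -> position in table
  uint64_t next_id = 0;

public:
  // Registers cb to run at 'expire'; returns the id of the new event.
  uint64_t create(clock_type::time_point expire, Callback cb) {
    TimeEvent ev;
    ev.id = ++next_id;
    ev.cb = std::move(cb);
    uint64_t id = ev.id;
    event_map[id] = time_events.insert(std::make_pair(expire, std::move(ev)));
    return id;
  }

  // Cancels a pending event; unknown ids are ignored.
  void remove(uint64_t id) {
    std::map<uint64_t, Table::iterator>::iterator i = event_map.find(id);
    if (i == event_map.end()) return;
    time_events.erase(i->second);
    event_map.erase(i);
  }

  // Runs every event whose expiry time is <= now; returns how many ran.
  int process(clock_type::time_point now) {
    int n = 0;
    while (!time_events.empty() && time_events.begin()->first <= now) {
      Table::iterator it = time_events.begin();
      Callback cb = std::move(it->second.cb);
      event_map.erase(it->second.id);
      time_events.erase(it);
      cb();  // unlinked first, so the callback may add or remove events
      ++n;
    }
    return n;
  }

  std::size_t pending() const { return time_events.size(); }
};
```

A multimap rather than a map is needed because several events may share the same expiry time point.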

Handling events

int EventCenter::process_events(int timeout_microseconds, ceph::timespan *working_dur)

The function process_events handles the pending events. Its flow is as follows:

- If there are external events, or the center is in poller mode, the blocking time (the timeout passed to epoll_wait) is set to 0.

- Otherwise the default timeout is the timeout_microseconds parameter. If the nearest time event expires sooner than timeout_microseconds from now, the timeout is shortened to the interval between the current time and that expiry time, and trigger_time is set to true so that time events are processed afterwards.

- Call epoll_wait to get the triggered events, then loop over them and call the corresponding callback for each.

- Handle the expired time events.

- Handle all external events.
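The first two steps amount to choosing how long the multiplexer may block. A minimal sketch of that decision as a pure function is shown below; `sketch_choose_timeout` and `SketchWait` are hypothetical, and the ordering of the checks is one reasonable reading of the steps above, not a copy of the Ceph code.

```cpp
#include <cstdint>

struct SketchWait {
  int64_t timeout_us;  // how long the multiplexer may block
  bool trigger_time;   // whether time events should be processed afterwards
};

// timeout_us:      caller-supplied upper bound
// must_not_block:  external events are pending, or poller mode is active
// has_time_event:  at least one time event is registered
// until_expiry_us: time until the nearest time event expires (may be <= 0)
SketchWait sketch_choose_timeout(int64_t timeout_us, bool must_not_block,
                                 bool has_time_event,
                                 int64_t until_expiry_us) {
  SketchWait w = { timeout_us, false };
  if (has_time_event && until_expiry_us < timeout_us) {
    // Wake up in time for the nearest timer; never pass a negative timeout.
    w.timeout_us = until_expiry_us > 0 ? until_expiry_us : 0;
    w.trigger_time = true;
  }
  if (must_not_block) {
    w.timeout_us = 0;  // just poll, there is other work waiting
  }
  return w;
}
```

For example, with a 30000 microsecond default and a timer due in 5000 microseconds, the wait is shortened to 5000 and `trigger_time` is set.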

Here, internal events are the events obtained through epoll_wait. External events are events delivered from elsewhere, for example to handle an active connection request or to trigger sending of a newly queued message.

The EventCenter class defines two methods for delivering external events to an EventCenter:

//Post an EventCallback directly

void EventCenter::dispatch_event_external(EventCallbackRef e)

//Post an arbitrary callable of type func

void submit_to(int i, func &&f, bool nowait = false)
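External delivery needs two things: a locked queue that any thread can push to, and a way to wake the owning thread out of its wait. A common way to do the latter is a pipe whose read end is watched by the multiplexer, which matches the notify_receive_fd / notify_send_fd pair in the class above. The sketch below assumes that design; `SketchExternalQueue` is a hypothetical class, not the EventCenter implementation.

```cpp
#include <fcntl.h>
#include <unistd.h>
#include <cerrno>
#include <deque>
#include <functional>
#include <mutex>

class SketchExternalQueue {
  std::mutex external_lock;
  std::deque<std::function<void()>> external_events;
  int notify_receive_fd = -1;  // read end, watched by the owning thread
  int notify_send_fd = -1;     // write end, used by any thread

public:
  ~SketchExternalQueue() {
    if (notify_receive_fd >= 0) ::close(notify_receive_fd);
    if (notify_send_fd >= 0) ::close(notify_send_fd);
  }

  int init() {
    int fds[2];
    if (::pipe(fds) < 0) return -errno;
    notify_receive_fd = fds[0];
    notify_send_fd = fds[1];
    // Non-blocking on both ends: draining and waking must never stall.
    for (int i = 0; i < 2; ++i) {
      int flags = ::fcntl(fds[i], F_GETFL);
      if (flags < 0 || ::fcntl(fds[i], F_SETFL, flags | O_NONBLOCK) < 0)
        return -errno;
    }
    return 0;
  }

  int receive_fd() const { return notify_receive_fd; }

  // Callable from any thread: queue the callback, then wake the owner.
  void dispatch_event_external(std::function<void()> e) {
    {
      std::lock_guard<std::mutex> g(external_lock);
      external_events.push_back(std::move(e));
    }
    wakeup();
  }

  void wakeup() {
    char c = 'c';
    ssize_t r = ::write(notify_send_fd, &c, 1);
    (void)r;  // a full pipe already guarantees a pending wakeup
  }

  // Called by the owning thread; returns the number of callbacks run.
  int process_external() {
    char buf[256];
    while (::read(notify_receive_fd, buf, sizeof(buf)) > 0) {
      // discard wakeup bytes
    }
    std::deque<std::function<void()>> cur;
    {
      std::lock_guard<std::mutex> g(external_lock);
      cur.swap(external_events);
    }
    for (std::function<void()> &e : cur) e();
    return static_cast<int>(cur.size());
  }
};
```

Swapping the queue out under the lock keeps the critical section short and lets callbacks post further external events without deadlocking.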

AsyncMessenger

The AsyncMessenger class mainly manages AsyncConnection objects. All Connection-related state is stored in it.

class AsyncMessenger : public SimplePolicyMessenger {

//Current Connection

ceph::unordered_map<entity_addr_t, AsyncConnectionRef> conns;

//Connection being accepted

set<AsyncConnectionRef> accepting_conns;

//Connection to be deleted

set<AsyncConnectionRef> deleted_conns;

}
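How the three containers might interact can be sketched as a small registry. Everything here is an assumption made for illustration: `SketchMessenger` and its method names are hypothetical, a string stands in for `entity_addr_t`, and the state transitions are inferred only from the field comments above.

```cpp
#include <cstddef>
#include <memory>
#include <set>
#include <string>
#include <unordered_map>

struct SketchConnection {
  std::string peer;
  explicit SketchConnection(const std::string &p) : peer(p) {}
};
typedef std::shared_ptr<SketchConnection> SketchConnectionRef;

class SketchMessenger {
  std::unordered_map<std::string, SketchConnectionRef> conns;  // established
  std::set<SketchConnectionRef> accepting_conns;  // handshake in progress
  std::set<SketchConnectionRef> deleted_conns;    // waiting to be reaped

public:
  // Client side: reuse the connection to addr, or create one.
  SketchConnectionRef get_connection(const std::string &addr) {
    auto it = conns.find(addr);
    if (it != conns.end()) return it->second;
    SketchConnectionRef c = std::make_shared<SketchConnection>(addr);
    conns[addr] = c;
    return c;
  }

  // Server side: a socket was accepted, the peer is not yet identified.
  SketchConnectionRef add_accept() {
    SketchConnectionRef c = std::make_shared<SketchConnection>("");
    accepting_conns.insert(c);
    return c;
  }

  // Handshake finished: promote the connection into conns.
  void accept_done(const SketchConnectionRef &c, const std::string &addr) {
    c->peer = addr;
    accepting_conns.erase(c);
    conns[addr] = c;
  }

  // Connection closed: unlink it now, destroy it later in reap().
  void mark_down(const std::string &addr) {
    auto it = conns.find(addr);
    if (it == conns.end()) return;
    deleted_conns.insert(it->second);
    conns.erase(it);
  }

  // Drops the deferred connections; returns how many were released.
  std::size_t reap() {
    std::size_t n = deleted_conns.size();
    deleted_conns.clear();
    return n;
  }

  std::size_t established() const { return conns.size(); }
  std::size_t accepting() const { return accepting_conns.size(); }
};
```

Deferring destruction through a separate set is useful when the connection may still be referenced by an event callback that is currently running.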

Processor class

class Processor {

NetHandler net;

Worker *worker;

ServerSocket listen_socket; //Listening socket

EventCallbackRef listen_handler; //accept handler: a C_processor_accept callback, which calls Processor::accept()

}

AsyncConnection

class AsyncConnection : public Connection {

//Corresponding socket

ConnectedSocket cs;

//Send message queue

map<int, list<pair<bufferlist, Message*> > > out_q;

//The corresponding worker thread

Worker *worker;

//The corresponding event center; all events of this Connection are handled by this center

EventCenter *center;

}
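The shape of out_q, a map from priority to a list of messages, suggests FIFO order within a priority and priority order across lists. The sketch below assumes that a larger number means a higher priority and is sent first; `SketchOutQueue` is hypothetical and stores plain strings instead of `bufferlist` / `Message*` pairs.

```cpp
#include <list>
#include <map>
#include <string>

class SketchOutQueue {
  // priority -> messages queued at that priority, oldest first
  std::map<int, std::list<std::string>> out_q;

public:
  void enqueue(int priority, const std::string &msg) {
    out_q[priority].push_back(msg);
  }

  bool empty() const { return out_q.empty(); }

  // Pops the oldest message of the highest priority into *msg.
  // Returns false if nothing is queued.
  bool next(std::string *msg) {
    if (out_q.empty()) return false;
    std::map<int, std::list<std::string>>::reverse_iterator it = out_q.rbegin();
    int priority = it->first;
    *msg = it->second.front();
    it->second.pop_front();
    // Remove drained priorities so rbegin() always has a message.
    if (it->second.empty()) out_q.erase(priority);
    return true;
  }
};
```

Because `std::map` keeps its keys sorted, `rbegin()` always points at the largest priority, so no separate heap is needed.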

Intelligent Recommendation

[Ceph] Simplemanssager communication code analysis

2.1 Server end code Instance code: CEPH-12.0.0 \ SRC \ Test \ Messenger \ Simple_Server.cc Resolve the address string to the address of the entity_addr_t class Create SimpleMessen...

OpenMPI source code analysis: network communication principle (1)

The principle of network communication in MPI needs to solve the following problems: 1. What network protocol does MPI use for communication? 2. On which machine is the central database stored? 3. If ...

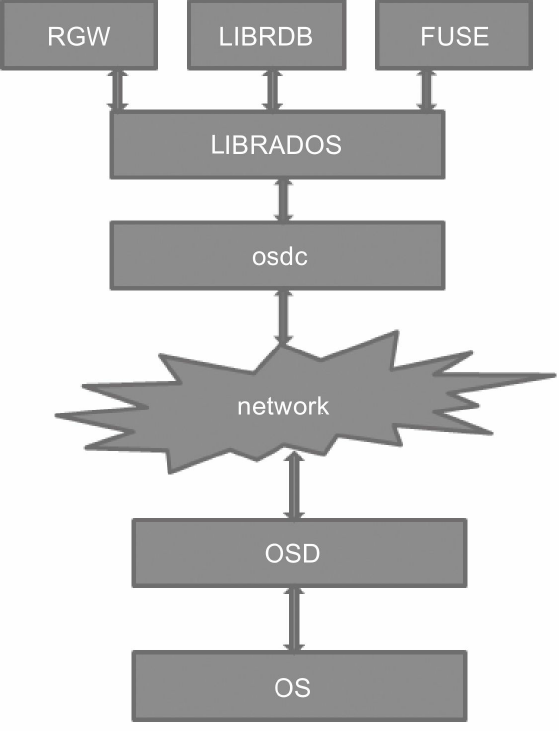

Ceph source code series (3): Ceph OSDC source code analysis (1 of 2)

Reprinted:Ceph OSDC source code analysis 1. What is OSDC OSDC is actually aosd cThe abbreviation of the lient module is used in both rbd and cephfs applications. This module is mainly used to interact...

"Ceph source code analysis" - Chapter 2, Section 1 Object

This section of the book is from Chapter 2 of the chapter "Ceph Source Analysis" by Huazhang Publishing House. Section 2.1 Object, author Chang Tao, more chapters can be accessed by the Huaq...

"Ceph source code analysis"-Chapter 1, Section 5 RADOS

This section is an excerpt from Chapter 1, Section 1.5 RADOS in the book "Ceph Source Code Analysis" by Huazhang Publishing House, author Chang Tao. For more chapters, please visit the publi...

More Recommendation

Ceph learning-CRUSH algorithm and source code analysis (1)

The CRUSH algorithm solves the problem of how PG copies are distributed on the cluster OSD. This article first introduces the basic principles of the CRUSH algorithm and related data structures, mainl...

Ceph Timer source code analysis

Ceph Timer source code analysis The ceph timer is mainly used to implement certain timing tasks, such as heartbeat between osd and heartbeat between monitors. source file: src/common/timer.h src/commo...

Syntax analysis in ceph source code

Article Directory 1. MDSContext::vec Object code Key words Parsing 2. C_IO_Wrapper Object code Key words Parsing 3. MDSGatherBuilder Object code Key words Parsing to sum up This article selects severa...

"Ceph Source Code Analysis"-Guide

The excerpt of this section comes from the introduction in the book "Ceph Source Code Analysis" by Huazhang Publishing House, author Chang Tao. For more chapters, please visit the public acc...

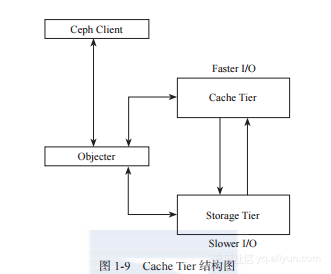

Ceph source code analysis-librados

Why can't 80% of programmers be architects? >>> The module of librados is used on the client to access the rados object storage device, and its structure is as follows: As shown i...