OpenStack container network project Kuryr (libnetwork)

Reposted from https://zhuanlan.zhihu.com/p/24554386

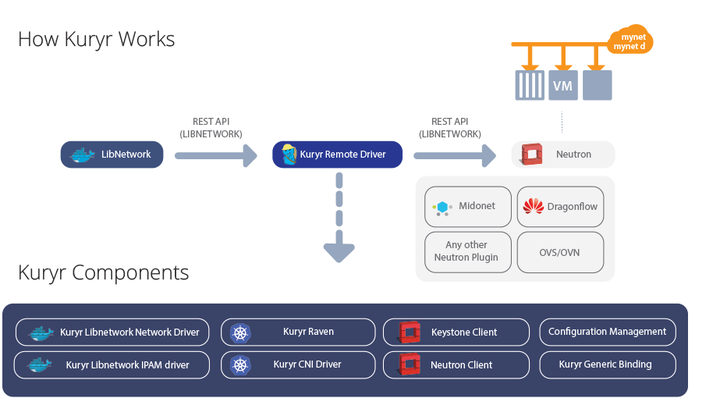

Containers have become very popular in recent years, and many projects have looked at combining containers with SDN. Kuryr is one of them. Under the OpenStack big tent, the Kuryr project aims to connect container networking with OpenStack Neutron. At first glance, Kuryr looks like yet another project under the Neutron framework, one that lets Neutron's unified northbound interface control an SDN implementation for container networks. In fact it is the other way around: Kuryr uses Neutron as its southbound interface. Kuryr's northbound side is the container network interface, and its southbound side is OpenStack Neutron.

Kuryr background introduction

Before the formal introduction, a word about the name. Kuryr comes from the Czech word kurýr, which corresponds to courier in English: a messenger, a person who delivers letters. The name suggests that Kuryr does not produce information itself; it only carries it around the network world. The project icon makes the same point. And since both words share a Latin origin, it can be said, somewhat irresponsibly, that Kuryr is pronounced much like courier.

When Kuryr was first founded, its purpose was to connect Docker with Neutron and bring Neutron's network services to Docker. As containers developed, container networking split into two main camps: Docker's native CNM (Container Network Model) and the more widely compatible CNI (Container Network Interface). Kuryr has two corresponding branches: kuryr-libnetwork (CNM) and kuryr-kubernetes (CNI).

That is the background of the Kuryr project. Now let's look at kuryr-libnetwork.

How Kuryr works in Libnetwork

kuryr-libnetwork is a plugin that runs under the Libnetwork framework. To understand how it works, first look at Libnetwork. Libnetwork is an independent project created by modularizing the network logic of Docker Engine and libcontainer, and it replaces the original Docker Engine network subsystem. Libnetwork defines a flexible model that uses local or remote drivers to provide network services to containers. kuryr-libnetwork is a remote driver implementation for Libnetwork, and it is now one of the remote drivers recommended on the official Docker website.

A Libnetwork driver can be seen as a Docker plugin that shares the plugin management framework used by Docker's other plugins. In other words, a Libnetwork remote driver is activated in the same way as other plugins in Docker Engine and uses the same protocol. The interfaces that a Libnetwork remote driver needs to implement are described in detail in the Libnetwork git repository.

All kuryr-libnetwork has to do is implement these interfaces, as can be seen from the kuryr-libnetwork code. Libnetwork calls the remote driver's Plugin.Activate interface to find out what the driver implements. The kuryr-libnetwork code shows that it implements two functions: NetworkDriver and IpamDriver.

    @app.route('/Plugin.Activate', methods=['POST'])
    def plugin_activate():
        """Returns the list of the implemented drivers.

        This function returns the list of the implemented drivers defaults to
        ``[NetworkDriver, IpamDriver]`` in the handshake of the remote driver,
        which happens right before the first request against Kuryr.

        See the following link for more details about the spec:

          docker/libnetwork  # noqa
        """
        app.logger.debug("Received /Plugin.Activate")
        return flask.jsonify(const.SCHEMA['PLUGIN_ACTIVATE'])

How does Kuryr get registered in Libnetwork as a remote driver? The better question is: how does Libnetwork discover Kuryr? This relies on Docker's plugin discovery mechanism. When a user or container needs a Docker plugin, it only has to specify the plugin's name. Docker then looks in the corresponding directory for a file with the same name as the plugin; that file defines how to connect to the plugin.

If you install kuryr-libnetwork with devstack, the devstack script creates a folder at /usr/lib/docker/plugins/kuryr. The file in it has very simple content, by default: http://127.0.0.1:23750. In other words, kuryr-libnetwork actually runs an HTTP server that provides all the interfaces Libnetwork requires. Once Docker finds this file, it uses the address in it to communicate with Kuryr.
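As a rough illustration of the discovery step, the sketch below looks up a plugin by name in a directory and returns the address stored in its file. The `kuryr.spec` file name, the `find_plugin_address` helper, and the temporary directory are assumptions made for the example; Docker's real lookup also handles `.sock` and `.json` files and searches more than one path.

```python
import tempfile
from pathlib import Path


def find_plugin_address(plugin_dir: Path, name: str) -> str:
    """Return the URL stored in <plugin_dir>/<name>/<name>.spec.

    Hypothetical helper: it only mimics the lookup-by-name idea and
    checks a single location.
    """
    spec_file = plugin_dir / name / f"{name}.spec"
    return spec_file.read_text().strip()


# Recreate the layout described in the text inside a temporary directory.
with tempfile.TemporaryDirectory() as tmp:
    plugin_dir = Path(tmp)
    (plugin_dir / "kuryr").mkdir()
    (plugin_dir / "kuryr" / "kuryr.spec").write_text("http://127.0.0.1:23750\n")
    address = find_plugin_address(plugin_dir, "kuryr")

print(address)
```

The returned string is the base URL that later plugin calls would be sent to.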

So the interaction between Libnetwork and Kuryr is like this:

- Libnetwork: Someone wants to use a plugin called Kuryr; let me find it. Oh, hello Kuryr, what functions do you have?

- Kuryr: I have NetworkDriver and IpamDriver. Will that do?
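The exchange above can be sketched as a tiny request dispatcher. This is a simplified model and not Kuryr's code: it leaves out the HTTP transport entirely, the `handle` function is a hypothetical name, and the `Implements` and `Err` keys follow the Docker plugin handshake format as I understand it.

```python
import json

# Drivers this hypothetical plugin claims to implement.
PLUGIN_ACTIVATE = {"Implements": ["NetworkDriver", "IpamDriver"]}


def handle(path: str, body: bytes = b"") -> bytes:
    """Answer a plugin request; only the handshake is modelled here."""
    if path == "/Plugin.Activate":
        return json.dumps(PLUGIN_ACTIVATE).encode()
    # Anything else is reported as an error in this sketch.
    return json.dumps({"Err": f"unsupported call: {path}"}).encode()


reply = json.loads(handle("/Plugin.Activate"))
print(reply["Implements"])
```

A real remote driver would expose the same answer over HTTP POST and add handlers for the NetworkDriver and IpamDriver calls.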

How Kuryr connects with Neutron

The previous section covered how Kuryr connects with Docker Libnetwork. Now let's look at how Kuryr connects with OpenStack Neutron. Since Kuryr is a project in the OpenStack camp and both are written in Python, there is no suspense: Kuryr uses neutronclient to talk to Neutron. So overall, Kuryr works as follows:

Because Neutron still sits between Kuryr and the actual L2 implementation underneath, Kuryr does not depend much on that L2 implementation. The figure shows some of the Neutron L2 implementations supported by Kuryr, as listed by Gal Sagie. Beyond these, I have tried integrating kuryr-libnetwork with Dragonflow and found little that needs special attention; I may cover that separately when I get the chance.

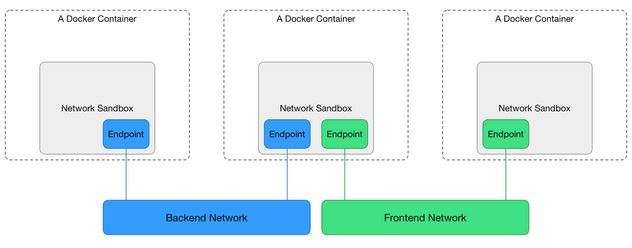

Next, let's see how kuryr-libnetwork acts as a courier between Neutron and Docker. Since its northbound side is Libnetwork and its southbound side is Neutron, what kuryr-libnetwork does is receive Libnetwork's resource model and translate it into Neutron's resource model. First, Libnetwork's resource model, which is CNM, one of the two container networking camps mentioned earlier. CNM consists of three data models:

- Network Sandbox: Defines the network configuration of the container

- Endpoint: The network card used by the container to connect to the network. It exists in the Sandbox. There can be multiple Endpoints in a Sandbox.

- Network: It is equivalent to a Switch, and the Endpoint is connected to the Network. Different networks are isolated.

Mapped to Neutron, an Endpoint is a Neutron Port and a Network is a Neutron Subnet. Why does Network not map to Neutron's Network? Probably because Libnetwork and Neutron define a network differently; still, when a Neutron Network contains only one Subnet, the two at least correspond in name.

Kuryr also relies on another OpenStack Neutron feature: subnetpool. A subnetpool is a purely logical concept in Neutron that guarantees that the IP address ranges of all subnets in the pool do not overlap. Kuryr uses this to ensure that the IP addresses of the Docker Networks it provides are unique.
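The non-overlap guarantee can be illustrated with Python's standard `ipaddress` module. The `SubnetPool` class below is a toy stand-in written for this example, not the Neutron API, and the address ranges are made up.

```python
import ipaddress


class SubnetPool:
    """Toy stand-in for a Neutron subnetpool (not the Neutron API)."""

    def __init__(self, prefix: str) -> None:
        self.prefix = ipaddress.ip_network(prefix)
        self.allocated = []

    def allocate(self, cidr: str):
        subnet = ipaddress.ip_network(cidr)
        # A subnet must lie inside the pool's prefix ...
        if not subnet.subnet_of(self.prefix):
            raise ValueError(f"{subnet} is outside pool {self.prefix}")
        # ... and must not overlap anything already handed out.
        for existing in self.allocated:
            if subnet.overlaps(existing):
                raise ValueError(f"{subnet} overlaps {existing}")
        self.allocated.append(subnet)
        return subnet


pool = SubnetPool("10.10.0.0/16")
pool.allocate("10.10.1.0/24")
pool.allocate("10.10.2.0/24")
try:
    pool.allocate("10.10.1.128/25")  # sits inside 10.10.1.0/24
    overlap_rejected = False
except ValueError:
    overlap_rejected = True
print(overlap_rejected)
```

Because every Docker Network gets its subnet from the same pool, two networks can never end up with clashing address ranges.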

Kuryr converts the request from Libnetwork into the corresponding Neutron request and sends it to Neutron.
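A minimal sketch of such a conversion is shown below, for an endpoint creation request. It is not Kuryr's implementation: the `endpoint_to_port_request` helper, the port naming scheme, and all IDs are assumptions, the Libnetwork keys follow the remote driver's endpoint creation request as I understand it, and no request is actually sent to Neutron.

```python
import ipaddress


def endpoint_to_port_request(create_endpoint: dict, neutron_network_id: str,
                             subnet_id: str) -> dict:
    """Translate a Libnetwork endpoint request into a Neutron port body."""
    iface = create_endpoint.get("Interface") or {}
    port = {
        "network_id": neutron_network_id,
        # Naming scheme is an assumption made for this example.
        "name": create_endpoint["EndpointID"] + "-port",
        "admin_state_up": True,
    }
    if iface.get("Address"):
        # Libnetwork passes the address in CIDR form; Neutron wants a bare IP.
        ip = ipaddress.ip_interface(iface["Address"]).ip
        port["fixed_ips"] = [{"subnet_id": subnet_id, "ip_address": str(ip)}]
    if iface.get("MacAddress"):
        port["mac_address"] = iface["MacAddress"]
    return {"port": port}


request = {
    "NetworkID": "docker-net-1",
    "EndpointID": "endpoint-1",
    "Interface": {"Address": "10.10.1.5/24", "MacAddress": "fa:16:3e:00:00:01"},
}
body = endpoint_to_port_request(request, "neutron-net-1", "neutron-subnet-1")
print(body["port"]["fixed_ips"])
```

The resulting dictionary has the shape of a port creation body that a Neutron client could submit.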

Kuryr connects container network and virtual machine network

However, the actual network plumbing cannot be requested through Neutron's API. Neutron does not know how to connect to a container network, nor does it provide an API for that. This part has to be done by Kuryr itself, and it is where Kuryr's magic lies (otherwise, how would it differ from an agent?). Let's look at this part last.

When Docker creates a container and needs to create an Endpoint, the request is sent to Kuryr as the remote driver of Libnetwork. Upon receiving this request, Kuryr will first create a Neutron port:

    neutron_port, subnets = _create_or_update_port(
        neutron_network_id, endpoint_id, interface_cidrv4,
        interface_cidrv6, interface_mac)

After that, the driver selected in the configuration file is called. The currently supported drivers are veth, which connects containers running on the host, and nested, which connects containers running inside a virtual machine. Host and virtual machine here are relative to OpenStack; strictly speaking, an OpenStack host can itself be a virtual machine, as in my development environment. Let's take the veth driver as an example. First the code:

    try:
        with ip.create(ifname=host_ifname, kind=KIND,
                       reuse=True, peer=container_ifname) as host_veth:
            if not utils.is_up(host_veth):
                host_veth.up()
        with ip.interfaces[container_ifname] as container_veth:
            utils._configure_container_iface(
                container_veth, subnets,
                fixed_ips=port.get(utils.FIXED_IP_KEY),
                mtu=mtu, hwaddr=port[utils.MAC_ADDRESS_KEY].lower())
    except pyroute2.CreateException:
        raise exceptions.VethCreationFailure(
            'Virtual device creation failed.')
    except pyroute2.CommitException:
        raise exceptions.VethCreationFailure(
            'Could not configure the container virtual device networking.')

    try:
        stdout, stderr = _configure_host_iface(
            host_ifname, endpoint_id, port_id,
            port['network_id'], port.get('project_id') or port['tenant_id'],
            port[utils.MAC_ADDRESS_KEY],
            kind=port.get(constants.VIF_TYPE_KEY),
            details=port.get(constants.VIF_DETAILS_KEY))
    except Exception:
        with excutils.save_and_reraise_exception():
            utils.remove_device(host_ifname)

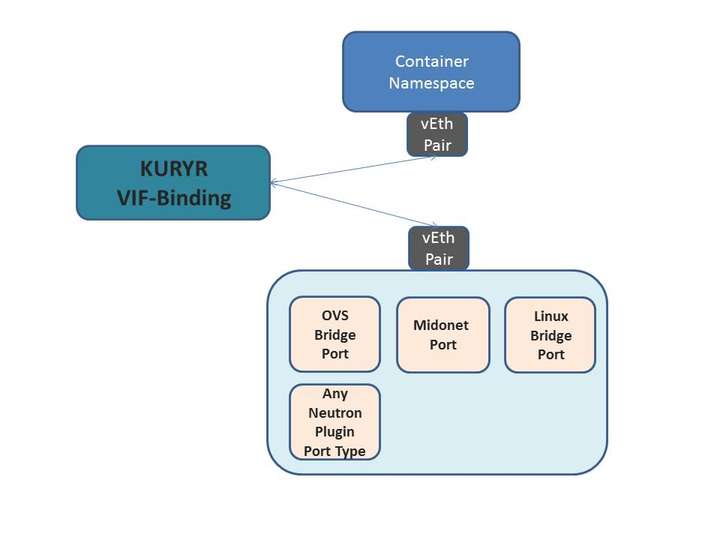

Similar to bridge mode in Docker networking, the driver first creates a veth pair, that is, two network interfaces. One is the container interface, which is placed in the container's network namespace and configured by calling _configure_container_iface; the other is the host interface, which is attached to Neutron's L2 topology by calling _configure_host_iface.

The handling of the host interface is tailored to OpenStack Neutron. Note that different SDN backends have different L2 topologies, for example Open vSwitch, LinuxBridge, MidoNet, and so on. How does Kuryr support different L2 backends? Look at the port information in OpenStack Neutron and you will find an attribute called binding:vif_type, which indicates which L2 backend the port lives on. Kuryr ships shell scripts for the different L2 backends that attach a given network interface to Neutron's L2 topology. These scripts are located in the /usr/libexec/kuryr directory and correspond one to one with the values of binding:vif_type. So what Kuryr has to do is read the Neutron port information, find the matching shell script, and call it to attach the host interface of the veth pair to the OpenStack Neutron L2 topology. Once attached, the container is in the same L2 network as the virtual machines and can naturally communicate with them. It can also use the services Neutron provides, such as security groups and QoS.

Currently the L2 network types supported by Kuryr are: bridge, iovisor, midonet, ovs, tap, unbound.

Wait, isn't this similar to the way OpenStack Nova uses Neutron? Nova calls the Neutron API to create a port, and Nova itself creates the actual network interface and attaches it to the virtual machine.
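The lookup from binding:vif_type to a script can be sketched as follows. This is an illustration only: the `binding_script` and `bind_command` helpers are hypothetical, the argument order passed to the script is made up, and the command is built but never executed.

```python
from pathlib import PurePosixPath

BINDING_DIR = PurePosixPath("/usr/libexec/kuryr")  # directory named in the text
SUPPORTED_VIF_TYPES = {"bridge", "iovisor", "midonet", "ovs", "tap", "unbound"}


def binding_script(port: dict, binding_dir: PurePosixPath = BINDING_DIR) -> PurePosixPath:
    """Pick the script whose name equals the port's binding:vif_type."""
    vif_type = port.get("binding:vif_type")
    if vif_type not in SUPPORTED_VIF_TYPES:
        raise ValueError(f"no binding script for vif_type {vif_type!r}")
    return binding_dir / vif_type


def bind_command(port: dict, host_ifname: str) -> list:
    """Build (but do not run) a command line; the arguments are illustrative."""
    return [str(binding_script(port)), "bind", port["id"], host_ifname]


port = {"id": "port-1", "binding:vif_type": "ovs"}
command = bind_command(port, "tap-port-1")
print(command)
```

Keeping one script per vif_type means that supporting a new L2 backend only requires adding a script with the matching name.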

We will cover kuryr-kubernetes next time.

Intelligent Recommendation

Install kuryr devstack

The installation uses the centos 7.2 system. During the installation process, some software is used by other operating systems, so the installation process may not be successfully completed, but the s...

Libnetwork CNM framework and implementation

Introduction LibnetworkStarting from docker1.6, Lib is gradually extracted from the network part of the docker project to provide an abstract container network model for other applications (such as do...

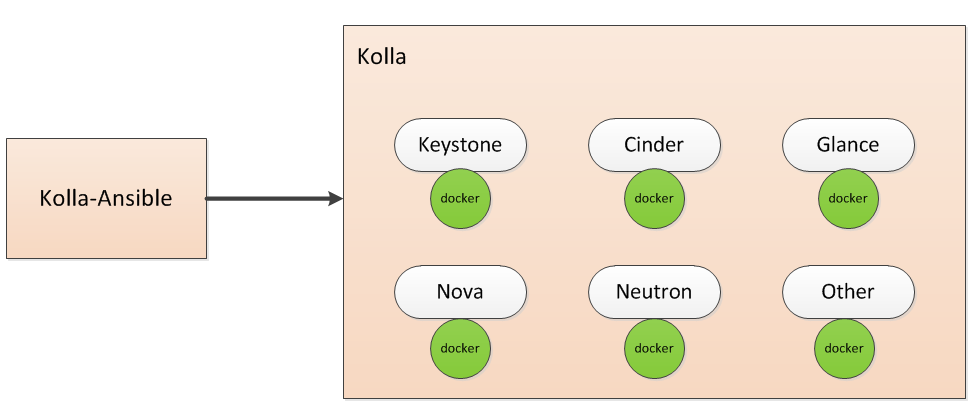

The correct posture for deploying OpenStack in a container

At present, IaaS open source technology represented by OpenStack and PaaS/CaaS container technology represented by Docker are becoming more and more mature. How to combine the two has been a focus are...

openstack rabbitmq container enable rabbitmq_tracing

openstack rabbitmq container enable rabbitmq_tracing MoveSmall stationGet a better experience. surroundings: OpenStack pike with kolla-ansible deployment os: centos7.3 operating: Enter rabbitmq contai...

Openstack network detailed

This article is basically a reference.http://www.mirantis.com/blog/Several English blogs, translated and organized, detailed the network model of the openstack Essex version. Although Quantum was laun...

More Recommendation

Openstack network debugging notes

Openstack network debugging notes surroundings controller 192.168.100.x/24 172.17.0.1/24 Intranet docker source: docker run -d -name docker-registry2 -p 1000:5000 registry Openstack: docker deployment...

Openstack (neutron network build)

Enter the database #mysql -uroot -proot Create a neutron database >create database neutron Set permissions on the neutron database >GRANT ALL PRIVILEGES ON neutron.* TO ‘neutron’@&ls...

Openstack network foundation

I. Overview Network virtualization is the most important part of cloud computing. This article details the principles, usage, and data flow of various network devices abstracted by Linux. In this arti...

Openstack network works

Openstack network works Examples are assigned to the subnet, to enable network connectivity Each project can have one or multiple subnets Red Hat's Openstack platform, Openstack network service is the...