5 Netty thread model


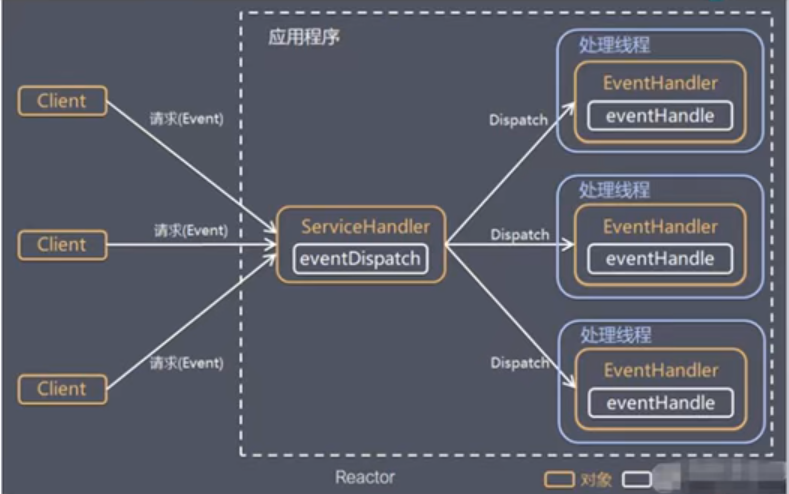

When we discuss the Netty threading model, the classic Reactor threading model usually comes to mind first. Although NIO frameworks implement the Reactor pattern differently, they all follow its basic threading model.

Let us review the classic Reactor threading model.

5.1 Reactor thread model

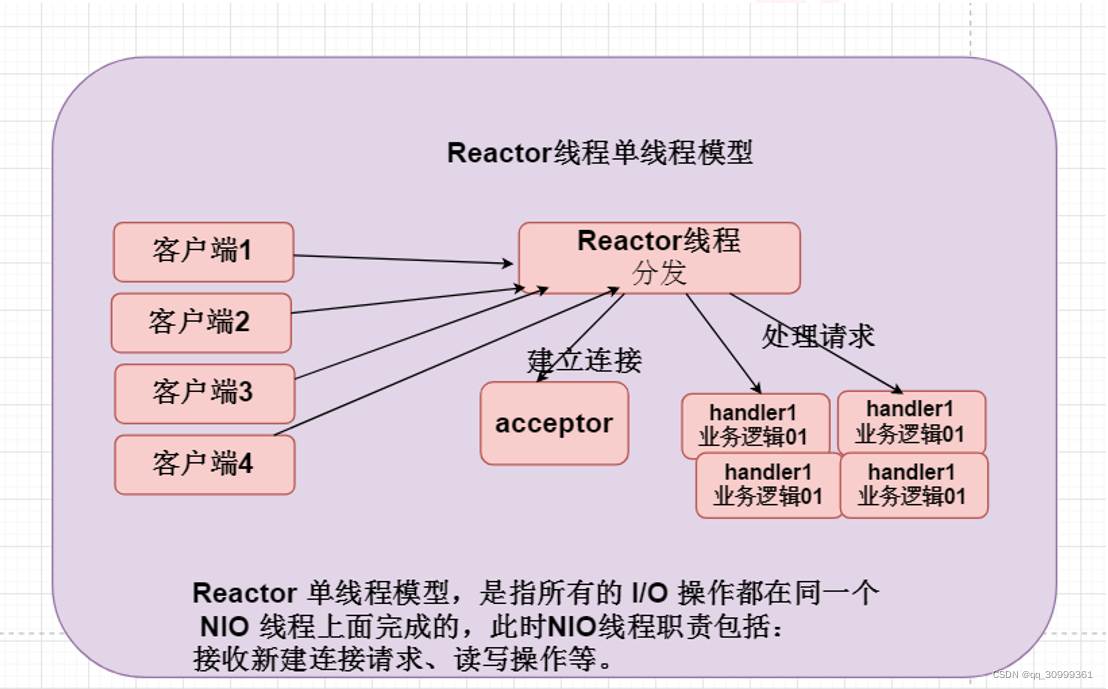

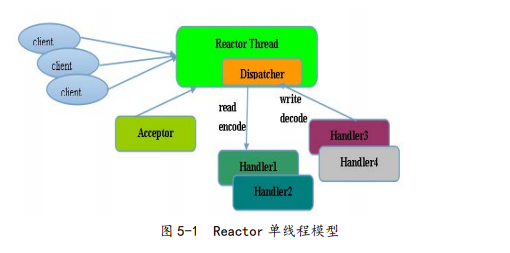

5.1.1 Reactor single-threaded model

In the Reactor single-threaded model, all I/O operations are completed on the same NIO thread. The responsibilities of the NIO thread are as follows.

• As a NIO server, accept TCP connections from clients;

• As a NIO client, initiate TCP connections to servers;

• Read request or response messages from the communication peer;

• Send request or response messages to the communication peer.

The Reactor single-threaded model is shown in Figure 5-1.

Because the Reactor pattern uses asynchronous non-blocking I/O, no I/O operation blocks, so in theory a single thread can handle all I/O-related operations on its own. From an architectural perspective, one NIO thread can indeed fulfill these responsibilities. For example, the Acceptor class receives the client's TCP connection request; once the link is established, Dispatch hands the corresponding ByteBuffer to the designated Handler for message decoding. After the user thread encodes a message, the NIO thread sends it to the client.
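The flow described above can be sketched with plain JDK NIO. This is a minimal illustration, not Netty's implementation: the class name `SingleThreadReactor`, the echo behaviour, and the 1024-byte buffer are assumptions made for the example. A single thread runs the selector loop and plays both the Acceptor and the Handler role.

```java
import java.io.IOException;
import java.net.InetAddress;
import java.net.InetSocketAddress;
import java.nio.ByteBuffer;
import java.nio.channels.ClosedSelectorException;
import java.nio.channels.SelectionKey;
import java.nio.channels.Selector;
import java.nio.channels.ServerSocketChannel;
import java.nio.channels.SocketChannel;
import java.util.Iterator;

/** Single-threaded Reactor sketch: one thread accepts, reads, and writes. */
final class SingleThreadReactor implements Runnable, AutoCloseable {
    private final Selector selector;
    private final ServerSocketChannel server;

    SingleThreadReactor(int port) throws IOException {
        selector = Selector.open();
        server = ServerSocketChannel.open();
        server.configureBlocking(false);
        // Port 0 asks the OS for any free port.
        server.bind(new InetSocketAddress(InetAddress.getLoopbackAddress(), port));
        server.register(selector, SelectionKey.OP_ACCEPT);
    }

    int port() {
        return server.socket().getLocalPort();
    }

    @Override
    public void run() {
        try {
            while (selector.isOpen()) {
                selector.select(); // block until at least one channel is ready
                Iterator<SelectionKey> keys = selector.selectedKeys().iterator();
                while (keys.hasNext()) {
                    SelectionKey key = keys.next();
                    keys.remove();
                    if (!key.isValid()) continue;
                    if (key.isAcceptable()) accept();      // Acceptor role
                    else if (key.isReadable()) echo(key);  // Handler role
                }
            }
        } catch (IOException | ClosedSelectorException e) {
            // The selector was closed or failed, so the loop ends.
        }
    }

    private void accept() throws IOException {
        SocketChannel ch = server.accept();
        if (ch == null) return;
        ch.configureBlocking(false);
        ch.register(selector, SelectionKey.OP_READ, ByteBuffer.allocate(1024));
    }

    private void echo(SelectionKey key) {
        SocketChannel ch = (SocketChannel) key.channel();
        ByteBuffer buf = (ByteBuffer) key.attachment();
        try {
            if (ch.read(buf) < 0) { ch.close(); return; } // peer closed the link
            buf.flip();
            // Simplification: spin until written instead of registering OP_WRITE.
            while (buf.hasRemaining()) ch.write(buf);
            buf.clear();
        } catch (IOException e) {
            try { ch.close(); } catch (IOException ignored) { }
        }
    }

    @Override
    public void close() throws IOException {
        selector.close();
        server.close();
    }
}
```

Because every accept, read, and write happens inside `run()`, any slow step delays all other links, which is the weakness of this model.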

The single-threaded model can be used in some low-volume scenarios, but it is not suitable for high-load, high-concurrency applications. The main reasons are as follows:

• One NIO thread cannot sustain hundreds of thousands of links at the same time. Even at 100% CPU load, it cannot keep up with encoding, decoding, reading, and sending a massive volume of messages.

• Once the NIO thread is overloaded, processing slows down and a large number of client connections time out. Timeouts usually trigger retransmissions, which add further load to the NIO thread. The result is message backlogs and processing timeouts, and the NIO thread becomes the performance bottleneck of the system.

• Reliability: if the NIO thread dies unexpectedly or enters an infinite loop, the communication module of the entire system becomes unavailable and can no longer receive or process external messages, causing node failure.

The Reactor multi-threaded model evolved to solve these problems. Let us look at it next.

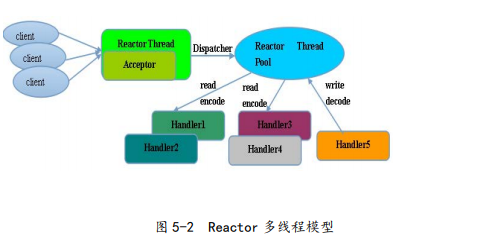

5.1.2 Reactor multi-threaded model

The biggest difference between the Reactor multi-threaded model and the single-threaded model is that a set of NIO threads handles I/O operations. Its principle is shown in Figure 5-2.

The features of the Reactor multi-threaded model are as follows.

• A dedicated NIO thread, the Acceptor thread, listens on the server port and accepts TCP connection requests from clients.

• Network I/O operations such as reading and writing are handled by a NIO thread pool. The pool can be implemented with a standard JDK thread pool that contains a task queue and N available threads. These NIO threads are responsible for reading, decoding, encoding, and sending messages.

• One NIO thread can handle N links at the same time, but each link is bound to exactly one NIO thread, which prevents concurrent-access problems.
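The rule that one thread serves many links while each link stays on one thread can be sketched with JDK executors. This is a simplified model with no real sockets. The class `PinnedIoPool`, its method names, and the round-robin assignment are assumptions for illustration.

```java
import java.util.Map;
import java.util.concurrent.Callable;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.atomic.AtomicInteger;

/** Sketch of an I/O pool in which every link is pinned to exactly one thread. */
final class PinnedIoPool {
    private final ExecutorService[] workers;
    private final AtomicInteger next = new AtomicInteger();
    private final Map<Integer, ExecutorService> ownerByLink = new ConcurrentHashMap<>();

    PinnedIoPool(int threads) {
        workers = new ExecutorService[threads];
        for (int i = 0; i < threads; i++) {
            String name = "nio-" + i;
            // One single-threaded executor per NIO thread keeps its events serialized.
            workers[i] = Executors.newSingleThreadExecutor(r -> {
                Thread t = new Thread(r, name);
                t.setDaemon(true);
                return t;
            });
        }
    }

    /** Called once per accepted link (by the Acceptor thread in the real model). */
    void register(int linkId) {
        ownerByLink.computeIfAbsent(linkId,
                id -> workers[Math.floorMod(next.getAndIncrement(), workers.length)]);
    }

    /** Runs an I/O event for a link on the thread that owns the link. */
    <T> Future<T> onIoEvent(int linkId, Callable<T> handler) {
        ExecutorService owner = ownerByLink.get(linkId);
        if (owner == null) throw new IllegalStateException("link not registered: " + linkId);
        return owner.submit(handler);
    }
}
```

With two threads and links 1, 2, and 3 registered in that order, links 1 and 3 share `nio-0` and link 2 runs on `nio-1`. Events for a given link never run on two threads at once.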

In most scenarios, the Reactor multi-threaded model meets performance requirements. In a few special scenarios, however, having a single NIO thread listen for and handle all client connections can become a performance problem. Examples are millions of concurrent client connections, or a server that must authenticate each client handshake when the authentication itself is expensive. In such cases a single Acceptor thread may not be enough. To solve this, a third Reactor threading model emerged: the master-slave Reactor multi-threaded model.

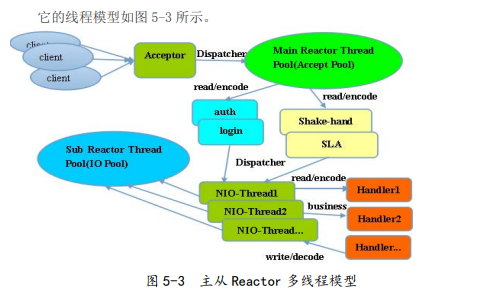

5.1.3 Master-slave Reactor threading model

The defining feature of the master-slave Reactor threading model is that the server no longer accepts client connections on a single NIO thread but on an independent NIO thread pool. After the Acceptor receives a client's TCP connection request and finishes processing it (which may include access authentication), it registers the newly created SocketChannel with an I/O thread in the I/O thread pool (the sub-Reactor thread pool). That I/O thread is then responsible for reading, writing, encoding, and decoding on the SocketChannel. The Acceptor thread pool is used only for client login, handshake, and security authentication. Once the link is established, it is registered with an I/O thread of the back-end sub-Reactor thread pool, and that I/O thread handles all subsequent I/O operations.

The master-slave NIO thread model solves the problem of a single server listening thread being unable to handle all client connections efficiently. For this reason, it is the threading model recommended in Netty's official demos.

5.2 Netty thread model

Netty's threading model is not fixed; it depends on the user's startup parameter configuration. By setting different startup parameters, Netty can support the Reactor single-threaded model, the multi-threaded model, and the master-slave Reactor multi-threaded model.

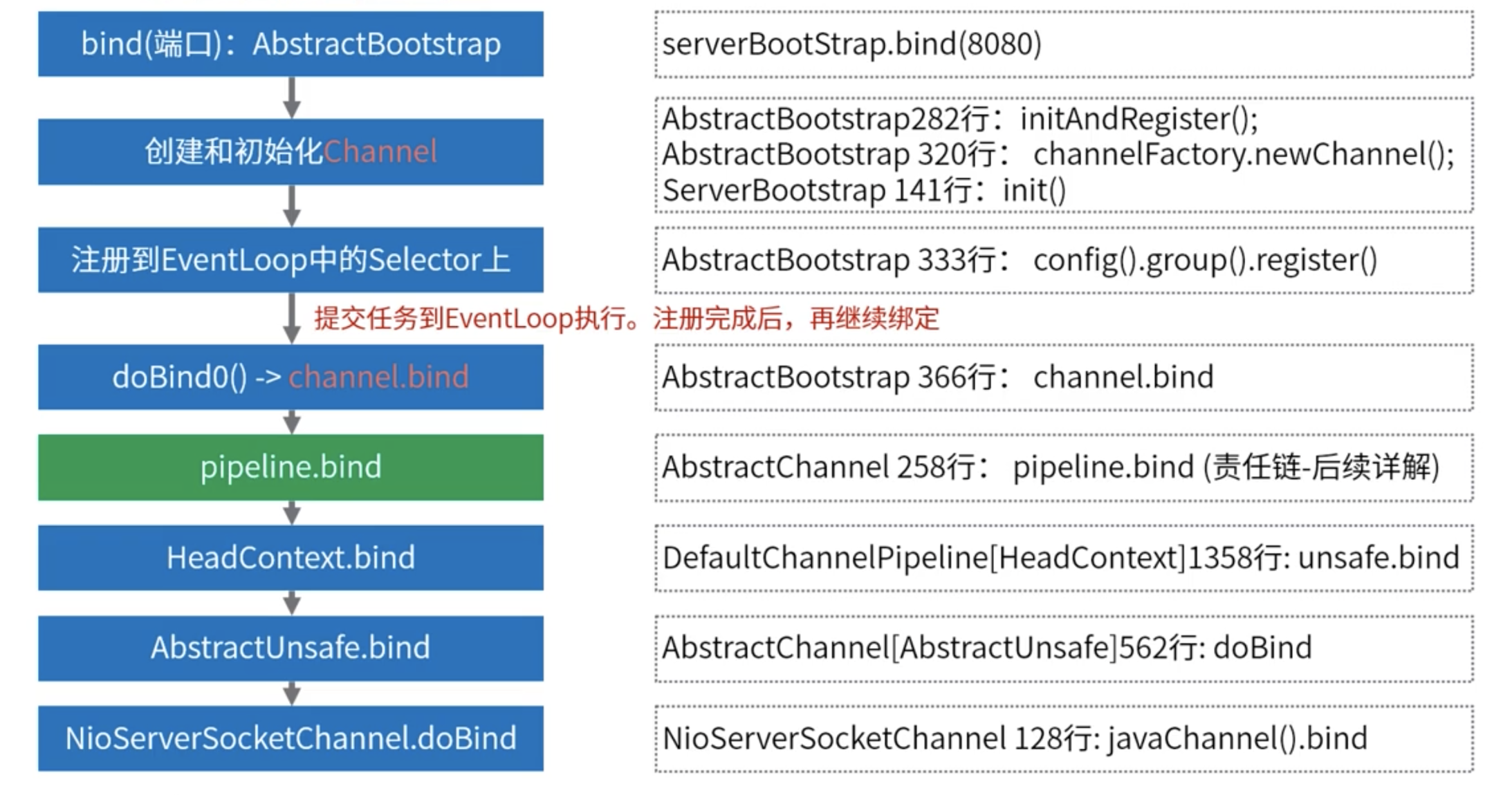

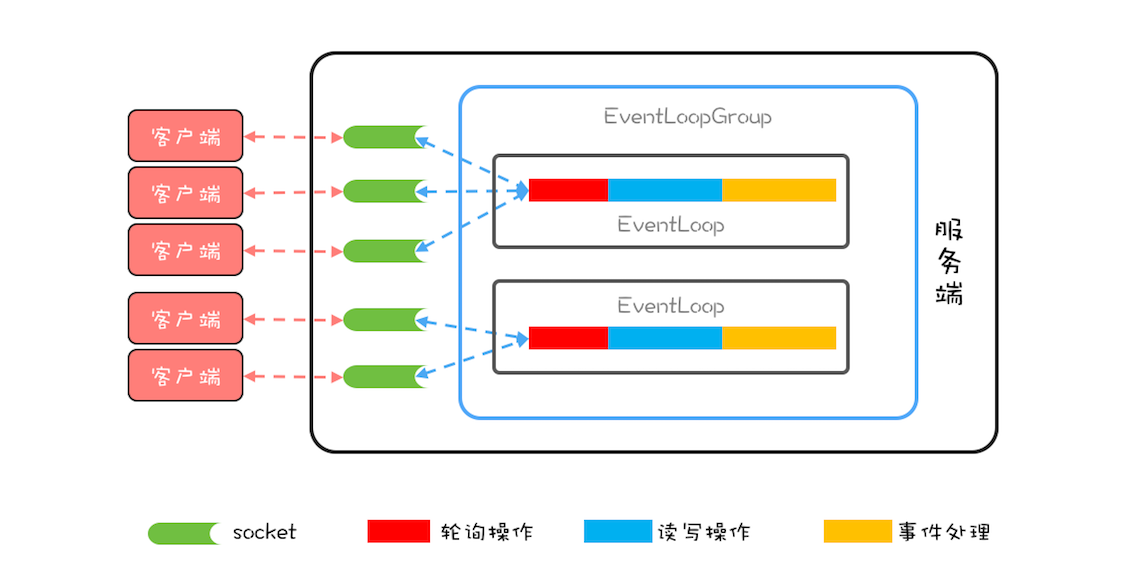

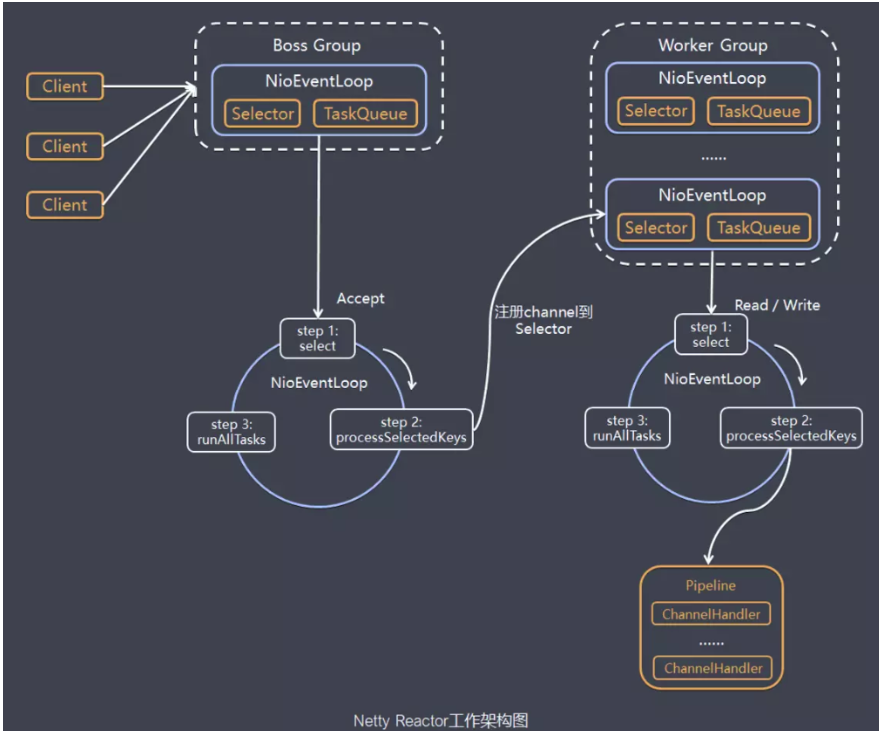

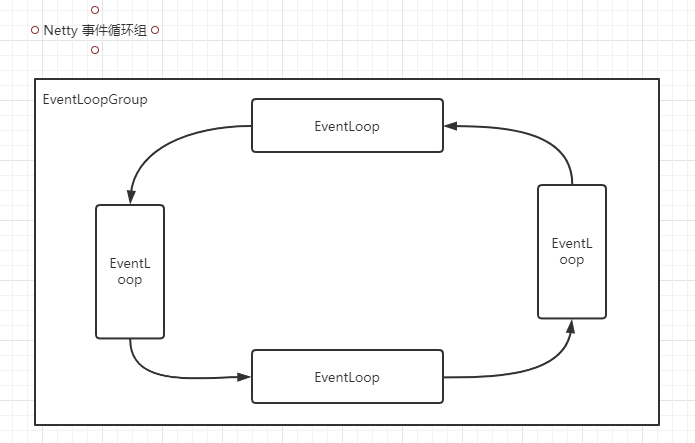

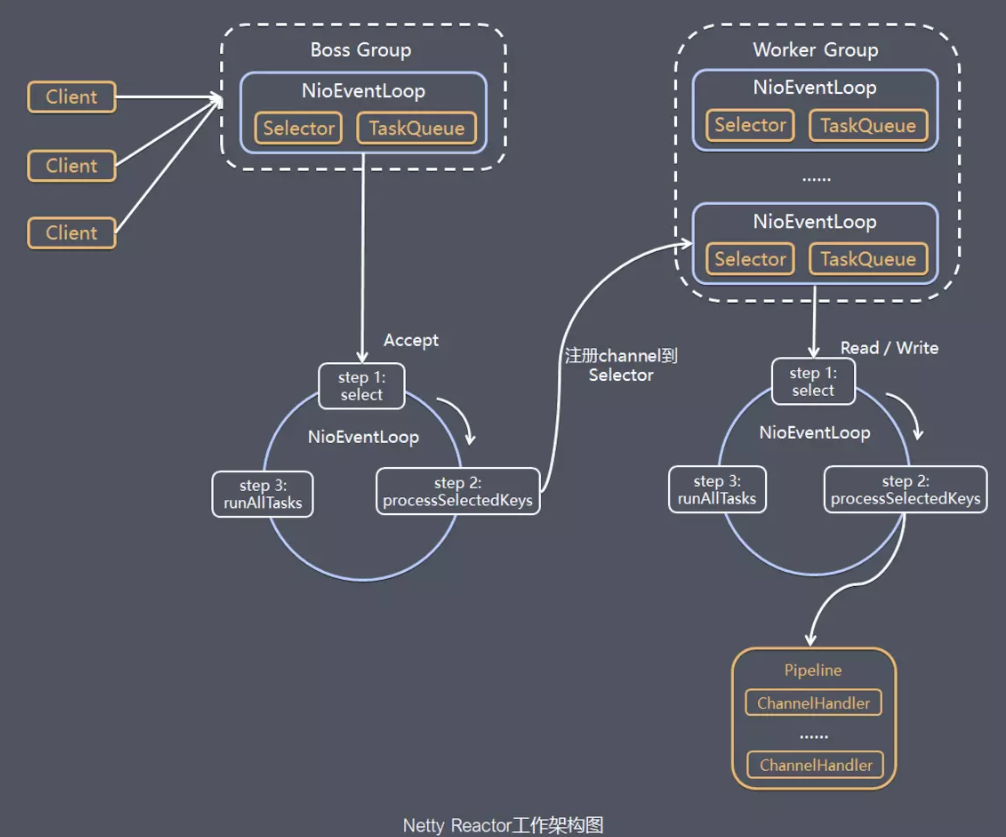

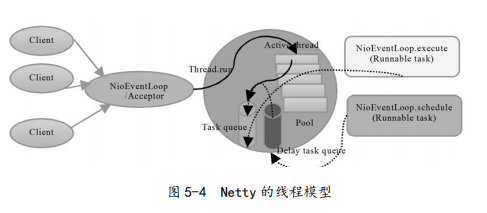

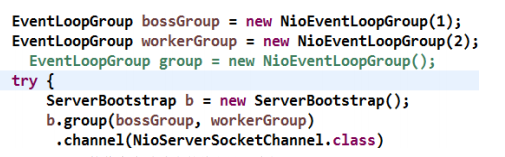

Let us get a quick picture of Netty's threading model from a schematic diagram (Figure 5-4). The threading model is selected through the Netty server's startup parameters. When the server starts, two NioEventLoopGroups are created; these are in fact two independent Reactor thread pools. One accepts TCP connections from clients. The other handles I/O reads and writes and executes system tasks, scheduled tasks, and so on.

The responsibilities of the thread pool Netty uses to accept client requests are as follows.

(1) Accept client TCP connections and initialize Channel parameters;

(2) Notify the ChannelPipeline of link state change events.

The responsibilities of the Reactor thread pool Netty uses to handle I/O operations are as follows.

(1) Asynchronously read datagrams from the communication peer and fire the read event to the ChannelPipeline;

(2) Asynchronously send messages to the communication peer by calling the message-sending interface of the ChannelPipeline;

(3) Execute Tasks invoked by the system;

(4) Execute scheduled tasks, such as the idle-link detection task.

By adjusting the number of threads in each pool, whether the pools are shared, and so on, Netty's Reactor threading model can be switched among single-threaded, multi-threaded, and master-slave multi-threaded. This flexible configuration meets the customization needs of different users as far as possible.
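One way the three configurations might look with `ServerBootstrap` is sketched below. It assumes Netty 4.1 on the classpath. The class name `ThreadModelDemo`, port 8080, the thread counts, and the `LoggingHandler` placeholder are choices made for this example rather than values from the text.

```java
import io.netty.bootstrap.ServerBootstrap;
import io.netty.channel.ChannelInitializer;
import io.netty.channel.EventLoopGroup;
import io.netty.channel.nio.NioEventLoopGroup;
import io.netty.channel.socket.SocketChannel;
import io.netty.channel.socket.nio.NioServerSocketChannel;
import io.netty.handler.logging.LoggingHandler;

final class ThreadModelDemo {
    public static void main(String[] args) throws InterruptedException {
        String mode = args.length > 0 ? args[0] : "master-slave";
        EventLoopGroup boss;
        EventLoopGroup worker;
        switch (mode) {
            case "single":
                // Single-threaded: one shared group with one thread accepts and does I/O.
                boss = worker = new NioEventLoopGroup(1);
                break;
            case "multi":
                // Multi-threaded: one acceptor thread plus a pool of I/O threads
                // (the no-arg constructor uses Netty's default thread count).
                boss = new NioEventLoopGroup(1);
                worker = new NioEventLoopGroup();
                break;
            default:
                // Master-slave: an acceptor pool plus an I/O pool (2 is an arbitrary size).
                boss = new NioEventLoopGroup(2);
                worker = new NioEventLoopGroup();
        }
        try {
            ServerBootstrap bootstrap = new ServerBootstrap()
                    .group(boss, worker)
                    .channel(NioServerSocketChannel.class)
                    .childHandler(new ChannelInitializer<SocketChannel>() {
                        @Override
                        protected void initChannel(SocketChannel ch) {
                            // Placeholder handler; a real server adds codecs and business handlers.
                            ch.pipeline().addLast(new LoggingHandler());
                        }
                    });
            // Bind, then block until the server channel is closed.
            bootstrap.bind(8080).sync().channel().closeFuture().sync();
        } finally {
            boss.shutdownGracefully();
            worker.shutdownGracefully();
        }
    }
}
```

Only the group sizes and whether the two groups are the same object change between modes; the rest of the bootstrap is identical. As general Netty behaviour rather than a claim from the text above, each bound port is served by one boss event loop, so extra boss threads mainly help when several ports are bound.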

To maximize performance, Netty uses lock-free designs in many places. One example is serial execution inside the I/O thread, which avoids the performance loss caused by contention between threads. On the surface, a serialized design seems to make poor use of the CPU and to lack concurrency. However, by tuning the thread parameters of the NIO thread pool, several serialized threads can run in parallel at the same time. This locally lock-free, serialized thread design performs better than a model with one queue and multiple worker threads.

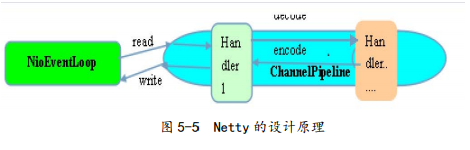

Its design principle is shown in Figure 5-5:

After Netty's NioEventLoop reads a message, it directly calls the ChannelPipeline's fireChannelRead(Object msg). As long as the user does not switch threads explicitly, the NioEventLoop keeps invoking the user's Handler with no thread switch in between. This serialized processing avoids the lock contention caused by multi-threaded operation and is optimal from a performance perspective.
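The idea of several serialized threads running side by side without locks can be sketched with JDK executors. This is a simplified model, not Netty code. The names `SerialLoops`, `Loop`, and `process` are assumptions for illustration. Each loop owns a plain, unsynchronized counter that only its own thread ever touches.

```java
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

/** Sketch: several serialized loops run in parallel, each with lock-free private state. */
final class SerialLoops {
    /** State owned by exactly one loop thread; deliberately not synchronized. */
    static final class Loop {
        final ExecutorService thread = Executors.newSingleThreadExecutor();
        long handled; // only ever touched from this loop's own thread
    }

    /** Spreads events over the loops and returns how many were handled in total. */
    static long process(int loops, int events) throws InterruptedException {
        Loop[] group = new Loop[loops];
        for (int i = 0; i < loops; i++) group[i] = new Loop();

        for (int e = 0; e < events; e++) {
            Loop loop = group[e % loops];
            // The whole "handler chain" for this event runs on one thread, in order.
            loop.thread.execute(() -> loop.handled++);
        }

        long total = 0;
        for (Loop loop : group) {
            loop.thread.shutdown();
            if (!loop.thread.awaitTermination(10, TimeUnit.SECONDS)) {
                throw new IllegalStateException("loop did not drain in time");
            }
            total += loop.handled; // safe to read once the loop thread has terminated
        }
        return total;
    }
}
```

No increment is lost even though `handled` is neither atomic nor guarded by a lock, because each counter has a single writer. Parallelism comes from running several such loops, not from sharing one queue among many workers.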

5.3 Best practices

5.3.1 Handle simple, time-bounded business logic directly on the I/O thread

If the business logic is simple and short-running, does not interact with external network elements, does not access a database or disk, and does not wait on other resources, execute it directly in the business ChannelHandler. There is no need to start a business thread or thread pool. This avoids thread context switches and removes thread concurrency problems.

5.3.2 Hand complex, time-unbounded business logic to a back-end business thread pool

For this kind of business logic, starting threads or thread pools directly inside the business ChannelHandler is not recommended. Instead, wrap each unit of work in a Task and submit it to a shared back-end business thread pool. Too many business ChannelHandlers hurt development efficiency and maintainability. Do not treat Netty as a business container: most complex products still need to integrate or build their own business container and layer it cleanly on top of Netty.
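A minimal sketch of this hand-off, using only JDK classes, is shown below. The class `BusinessOffload`, the pool size of 4, the thread names, and the `slowBusinessLogic` stand-in are hypothetical. In a real Netty application the I/O side would be the channel's event loop rather than a plain executor.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.ThreadFactory;

/** Sketch: the I/O thread hands slow work to a business pool and gets the reply back. */
final class BusinessOffload {
    private final ExecutorService ioLoop = Executors.newSingleThreadExecutor(named("io-loop"));
    private final ExecutorService businessPool = Executors.newFixedThreadPool(4, named("biz"));

    private static ThreadFactory named(String name) {
        return r -> {
            Thread t = new Thread(r, name);
            t.setDaemon(true);
            return t;
        };
    }

    /** Stand-in for a slow call such as a database or remote service (hypothetical). */
    private static String slowBusinessLogic(String request) {
        return "reply:" + request + "@" + Thread.currentThread().getName();
    }

    /** Called for each decoded request; completes once the reply has been "written". */
    CompletableFuture<String> onRequest(String request) {
        return CompletableFuture
                // The Task runs on the business pool, so the I/O thread is never blocked.
                .supplyAsync(() -> slowBusinessLogic(request), businessPool)
                // The write is handed back to the I/O thread.
                .thenApplyAsync(reply -> reply + " written-by "
                        + Thread.currentThread().getName(), ioLoop);
    }
}
```

Calling `onRequest("ping")` yields `reply:ping@biz written-by io-loop`: the business step runs on a pool thread, and the final write step runs on the single I/O thread.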

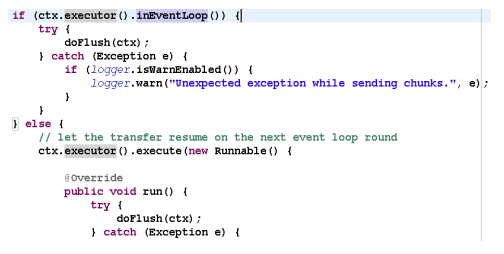

5.3.3 Business threads should avoid operating on ChannelHandlers directly

A ChannelHandler may be accessed by both the I/O thread and business threads. Because business logic usually runs on multiple threads, several threads may end up operating on the same ChannelHandler. To limit concurrency problems, follow Netty's own practice: wrap the operation in an independent Task and let the NioEventLoop execute it, rather than operating on the handler directly from a business thread.
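The pattern can be sketched in plain Java as follows. This is an illustrative stand-in, not Netty's source: `MiniEventLoop` and its methods are hypothetical names. In Netty itself the corresponding calls are `EventExecutor.inEventLoop()` and `execute(Runnable)`, reachable through `ctx.executor()`.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

/** Sketch of the "run inline on the loop thread, otherwise enqueue a Task" pattern. */
final class MiniEventLoop {
    private volatile Thread loopThread;
    private final ExecutorService executor = Executors.newSingleThreadExecutor(r -> {
        Thread t = new Thread(r, "event-loop");
        t.setDaemon(true);
        loopThread = t;
        return t;
    });
    // Handler state: only ever touched by the loop thread, so no lock is needed.
    private final List<String> outbound = new ArrayList<>();

    boolean inEventLoop() {
        return Thread.currentThread() == loopThread;
    }

    void execute(Runnable task) {
        executor.execute(task);
    }

    /** Safe to call from any thread. */
    void write(String msg) {
        if (inEventLoop()) {
            outbound.add(msg);                 // already on the loop thread: run inline
        } else {
            execute(() -> outbound.add(msg));  // business thread: wrap in a Task
        }
    }

    /** Copies the state on the loop thread so the caller never reads it directly. */
    List<String> snapshot() throws Exception {
        Callable<List<String>> copy = () -> new ArrayList<>(outbound);
        return executor.submit(copy).get();
    }
}
```

A business thread that calls `write("a")` only enqueues a Task, while code already running on the loop thread performs the write inline. Either way, `outbound` is modified by a single thread.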

If you have confirmed that the concurrently accessed data and concurrent operations are safe, nothing more is needed. Judge this case by case according to the business scenario.

5.3.4 Calculation of the number of threads

There are two recommended calculation formulas for the number of threads.

• Formula 1: number of threads = (total thread time / bottleneck resource time) × parallelism of the bottleneck resource;

• Formula 2: QPS = 1000 / total thread time × number of threads.

Because user scenarios differ, the optimal thread configuration for a complex system is hard to calculate in practice. The usual approach is to use test data, the user scenario, and the formulas to derive a reasonable range, then run performance tests over that range and pick the best value.
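As a worked example, the two formulas can be written as small Java helpers. The class and method names are hypothetical. Treating "total thread time" as milliseconds per request is an assumption suggested by the factor 1000, and rounding Formula 1 up to a whole thread is also an assumption.

```java
/** Sketch of the two sizing formulas; names, units, and rounding are assumptions. */
final class ThreadSizing {
    /** Formula 1: threads = (total thread time / bottleneck time) x bottleneck parallelism. */
    static int threadsForBottleneck(double totalMillis, double bottleneckMillis,
                                    int bottleneckParallelism) {
        // Rounded up so that a fractional result still gets a whole thread.
        return (int) Math.ceil(totalMillis / bottleneckMillis * bottleneckParallelism);
    }

    /** Formula 2: QPS = 1000 / total thread time (ms) x number of threads. */
    static double qps(double totalMillis, int threads) {
        return 1000.0 / totalMillis * threads;
    }
}
```

With made-up numbers, a request that occupies a thread for 50 ms, of which 10 ms is spent on a bottleneck resource that serves 4 callers in parallel, gives `threadsForBottleneck(50, 10, 4)` = 20 threads and `qps(50, 20)` = 400. Such results are only a starting range to confirm with performance tests.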

NOTE: This article is based on "in layman's language Netty" by Li Linfeng.

Intelligent Recommendation

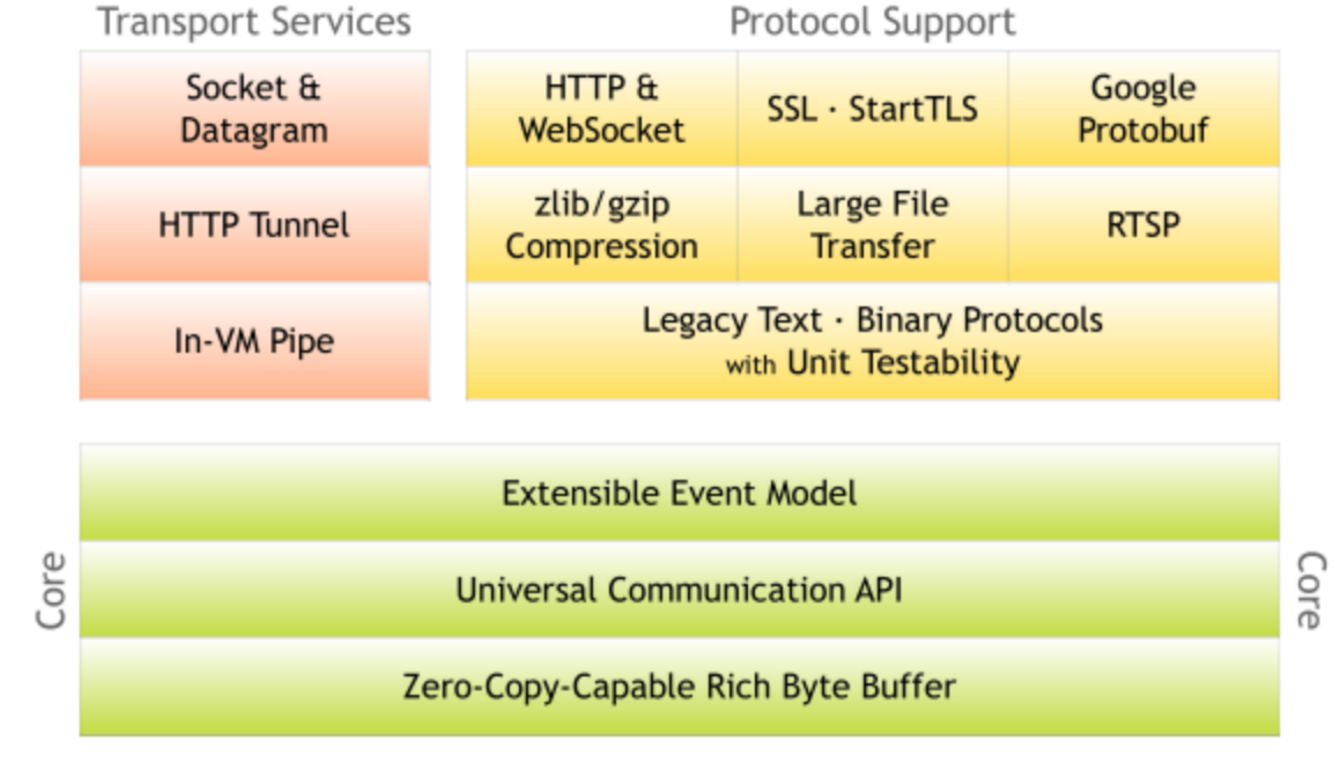

2.2.1 Netty thread model

Introduction to Netty Netty is a high-performance, highly scalable asynchronous event-driven network application framework, which greatly simplifies network programming such as TCP and UDP client and ...

Thread model in Netty

The core concept in Netty isEvent loop (EventLoop), Which is actually Reactor in Reactor mode,Responsible for monitoring network events and calling event handlers for processing.In the 4.x version of ...

Netty features and thread model

Zero copy hard driver - kernel buffer - protocol engine only DMA copy avoids cpu copy There was actually a cpu copy of kernel buffer - socket buffer, but the copied information can rarely be ignored; ...

013. NETTY thread model

Introduction to Netty Netty is a high-performance, high-scalable asynchronous event-driven network application framework, which greatly simplifies network programming such as TCP and UDP clients and s...

Netty thread model and basics

Why use Netty Netty is an asynchronous event-driven web application framework for rapid development of maintainable high-performance and high-profile servers and clients. Netty has the advantages of h...

More Recommendation

Netty thread model and gameplay

Event cycle group All I / O operations in Netty are asynchronous, and the asynchronous execution results are obtained by channelfuture. Asynchronously executes a thread pool EventLoopGroup, it ...

Netty - Thread Model Reactor

table of Contents Thread model 1, traditional IO service model 2, Reactor mode reactor Three modes: to sum up Netty model Excommissum Thread model 1, traditional IO service model Blocked IO mode Get i...

Netty thread model [next]

Hey everyone, I amJava small white 2021。 The programmer of the halfway is in the development of aircraft, and the opportunity to find a more interesting thing under the development of a surveying cour...

【Netty】 thread model

content 1. Single Reactor single thread 2. Single Reactor Multi -thread 3. Reactor Main Strike Model Single -threaded model (single Reactor single thread) Multi -threaded model (single Reactor multi -...

Netty entry thread model

Single-threaded model: the boss thread is responsible for connection and data reading and writing Hybrid model: the boss thread is responsible for connection and data reading and writing, and the work...