Keepalived + LVS combined with nginx: stress testing in practice

tags: LVS Keepalived stress test

Requirements

I recently built a Kafka middleware service. The requirements were simple; there was really only one: sustain a throughput of 50,000 QPS (written "5W" in the original notation, where W = 10,000).

Attempts

- The interface itself is simple: given a topic, key, and value, it sends one record to Kafka. The workflow was: write the code, unit test, deploy, then stress test a single node. I ran the first test from my local machine with JMeter, the tool I knew best.

- Problem 1: No matter how many threads I used, throughput stayed at around 2,000 QPS, far below what I expected. Was that really all an interface that sends to Kafka asynchronously could do? That seemed impossible. Or was the bottleneck a misconfigured Kafka producer parameter?

- Problem 2: Once the total number of requests sent in the test reached about 50,000, the following exception appeared:

org.apache.kafka.common.errors.TimeoutException: Expiring 1 record(s) for t2-0: 30042 ms has passed since batch creation plus linger time

For the second problem, I increased request.timeout.ms from its default of 30 s to 60 s (see the Kafka configuration reference). After a lot of trial and error, it turned out that the real bottleneck was the network.

The connection from my local machine to the server went through a VPN with about 10 MB/s of bandwidth. Records piled up in the producer's send queue, and eventually some of them timed out. I suspected that Problem 1 had the same cause, so I moved the load generator onto a machine in the online (server-side) environment and replaced JMeter with the better-known ab (ApacheBench).
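As a rough sanity check on the bandwidth explanation, the sketch below estimates the throughput ceiling a link imposes and how long a sustained backlog takes to push a record past the producer timeout. The 5 KB per-request size and the 5,000 records/s offered load are hypothetical values chosen for illustration; only the 10 MB/s bandwidth and the 30 s and 60 s timeouts come from the text. The queue is modeled as a simple fluid backlog, not as Kafka's actual batching logic.

```python
def max_qps(bandwidth_bytes_per_s: float, bytes_per_request: float) -> float:
    """Upper bound on requests per second that fit through the link."""
    return bandwidth_bytes_per_s / bytes_per_request


def seconds_until_first_timeout(offered_qps: float, drained_qps: float,
                                timeout_s: float) -> float:
    """Time after which a newly queued record waits longer than timeout_s.

    Fluid model: the backlog grows by (offered - drained) records per second,
    and a record arriving at time t waits backlog(t) / drained seconds.
    """
    if offered_qps <= drained_qps:
        return float("inf")  # the queue never grows, so nothing expires
    return timeout_s * drained_qps / (offered_qps - drained_qps)


# ~10 MB/s VPN link (from the text); 5 KB on the wire per request (hypothetical).
ceiling = max_qps(10_000_000, 5_000)
print(ceiling)

# The load generator offers 5,000 records/s (hypothetical).
print(seconds_until_first_timeout(5_000, ceiling, 30))
print(seconds_until_first_timeout(5_000, ceiling, 60))
```

Under these assumptions, doubling the timeout only postpones the first expiry; as long as the link drains records more slowly than they are produced, the backlog keeps growing.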

- Problem 3: With a concurrency of 500, ab reached 10,000 QPS, which was encouraging, but raising the concurrency further made little difference. Above a concurrency of 1,000, ab reported an error:

apr_socket_recv: Connection reset by peer (104)
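The relationship between concurrency and throughput in the ab run can be checked with Little's law (requests in flight = throughput × mean latency). The sketch below is only a back-of-the-envelope calculation based on the 500-concurrency, 10,000 QPS figures above; the function names are illustrative.

```python
def mean_latency_ms(concurrency: int, qps: float) -> float:
    """Little's law: mean time in system = requests in flight / throughput."""
    return concurrency * 1000.0 / qps


def required_concurrency(target_qps: float, latency_ms: float) -> float:
    """Requests that must be in flight to sustain target_qps at a given latency."""
    return target_qps * latency_ms / 1000.0


latency = mean_latency_ms(500, 10_000)
print(latency)                                # mean latency per request, in ms
print(required_concurrency(50_000, latency))  # in-flight requests needed for 50,000 QPS
```

If latency stayed at that level, a 50,000 QPS target would need far more requests in flight than the concurrency at which ab began to see connection resets, which is why the kernel's connection-related limits are the next thing to look at.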

I then tuned the Linux kernel parameters as follows.

References: 1. Parameter meanings 2. Linux kernel tuning

kernel.panic=60

net.ipv4.ip_local_port_range=1024 65535

#net.ipv4.ip_local_reserved_ports=3306,4369,4444,4567,4568,5000,5001,5672,5900-6200,6789,6800-7100,8000,8004,8773-8777,8080,8090,9000,9191,9393,9292,9696,9898,15672,16509,25672,27017-27021,35357,49000,50000-59999

net.core.netdev_max_backlog=261144

net.ipv4.conf.default.arp_accept=1

net.ipv4.conf.all.arp_accept=1

net.ipv4.neigh.default.gc_thresh1=10240

net.ipv4.neigh.default.gc_thresh2=20480

net.ipv4.neigh.default.gc_thresh3=40960

net.ipv4.neigh.default.gc_stale_time=604800

net.ipv4.ip_forward=1

net.ipv4.conf.all.rp_filter=0

net.ipv4.conf.default.rp_filter=0

net.nf_conntrack_max=1048576

net.netfilter.nf_conntrack_tcp_timeout_established=900

net.ipv4.tcp_retries2=5

net.core.somaxconn=65535

net.core.rmem_max=16777216

net.core.wmem_max=16777216

net.ipv4.tcp_rmem=4096 87380 16777216

net.ipv4.tcp_wmem=4096 65536 16777216

net.ipv4.tcp_keepalive_intvl=3

net.ipv4.tcp_keepalive_time=20

net.ipv4.tcp_keepalive_probes=5

net.ipv4.tcp_fin_timeout=15

# Maximum number of queued connection requests (SYNs) that have not yet been acknowledged by the client

net.ipv4.tcp_max_syn_backlog=8192

# Enable TCP SYN cookies (the kernel must be compiled with CONFIG_SYN_COOKIES). SYN cookies keep a socket from being overloaded when too many connection attempts arrive.

net.ipv4.tcp_syncookies = 1

# Allow sockets in the TIME-WAIT state to be reused for new TCP connections.

net.ipv4.tcp_tw_reuse = 1

# Recycle TIME-WAIT sockets faster. Note: this option breaks clients behind NAT and was removed in Linux 4.12.

net.ipv4.tcp_tw_recycle = 1

net.ipv4.ip_nonlocal_bind=1

# max open file handle

fs.file-max=655350

vm.swappiness=0

net.unix.max_dgram_qlen=128
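After applying settings like these, it helps to confirm that the running kernel actually uses them. The sketch below parses sysctl.conf-style text and compares each key against its file under /proc/sys. It is a minimal helper written for illustration (the function names are made up), and it assumes keys map to paths by replacing dots with slashes, which does not hold for interface names that themselves contain dots.

```python
from pathlib import Path


def parse_sysctl(text: str) -> dict:
    """Parse sysctl.conf-style text into {key: value}, skipping comments and blanks."""
    settings = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith(("#", ";")) or "=" not in line:
            continue
        key, value = line.split("=", 1)
        # Normalize whitespace so "4096 87380" compares equal to "4096\t87380".
        settings[key.strip()] = " ".join(value.split())
    return settings


def proc_path(key: str) -> Path:
    """Map a sysctl key to its /proc/sys file (dots become path separators)."""
    return Path("/proc/sys") / key.replace(".", "/")


def mismatches(wanted: dict) -> dict:
    """Return {key: (wanted, actual)} for keys whose live value differs or is missing."""
    diff = {}
    for key, want in wanted.items():
        try:
            actual = " ".join(proc_path(key).read_text().split())
        except OSError:
            actual = None  # key absent on this kernel, or not readable
        if actual != want:
            diff[key] = (want, actual)
    return diff


sample = """
# max open file handle
fs.file-max=655350
net.ipv4.tcp_syncookies = 1
net.ipv4.tcp_rmem=4096 87380 16777216
"""
wanted = parse_sysctl(sample)
print(wanted)
print(mismatches(wanted))  # empty dict when the running kernel matches
```

A key that shows up with an actual value of None is one the running kernel does not expose, which is how a removed option would appear.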

- Problem 4: After kernel tuning, a single Kafka service node handled about 20,000 QPS on average and occasionally more than 30,000, which was getting close to the target. I started thinking about horizontal scaling and introduced nginx for load balancing. I applied a series of optimizations (see "Nginx application summary (2) – breakthrough high concurrency performance optimization"), but average throughput stayed at a little over 20,000 QPS with no significant growth. According to the books and articles I consulted, nginx here acts as a layer-7 load balancer, which is less efficient and tops out at a little over 40,000 QPS (see "The difference between four-layer and seven-layer load balancing"). nginx also supports layer-4 load balancing; look it up if you are interested. Because LVS is more mature and better documented, I chose LVS as the load balancer for now. (See "Keepalived configuration file parameter detailed".)

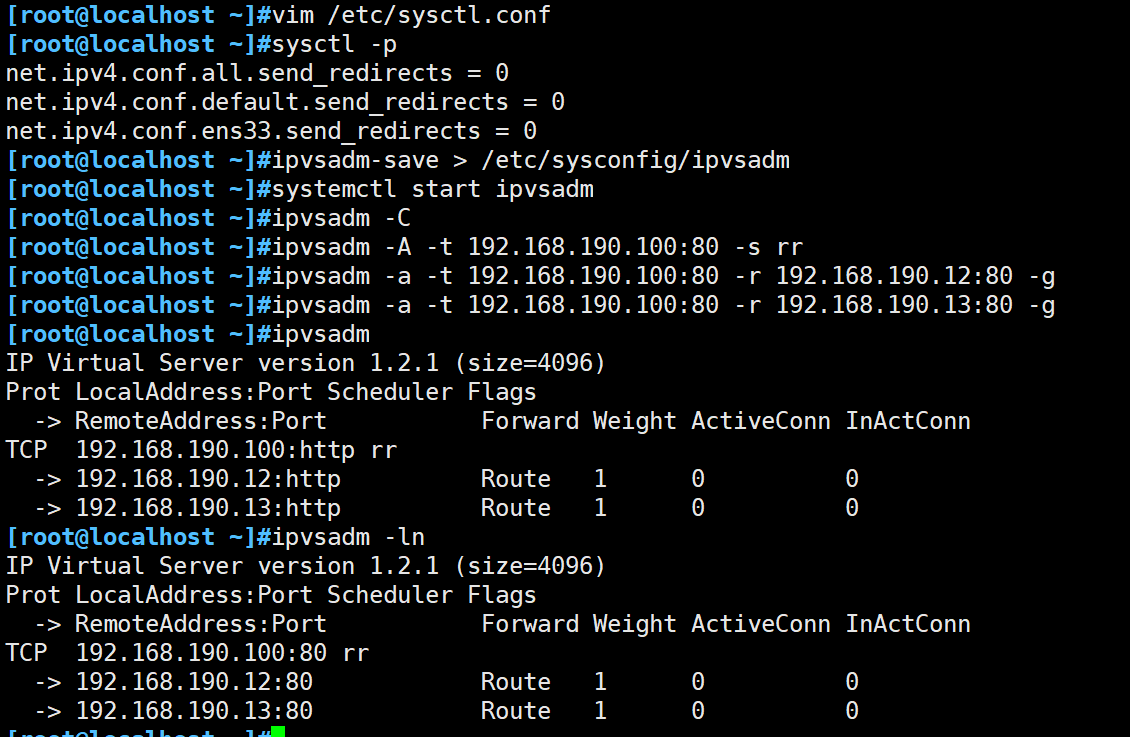

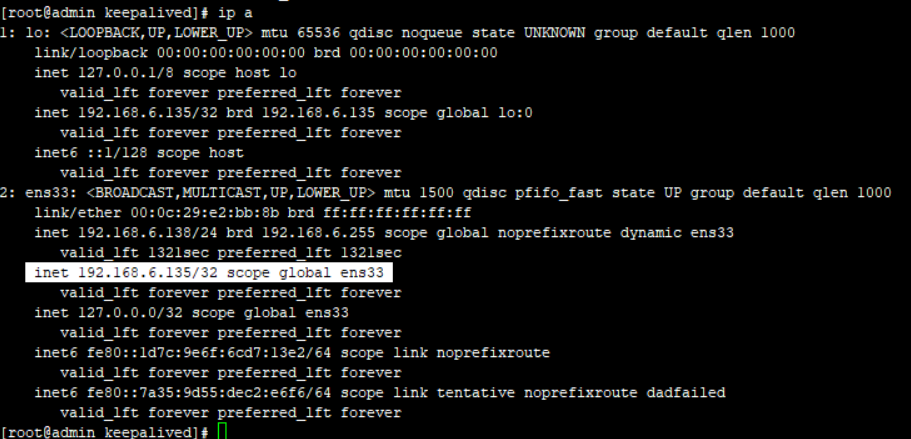

- Problem 5: Setting up LVS did not work at first. First I did not understand how it works, then I did not understand the keepalived configuration file. Eventually, with help from Baidu and colleagues, I got the LVS-DR model running.

References: "LVS installation and use detailed" and "keepalived configuration details".

keepalived + LVS configuration (other settings omitted):

virtual_server 192.168.17.176 86 {

delay_loop 1 # health check interval, in seconds

lb_algo rr # round-robin scheduling

lb_kind DR # direct routing (MAC rewrite) mode

persistence_timeout 0 # session persistence window for requests from the same IP, in seconds; 0 disables it

protocol TCP # TCP protocol

real_server 192.168.17.57 86 { # backend server definition

weight 1 # weight

inhibit_on_failure # when the health check fails, set the weight to 0 instead of removing the server from the virtual server

HTTP_GET {

url {

path /kafka/check # URL path to check, e.g. path / or path /mrtg2

#digest <STRING> # digest of the response, computed with: genhash -s 172.17.100.1 -p 80 -u /index.html

status_code 200 # expected status code

}

connect_timeout 3 # check timeout, in seconds

nb_get_retry 3 # number of retries

delay_before_retry 3 # delay between retries, in seconds

}

}

real_server 192.168.17.64 86 { # backend server definition

weight 1 # weight

inhibit_on_failure

HTTP_GET {

url {

path /kafka/check

status_code 200

}

connect_timeout 3 # check timeout, in seconds

nb_get_retry 3 # number of retries

delay_before_retry 3 # delay between retries, in seconds

}

}

real_server 192.168.17.65 86 { # backend server definition

weight 1 # weight

inhibit_on_failure

HTTP_GET {

url {

path /kafka/check

status_code 200

}

connect_timeout 3 # check timeout, in seconds

nb_get_retry 3 # number of retries

delay_before_retry 3 # delay between retries, in seconds

}

}

}
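To make the scheduling settings concrete, here is a small model of what round-robin scheduling (rr) combined with inhibit_on_failure amounts to: requests rotate across the real servers, and a server whose health check fails stays in the table with weight 0, so it receives no new connections. This is an illustrative simulation only, not IPVS or keepalived code; the class and method names are made up.

```python
class RoundRobinPool:
    """Toy model of round-robin scheduling over weighted real servers."""

    def __init__(self, servers):
        self.weights = {s: 1 for s in servers}  # weight 1 for every server
        self.order = list(servers)
        self.pos = -1

    def mark_failed(self, server):
        # inhibit_on_failure: keep the entry but set its weight to 0.
        self.weights[server] = 0

    def mark_healthy(self, server):
        self.weights[server] = 1

    def pick(self):
        """Return the next server with weight > 0, or None if all are down."""
        for _ in range(len(self.order)):
            self.pos = (self.pos + 1) % len(self.order)
            candidate = self.order[self.pos]
            if self.weights[candidate] > 0:
                return candidate
        return None


pool = RoundRobinPool(["192.168.17.57", "192.168.17.64", "192.168.17.65"])
print([pool.pick() for _ in range(4)])

pool.mark_failed("192.168.17.64")  # e.g. the check on /kafka/check failed
print([pool.pick() for _ in range(4)])
```

Keeping a failed server in the table with weight 0 means it can be brought back simply by restoring its weight once the health check passes again.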

Commands to run on each real server:

# ip addr add 192.168.17.176/32 dev lo

# echo 1 > /proc/sys/net/ipv4/conf/lo/arp_ignore

# echo 2 > /proc/sys/net/ipv4/conf/lo/arp_announce

# echo 1 > /proc/sys/net/ipv4/conf/all/arp_ignore

# echo 2 > /proc/sys/net/ipv4/conf/all/arp_announce

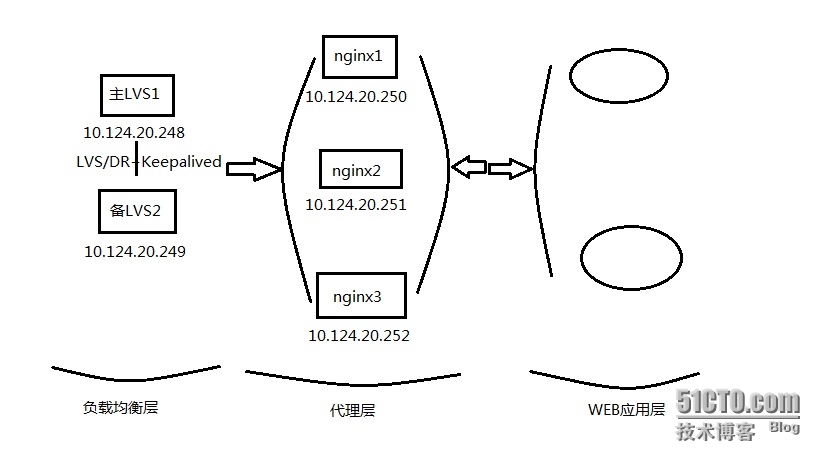
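The reason each real server needs the VIP on lo can be seen in a small model of DR forwarding: the director changes only the destination MAC address of the frame and leaves the IP address and port untouched, so the real server must accept packets addressed to the VIP itself. The sketch below is a conceptual illustration; the MAC addresses, port 8086, and all names are made up, and it does not reflect the kernel's actual data structures.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Frame:
    dst_mac: str
    dst_ip: str
    dst_port: int


VIP = "192.168.17.176"


def director_forward(frame: Frame, real_server_mac: str) -> Frame:
    """LVS-DR: rewrite only the destination MAC; IP and port stay as sent."""
    return replace(frame, dst_mac=real_server_mac)


def real_server_accepts(frame: Frame, local_ips: set, listen_port: int) -> bool:
    """In this model a host accepts a packet only if it owns the destination IP
    and has a service listening on the destination port."""
    return frame.dst_ip in local_ips and frame.dst_port == listen_port


incoming = Frame(dst_mac="aa:aa:aa:aa:aa:01", dst_ip=VIP, dst_port=86)  # hypothetical MACs
forwarded = director_forward(incoming, "aa:aa:aa:aa:aa:57")

# Without the VIP on lo, the model's real server does not accept the packet.
print(real_server_accepts(forwarded, {"192.168.17.57"}, 86))
# With the VIP added to lo, it does.
print(real_server_accepts(forwarded, {"192.168.17.57", VIP}, 86))
# A service on a different port never sees it: the port is not rewritten.
print(real_server_accepts(forwarded, {"192.168.17.57", VIP}, 8086))
```

The arp_ignore and arp_announce settings complement this: the real servers hold the VIP but must not answer ARP for it, so that clients keep sending traffic to the director.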

- Problem 6: LVS at layer 4 only forwards by IP or rewrites the destination MAC address (as in the DR mode used here), so all Kafka service nodes must use the same port number. The company was short on resources and only a few servers were available, so I added nginx as another proxy layer behind LVS, which led to the architecture described in the deployment list below.

Test Results

Deployment mode:

- Tier 1: keepalived + LVS × 2, active/standby

- Tier 2: keepalived + nginx × 2, active/standby, plus 1 single-node nginx without keepalived

- Tier 3: server × 6 × 2: six machines, each running two server nodes; each nginx proxies 4 server nodes

- Stress test: three test machines ran ab simultaneously:

  - Short runs: total throughput peaked at 80,000 QPS

  - Long runs: each test machine sustained about 15,000 QPS, for a total of 45,000 QPS

- Failure test: results of failure testing on this architecture (20,000 requests in total, sent by a single request thread):

  - Stop keepalived on the tier 1 master: 0 failed requests

  - Stop keepalived on the tier 2 master: 0 failed requests

  - Stop nginx on the tier 2 master: 1,000-2,000 failed requests

  - Stop the tier 2 nginx that runs without keepalived: 1,000-2,000 failed requests

  - Stop one server node: 4-20 failed requests
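The figures above are easier to compare as ratios. The short calculation below derives the sustained total and the failure rates from the numbers reported in this section; it adds no new measurements, and the helper names are illustrative.

```python
def total_qps(per_machine_qps: float, machines: int) -> float:
    """Aggregate throughput across identical load generators."""
    return per_machine_qps * machines


def failure_rate(failed: int, total: int) -> float:
    """Fraction of requests that failed during a run."""
    return failed / total


sustained = total_qps(15_000, 3)
print(sustained, sustained / 50_000)  # total, and share of the 50,000 QPS target

for label, low, high in [("nginx down", 1_000, 2_000), ("server node down", 4, 20)]:
    print(label, f"{failure_rate(low, 20_000):.2%}", f"{failure_rate(high, 20_000):.2%}")
```

The sustained total is 90% of the 50,000 QPS target. Losing an nginx instance costs 5% to 10% of the requests in a run, while losing a single server node costs 0.02% to 0.1%.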

Conclusions and problems

Because other people were using the machines during the tests, I did not analyze resource bottlenecks for the final architecture; I will rerun the tests several times once resources are available. The main takeaway is that the results show throughput scaling out horizontally. (The literature says LVS can handle on the order of 100,000 QPS; I will take that on trust for now.)

Remaining problems: in the failure tests, a server node or an nginx instance going down still affects users. This remains to be solved. To be continued.

Intelligent Recommendation

lvs+nginx+keepalived

Basic environment preparation Two servers: 192.168.199.101 (main), 192.168.199.102 (from), Nginx, Keepalived is installed on each server, respectively. Lord partition configuration: Node planning CPU ...

LVS load balancing combined KeePalived

LVS load balancing combined KeePalived First, LVS Second, Keepalived Third, the principle of Keepalived implementation Fourth, LVS + KeepaliveD High Access Cluster Deployment Experiment 1. Configure t...

LVS+Keepalived+Nginx implements HA

@(LVS) I. Introduction LVS is short for Linux Virtual Server, which means Linux virtual server, which is a virtual server cluster system. The project was established in May 1998 by Dr. Zhang Wenzhao a...

LVS/DR+Keepalived+nginx (transfer)

High availability cluster architecture configuration based on LVS/DR+Keepalived+nginx of cloud virtual machine Recently, the company asked me to deploy a cluster architecture, choose to choose DR-base...

keepalived + lvs + nginx high availability

Environment Description: IP addresses Deploying Applications 192.168.10.100 VIP0 192.168.10.101 VIP1 192.168.10.17 keepalived+lvs 192.168.10.16 keepalived+lvs 192.168.10.15 nginx 192.168.10.14 nginx k...

More Recommendation

lvs + keepalived + nginx step on mine

1.RS: Open Nginx server where the "routing" function, turn off the "ARP query" feature (this setting does not take effect, set lo, may cause the host not the original remote connec...

lvs+keepalived+nginx study notes

lvs principle reference: https://blog.csdn.net/Ki8Qzvka6Gz4n450m/article/details/79119665 https://blog.csdn.net/lupengfei1009/article/details/86514445 Build a demo in DR mode Demo software version (do...

lvs+keepalived+nginx environment construction

Install keepalived keepalived installed as a Linux system service keepalived common commands Use keepalived virtual VIP vi /etc/keepalived/keepalived.conf The content of the keepalived.conf file is as...

LVS+keepalived+nginx reverse proxy

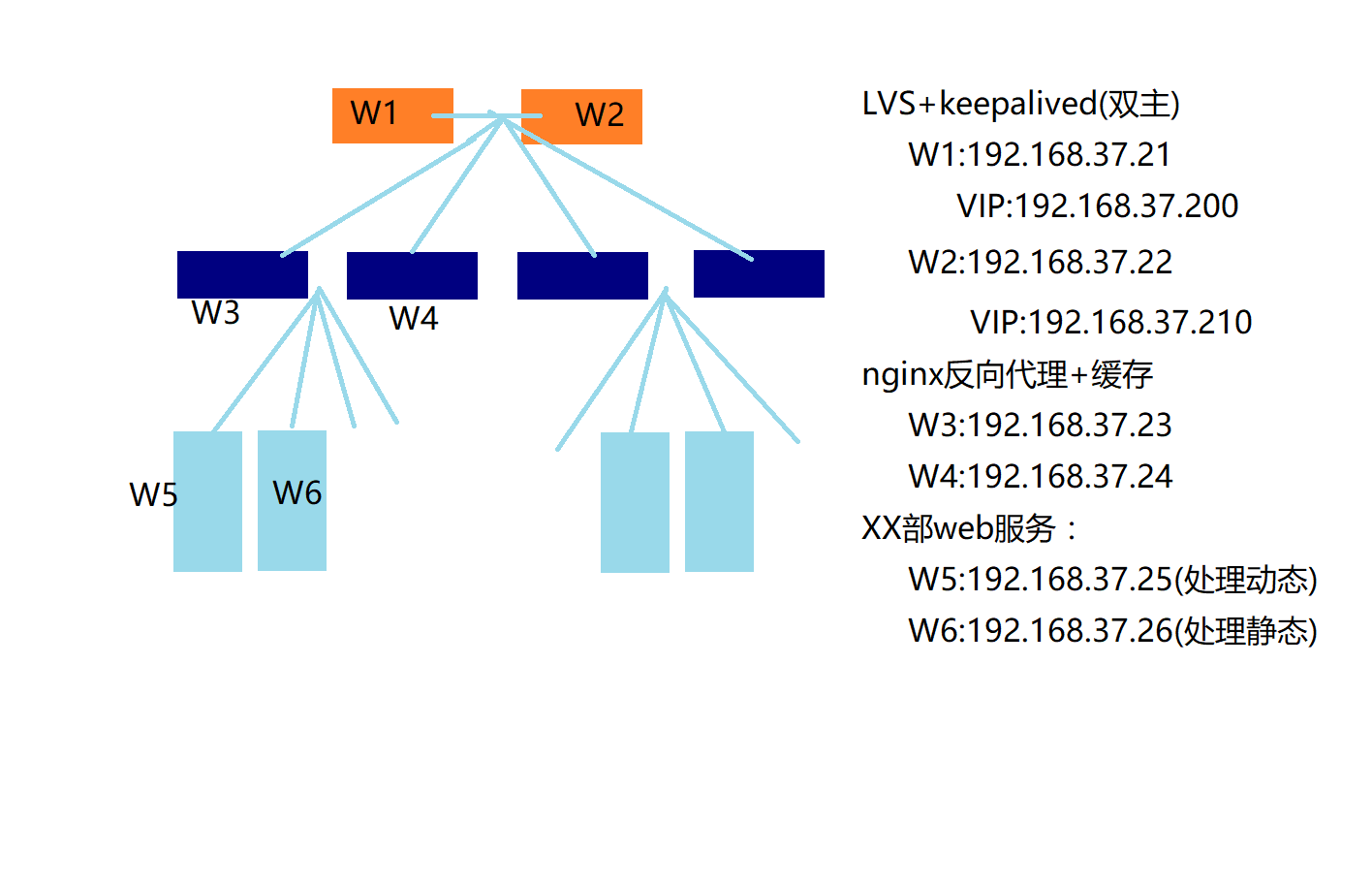

Build a simple [LVS+keepalived (dual master)] + [nginx reverse proxy + cache] architecture. 1. Basic environment Note: The following experiments were performed with firewalld and selinux turned off. 2...

LVS+Keepalived+nginx installation and configuration

Operating system Centos 6.4 X86_64 LVS-Master:10.0.80.205 LVS-Backup:10.0.80.206 VIP:10.0.80.210 RS01:10.0.80.199 RS02:10.0.80.200 2.1. Check if there is an IPVS module LVS is part of the Linux standa...