Machine Learning: How much training data do you need?

The author is a Google software engineer with a Ph.D. in Electronic Information Engineering from Northwestern University, USA, specializing in large-scale distributed systems, compilers, and databases.

What Google's machine learning code tells us: one trillion training samples are currently needed

The nature and quantity of training data is the most important factor in determining the performance of a model. Once a model has been given comprehensive training data, good results usually follow. The question, however, is how much training data you need. That depends on the task you are performing, the performance you ultimately want from the model, the input features available, the noise in the training data, the noise in the extracted features, and the complexity of the model. All of these variables interact with one another. The way to work out the interactions is to train the model on training sets of different sizes and plot the model's learning curve across those sizes. But plotting an informative learning curve requires that you already have a reasonable amount of training data with clear characteristics. So what should you do when you are just starting to train a model, how can you detect that the model has too little training data, and how can you estimate how big a problem that will be for the training process as a whole?

In response to these questions, we offer not an exact answer but a speculative, more practical rule of thumb. The general process is to automatically generate many logistic regression problems. For each generated problem, study the relationship between the number of training samples and the performance of the trained model. Observing that relationship across the whole series of problems yields a simple rule: the rule of thumb.

I am not sure how many training samples my model needs, so I will build a model to figure out the number of training samples needed.

Here is the code that generates a series of logistic regression problems and studies how model performance varies with the number of training samples. The code runs on Tensorflow, Google's open source toolkit. It does not require any special software or hardware, and I was able to run the entire experiment on my laptop. Running the code produces the learning curves shown in Figure (1).

In Figure (1), the x-axis is the ratio of the number of training samples to the number of model parameters, and the y-axis is the model's f-score. Curves of different colors correspond to models with different numbers of parameters. For example, the red curve shows how the f-score of a model with 128 parameters changes as the number of training samples grows as 128 × 1, 128 × 2, and so on.

The first observation is that the f-score curves do not change with the parameter scale. This is what we would expect given that the model is linear, and it is reassuring that no hidden nonlinearity has crept into the model. Of course, larger models require more training samples, but for a given ratio of training samples to model parameters you get the same model performance. The second observation is that when the ratio of training samples to model parameters is 10:1, the f-score hovers around 0.85, and we can call a model at this point a well-performing model. These observations give us the 10-fold rule: to train a well-performing model, the number of training samples should be 10 times the number of model parameters.
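The experiment described above can be approximated with a small self-contained sketch. This is not the author's Tensorflow code: it uses NumPy, and the data generator, label noise model, learning rate, and step count are all assumptions chosen only to illustrate the procedure of training at several sample-to-parameter ratios and recording the f-score for each.

```python
import numpy as np


def sigmoid(z):
    # Clip to keep np.exp from overflowing on extreme logits.
    return 1.0 / (1.0 + np.exp(-np.clip(z, -30.0, 30.0)))


def f_score(y_true, y_pred):
    """F-score for binary labels in {0, 1}."""
    tp = np.sum((y_pred == 1) & (y_true == 1))
    fp = np.sum((y_pred == 1) & (y_true == 0))
    fn = np.sum((y_pred == 0) & (y_true == 1))
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def make_problem(n_samples, true_w, rng):
    """Draw features and noisy labels from a fixed logistic model (assumed setup)."""
    X = rng.normal(size=(n_samples, len(true_w)))
    y = (rng.random(n_samples) < sigmoid(X @ true_w)).astype(int)
    return X, y


def train_logreg(X, y, steps=300, lr=0.5):
    """Plain batch gradient descent on the logistic loss."""
    w = np.zeros(X.shape[1])
    for _ in range(steps):
        w -= lr * X.T @ (sigmoid(X @ w) - y) / len(y)
    return w


def learning_curve(n_params, ratios, seed=0):
    """Return one f-score per ratio of training samples to parameters."""
    rng = np.random.default_rng(seed)
    true_w = rng.normal(size=n_params)
    X_test, y_test = make_problem(2000, true_w, rng)
    scores = []
    for ratio in ratios:
        X, y = make_problem(n_params * ratio, true_w, rng)
        w = train_logreg(X, y)
        y_pred = (X_test @ w > 0).astype(int)
        scores.append(f_score(y_test, y_pred))
    return scores


if __name__ == "__main__":
    ratios = [1, 2, 5, 10, 20]
    for n_params in (16, 64):
        curve = learning_curve(n_params, ratios)
        print(n_params, [round(float(s), 3) for s in curve])
```

Plotting each returned list against `ratios` gives one learning curve per model size. The exact f-scores depend on the assumed noise level, so they need not match the 0.85 reported for the original experiment.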

The 10-fold rule therefore converts the problem of estimating the number of training samples into the problem of knowing the number of parameters in a well-performing model. This, in turn, raises some points of debate:

(1) For linear models such as logistic regression, the model assigns one parameter to each feature, so the number of parameters equals the number of input features. There may be a problem here, though: your features may be sparse, so counting the number of features is not straightforward.

Translator's Note: I take this sentence to mean that with sparse features, for example a sparse encoding such as 01001001, only a few features actually contribute to training the model and most do not. Under the linear rule above, the model assigns a parameter to every feature, including the useless ones, and the 10-fold rule then calls for a training set 10 times the number of model parameters. That number of training samples may be more than the best model actually needs. The training set size obtained from the 10-fold rule may therefore not truly reflect what is needed to derive a good model.

(2) Because of regularization and feature selection techniques, the number of features that actually enter the trained model is smaller than the number of raw features.

Translator's Note: I think these two points describe limitations of the theory above, which uses the 10-fold rule to obtain a well-performing model. The theory assumes that the input features are complete and that every feature contributes to some extent to training the model. However, the sparse features and feature reduction techniques described in (1) and (2) reduce the number of effective features, so whether the 10-fold rule still yields a well-performing model remains open to further discussion.

One way to address problems (1) and (2) is, when extracting features, to estimate the number of features using not only the labeled data but also the unlabeled data. For example, given a text corpus, you can get a sense of your feature space by counting the occurrences of each word and building a word frequency histogram. From the histogram you can remove the long-tail words to get the real, main feature count, and then apply the 10-fold rule to estimate the number of training samples you need for a well-performing model.
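A minimal sketch of that estimate, assuming whitespace tokenization and a hypothetical `min_count` cutoff for what counts as a long-tail word (neither is specified in the article; both are illustrative choices):

```python
from collections import Counter


def estimate_feature_count(documents, min_count=2):
    """Count distinct words that occur at least `min_count` times.

    Words below the cutoff are treated as the long tail and dropped.
    The cutoff value is an assumption, not something the article fixes.
    """
    counts = Counter(
        word for doc in documents for word in doc.lower().split()
    )
    kept = [word for word, count in counts.items() if count >= min_count]
    return len(kept), counts


def samples_needed(n_features, multiplier=10):
    """Apply the 10-fold rule to a feature (parameter) count."""
    return multiplier * n_features


# Toy corpus; labeled and unlabeled text can both be included here,
# since only word counts are needed.
docs = [
    "the cat sat on the mat",
    "the dog sat on the log",
    "a cat and a dog",
]

n_features, counts = estimate_feature_count(docs, min_count=2)
print(n_features, samples_needed(n_features))  # prints: 6 60
```

In this toy corpus nine distinct words appear, but three of them ("mat", "log", "and") occur only once and fall below the cutoff, leaving six main features and an estimate of 60 training samples.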

Compared to linear models like logistic regression, neural network models present a different set of problems. In order to get the number of parameters in the neural network you need to:

(1) If your input features are sparse, count the number of parameters in the embedding layer (the layer that maps sparse inputs to dense vectors).

(2) Calculate the number of edges in the neural network model.

The fundamental problem is that the relationship between parameters in a neural network is no longer linear. The empirical lessons drawn from the logistic regression model therefore no longer apply to neural networks. For models such as neural networks, you can treat the number of training samples given by the 10-fold rule as a lower bound on the amount of training data to use.

Translator's Note: In a nonlinear model such as a neural network, obtaining a well-trained model requires at least 10 times as many training samples as model parameters, and in practice more than that.
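The two counting steps for a neural network, and the resulting lower bound, can be sketched as follows. The network shape (vocabulary of 10,000, embedding size 32, one hidden layer of 64 units, one output) is purely hypothetical, and the optional bias terms are an addition to the article's edge count.

```python
def embedding_params(vocab_size, embedding_dim):
    """Parameters in an embedding layer: one vector per sparse input value."""
    return vocab_size * embedding_dim


def dense_params(layer_sizes, include_bias=False):
    """Edges between consecutive fully connected layers.

    The article counts edges only; set include_bias=True to also count
    one bias per unit (an assumption beyond the article).
    """
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out
        if include_bias:
            total += n_out
    return total


# Hypothetical network: embedding -> 64 hidden units -> 1 output.
vocab_size, embedding_dim = 10_000, 32
layer_sizes = [embedding_dim, 64, 1]

n_params = embedding_params(vocab_size, embedding_dim) + dense_params(layer_sizes)
min_samples = 10 * n_params  # 10-fold rule used as a lower bound only

print(n_params, min_samples)  # prints: 322112 3221120
```

Here the embedding layer dominates the count (320,000 of the 322,112 parameters), which is why sparse inputs deserve separate attention when estimating how much data a network needs.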

Despite the points of debate above, my 10-fold rule has worked for most problems. If you have doubts about it, you can plug your own model into the open source toolbox Tensorflow, make a hypothesis about the relationship between the number of training samples and model performance, and study that relationship through simulation experiments. If you gain other insights along the way, please feel free to share them.

It's a simple rule, but sometimes it's a model

This article mainly discusses how to obtain a well-performing model by choosing a reasonable training sample size. The author introduces the 10-fold rule for doing so: the number of training samples should be 10 times the number of model parameters. The article then examines two cases, linear models such as logistic regression and neural network models, describes the confusion or inapplicability that can arise when the rule is used to train them, and gives suggestions for how to adapt each case so that, combined with the 10-fold rule, a good model can still be obtained.

In my own model training experiments I have often run into the problem of not knowing how many training samples to choose. I would set the amount of training data based on the papers I had read and then keep trying by trial and error. I did not know this method existed before, and this article has given me some inspiration. The amount of training data and the degree to which the features contribute are crucial for a classification or regression model.

Additional supplement: Introduction to F-score values

Precision and recall (Precision & Recall)

Precision and recall are two metrics widely used in information retrieval and statistical classification to assess the quality of results.

Precision is the ratio of the number of relevant documents retrieved to the total number of documents retrieved; it measures how exact the retrieval system is.

Recall is the ratio of the number of relevant documents retrieved to the number of relevant documents in the library; it measures how complete the retrieval system is.

In general, precision is how many of the retrieved items (such as documents or web pages) are relevant, and recall is how many of the relevant items are retrieved.

Precision, recall, and the F value are important indicators for choosing among many trained models.

1. Precision = number of correct pieces of information extracted / number of pieces of information extracted

2. Recall = number of correct pieces of information extracted / number of correct pieces of information in the sample

Both values lie between 0 and 1; the closer to 1, the higher the precision or recall.

3. F value = precision * recall * 2 / (precision + recall)

That is, the F value is the harmonic mean of precision and recall, and the higher the F value, the better the performance of the model.
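As a worked example of the three formulas, here is a short sketch with made-up counts (30 correct items among 40 extracted, out of 60 correct items in the sample). The function name and the zero-denominator handling are illustrative choices.

```python
def precision_recall_f(n_correct_extracted, n_extracted, n_correct_in_sample):
    """Compute precision, recall, and F value from raw counts."""
    precision = n_correct_extracted / n_extracted if n_extracted else 0.0
    recall = (
        n_correct_extracted / n_correct_in_sample if n_correct_in_sample else 0.0
    )
    if precision + recall == 0:
        return precision, recall, 0.0
    f_value = precision * recall * 2 / (precision + recall)
    return precision, recall, f_value


p, r, f = precision_recall_f(
    n_correct_extracted=30, n_extracted=40, n_correct_in_sample=60
)
print(p, r, round(f, 3))  # prints: 0.75 0.5 0.6
```

The F value of 0.6 sits closer to the lower of the two inputs than their arithmetic mean of 0.625 would, because it combines precision and recall harmonically rather than arithmetically.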

About Google's open source toolbox Tensorflow

Tensorflow is an open source library for numerical computation based on data flow graphs, playing a role similar to the libSVM toolkit we used for SVM training.


Intelligent Recommendation

What do you need to do in a machine learning project?

Click on "Super Brother's Grocery Shop" above to easily follow Machine learning is the process of training a model on existing data and then applying the trained model to unknown data. From ...

N Bit's DAC need at least how much do you need to send?

How much do N -bit DAC need to send at least? For example, how much point does 3 -bit DAC need to send to represent all CODE? From 000-111, there are three cubes of 2 = 8 CODE; how much do you need? S...

Do you know how much trouble you need to write a WIN32 window?

Win32 form creation Define the entry function winmain() Create a window Design window class WNDCLASS assignment (assign value to member variable Registration window class RegisterClass Display and upd...

layui--select use and drop-down box to achieve keyboard selection

Note a few points: 1. The select drop-down box must be placed under the layui-form category. This layui-form does not have to be placed on the form, it is also possible to place it in a div 2. Pay att...

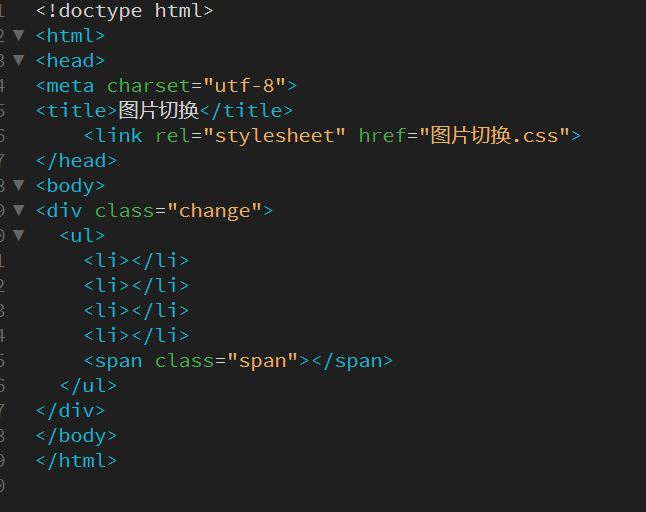

Picture switch

Just a few HTML codes Tag, there is also a span tag Take a look at the css code background-repeat specifies how to repeat the background image. Through the scale() method, the size of the element will...

More Recommendation

【Python Exercise】 Simulation Reporting Game

The code is as follows:...

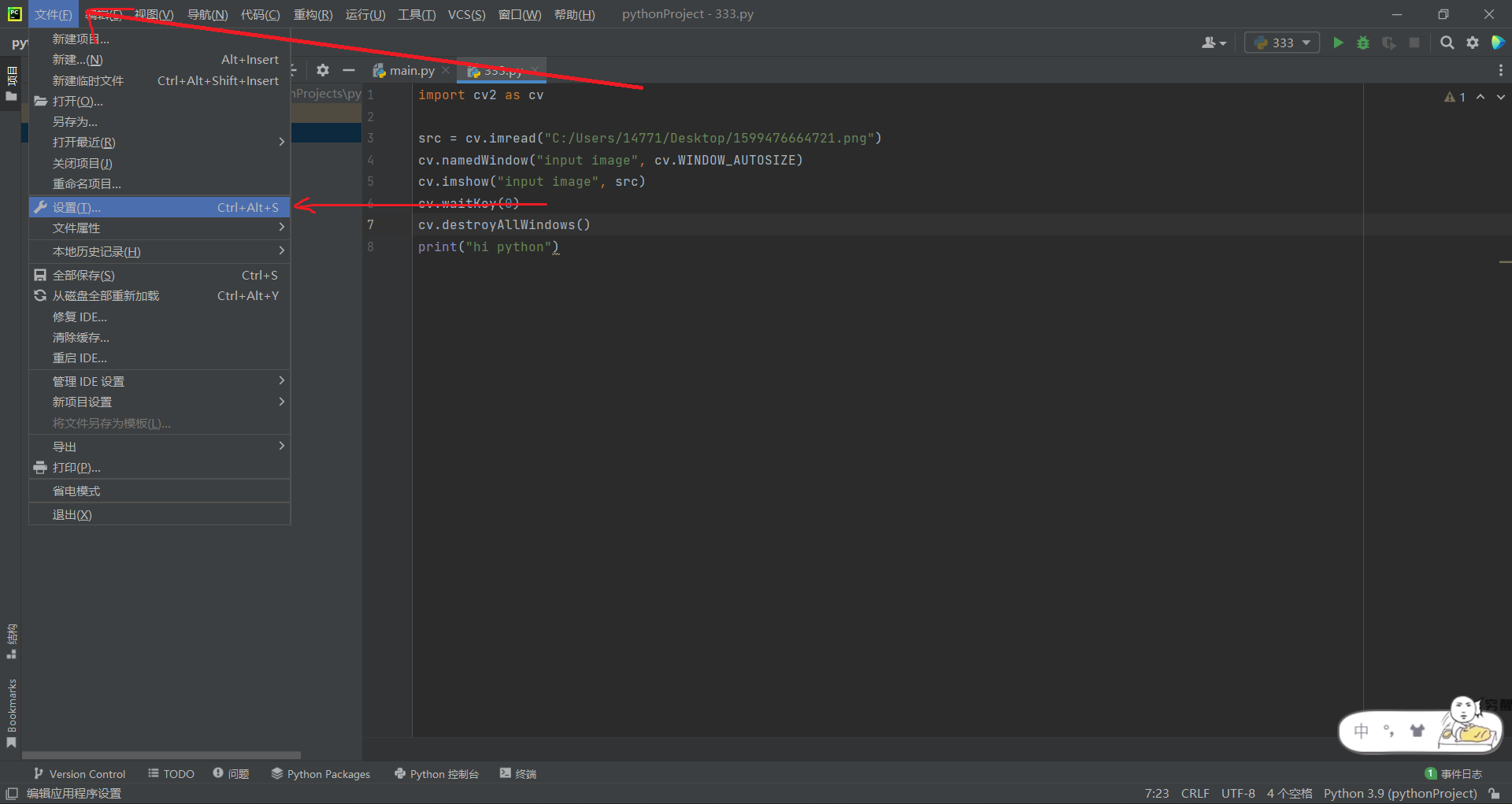

Pycharm Quickly Install OpenCV

Since the PIP source website that comes with pycharm is a foreign website, many domestic users have extremely slow downloading of other software packages in pycharm, and sometimes they will jump out o...

Use python to crawl the cover picture of Huya anchor live broadcast (scrapy)

Purpose: Use Scrapy framework to crawl the cover image of Huya anchor live broadcast Scrapy (install the Scrapy framework through pip pip install Scrapy) and Python3.x installation tutorial can find t...

Learning Record - ES6 Modularity and Node (2020-12-10)

1, what is Node? 2, Node and Java / PHP difference? 2.1, node.js and java distinction: 2.2, Node.js and PHP distinction: 3, ES6 modularization (Quote, extracted from) 3.1, Export usage: If you want to...