Netty thread model (Part 2)

1. Background

1.1. Amazing performance data

1.2. Getting started with Netty basics

2. Netty High Performance

2.1. RPC call performance model analysis

2.1.1. Three sins of poor performance of traditional RPC calls

2.1.2. Three themes of high performance

2.2. Netty High Performance

2.2.1. Asynchronous non-blocking communication

2.2.2. Zero copy

2.2.3. Memory pool

2.2.4. Efficient Reactor thread model

2.2.5. Lock-free serial design concept

2.2.6. Efficient concurrent programming

2.2.7. High-performance serialization framework

2.2.8. Flexible TCP parameter configuration capabilities

2.3. Summary

3. About the author

1. Background

1.1. Amazing performance data

Recently, a friend in the industry told me in a private message that by using Netty4 + Thrift's compressed binary codec, they achieved cross-node remote service calls at 100,000 TPS (with 1K complex POJO objects). Compared with a traditional communication framework based on Java serialization + BIO (synchronous blocking IO), performance improved by more than 8 times.

In fact, I am not surprised by this data. Based on my more than 5 years of NIO programming experience, choosing a suitable NIO framework, combining it with high-performance compressed binary codec technology, and carefully designing the Reactor thread model make the above performance figures entirely achievable.

Let's look at how Netty supports cross-node remote service calls at 100,000 TPS. Before we start, here is a brief introduction to Netty.

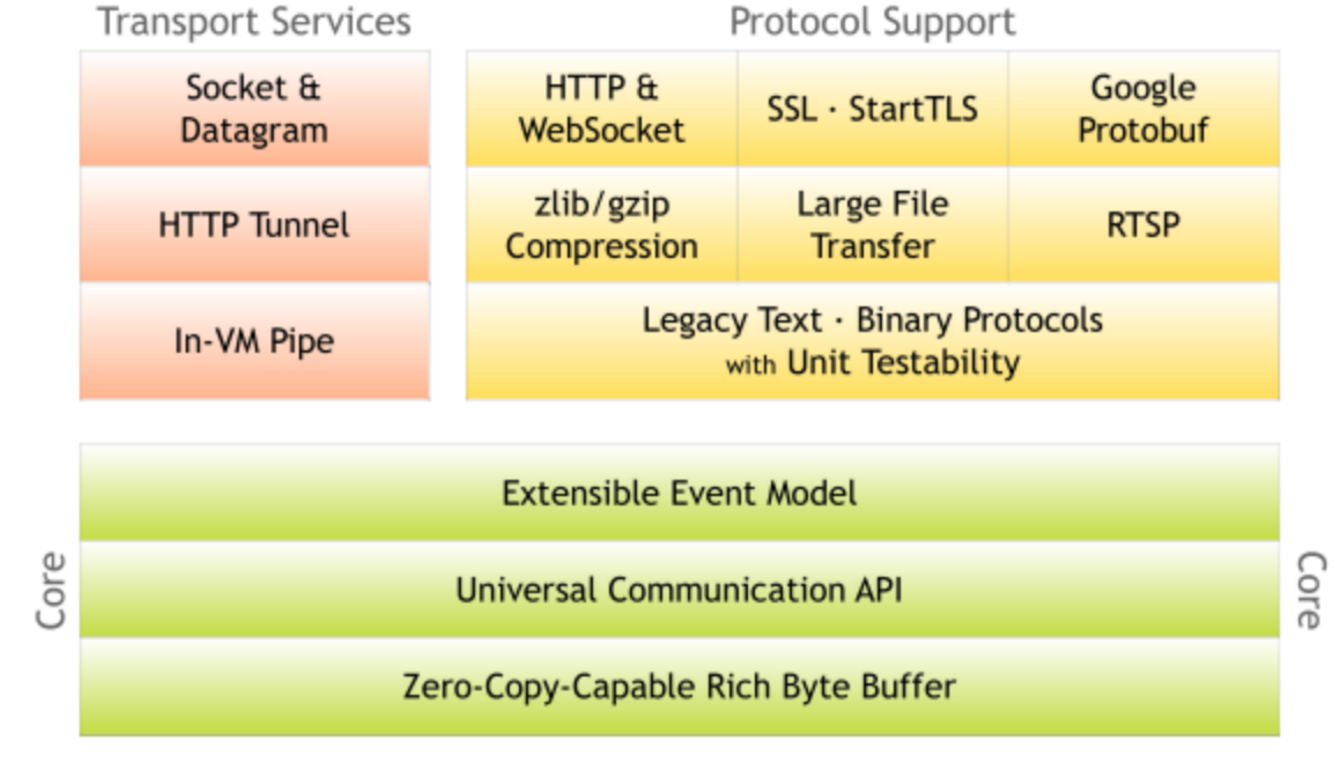

1.2. Getting started with Netty basics

Netty is a high-performance, asynchronous, event-driven NIO framework. It supports TCP, UDP and file transfer. As an asynchronous NIO framework, all of Netty's IO operations are asynchronous and non-blocking. Through the Future-Listener mechanism, users can obtain the results of IO operations either actively or through notification.
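Netty's own ChannelFuture (with its addListener method) is not reproduced here. As a JDK-only analogy, the minimal sketch below uses CompletableFuture to show the two ways of getting an asynchronous IO result: blocking on the future (active) and registering a callback (notification). The class FutureListenerDemo and the simulated asyncWrite operation are hypothetical.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.Executor;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.atomic.AtomicReference;

// JDK analogy of the Future-Listener idea (hypothetical names, not Netty's API).
class FutureListenerDemo {
    // Simulates an asynchronous IO operation that completes on an "IO thread".
    static CompletableFuture<String> asyncWrite(Executor ioThread, String msg) {
        return CompletableFuture.supplyAsync(() -> "written:" + msg, ioThread);
    }

    // Returns {actively obtained result, result delivered to the listener}.
    static String[] demo() {
        ExecutorService ioThread = Executors.newSingleThreadExecutor();
        try {
            CompletableFuture<String> future = asyncWrite(ioThread, "hello");
            AtomicReference<String> notified = new AtomicReference<>();
            // Notification style: the callback runs once the operation completes.
            CompletableFuture<Void> listenerDone = future.thenAccept(notified::set);
            // Active style: wait for the result explicitly.
            String polled = future.join();
            listenerDone.join(); // ensure the listener has run before reading it
            return new String[] { polled, notified.get() };
        } finally {
            ioThread.shutdown();
        }
    }
}
```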

As the most popular NIO framework, Netty is widely used in Internet services, big data distributed computing, the game industry, the communications industry and elsewhere. A number of well-known open source components are also built on Netty's NIO framework.

2. Netty High Performance

2.1. RPC call performance model analysis

2.1.1. Three sins of poor performance of traditional RPC calls

Network transmission problem: traditional RPC frameworks, and remote service (procedure) calls based on RMI and similar methods, use synchronous blocking IO. When client concurrency or network latency increases, synchronous blocking IO causes threads to block frequently while waiting for IO. Because the threads cannot work efficiently, IO processing capability naturally declines.

Below, we look at the drawbacks of BIO communication through the BIO communication model diagram:

Figure 2-1 BIO communication model diagram

A server using the BIO communication model usually has a dedicated Acceptor thread that listens for client connections. After accepting a connection, it creates a new thread to process that client's request message; once processing is complete, the response is returned to the client and the thread is destroyed. This is a typical one-request-one-response model. The biggest problem with this architecture is that it cannot scale elastically: as concurrent access grows, the number of server threads grows linearly with it. Threads are a precious resource of the Java virtual machine, so as their number expands, system performance drops sharply. If concurrency keeps increasing, problems such as file handle exhaustion and thread stack overflow may occur, and the server will eventually go down.
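To make the thread-per-connection cost concrete, here is a minimal JDK sketch of the BIO model just described. The class name BioServer and the line-based echo protocol are illustrative assumptions, not the original author's code. Each accepted socket gets its own thread, which is exactly what prevents this design from scaling.

```java
import java.io.BufferedReader;
import java.io.BufferedWriter;
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.InputStreamReader;
import java.io.OutputStream;
import java.io.OutputStreamWriter;
import java.io.Writer;
import java.net.ServerSocket;
import java.net.Socket;
import java.nio.charset.StandardCharsets;

// Sketch of the BIO "one connection, one thread" server (hypothetical names).
class BioServer {
    // Per-connection work: block until a request line arrives, then reply.
    static void handle(InputStream in, OutputStream out) throws IOException {
        BufferedReader reader = new BufferedReader(new InputStreamReader(in, StandardCharsets.UTF_8));
        Writer writer = new BufferedWriter(new OutputStreamWriter(out, StandardCharsets.UTF_8));
        String request = reader.readLine(); // the thread is parked while waiting for IO
        writer.write("echo:" + request + "\n");
        writer.flush();
    }

    // Acceptor loop: every accepted socket gets a brand-new thread, so the
    // thread count grows linearly with the number of concurrent clients.
    static void serve(int port) throws IOException {
        try (ServerSocket server = new ServerSocket(port)) {
            while (true) {
                Socket socket = server.accept(); // the Acceptor thread blocks here
                new Thread(() -> {
                    try (Socket s = socket) {
                        handle(s.getInputStream(), s.getOutputStream());
                    } catch (IOException e) {
                        // drop the connection; the thread then terminates
                    }
                }).start();
            }
        }
    }
}
```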

Serialization problem: Java serialization has the following typical problems:

1) Java serialization is an object encoding/decoding technology internal to Java and cannot be used across languages. For example, when integrating heterogeneous systems, a byte stream produced by Java serialization would have to be deserialized back into the original objects by another language, which is currently hard to support;

2) Compared with other open source serialization frameworks, the byte stream produced by Java serialization is too large; whether it is transmitted over the network or persisted to disk, it consumes extra resources;

3) Poor serialization performance (high CPU resource usage).
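A quick way to see problem 2) is to encode the same object with Java serialization and with a hand-written binary layout. The sketch below is an illustration under assumptions: the UserInfo class and the DataOutputStream layout are hypothetical, and exact Java-serialized sizes vary with class names and JDK version, so only the relative difference matters.

```java
import java.io.ByteArrayOutputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.ObjectOutputStream;
import java.io.Serializable;
import java.io.UncheckedIOException;

// Hypothetical POJO used only for the size comparison.
class UserInfo implements Serializable {
    private static final long serialVersionUID = 1L;
    final String userName;
    final int userId;

    UserInfo(String userName, int userId) {
        this.userName = userName;
        this.userId = userId;
    }
}

class SerializationSizeDemo {
    // Java serialization: also writes class metadata (name, fields, serialVersionUID).
    static byte[] javaSerialize(UserInfo u) {
        try (ByteArrayOutputStream bos = new ByteArrayOutputStream();
             ObjectOutputStream oos = new ObjectOutputStream(bos)) {
            oos.writeObject(u);
            oos.flush();
            return bos.toByteArray();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Hand-written binary layout: only the field values.
    static byte[] manualEncode(UserInfo u) {
        ByteArrayOutputStream bos = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(bos)) {
            out.writeUTF(u.userName); // 2-byte length + (modified) UTF-8 bytes
            out.writeInt(u.userId);   // 4 bytes
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bos.toByteArray();
    }
}
```

For the 16-character ASCII name in the test, the manual layout is 2 + 16 + 4 = 22 bytes, while the Java-serialized form is several times larger.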

Thread model problem: because synchronous blocking IO is used, each TCP connection occupies one thread. Thread resources are precious in the JVM, so when blocked IO reads and writes prevent threads from being released in time, system performance drops sharply, and the virtual machine may even fail to create new threads.

2.1.2. Three themes of high performance

1) Transmission: what kind of channel is used to send data to the peer: BIO, NIO or AIO. The IO model largely determines the framework's performance.

2) Protocol: what communication protocol is used, HTTP or a private internal protocol. Different protocols have different performance models; compared with a public protocol, a private internal protocol can usually be designed for better performance.

3) Thread: how is the datagram read, in which thread is it decoded after reading, and how are decoded messages dispatched? Different Reactor thread models have a great impact on performance.

Figure 2-2 Three elements of RPC call performance

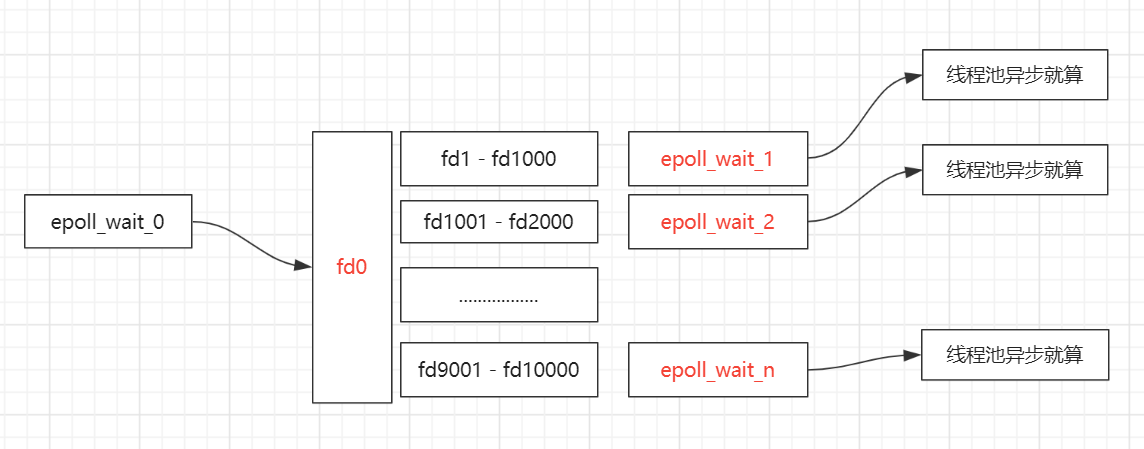

2.2. Netty High Performance

2.2.1. Asynchronous non-blocking communication

In IO programming, when multiple client requests must be handled at the same time, either multithreading or IO multiplexing can be used. IO multiplexing multiplexes the blocking of multiple IO operations onto a single select call, so that one thread can serve multiple client requests at the same time. Compared with the traditional multi-thread/multi-process model, the biggest advantage of I/O multiplexing is its low system overhead: the system does not need to create additional processes or threads, nor maintain them while they run, which reduces maintenance work and saves system resources.

JDK 1.4 introduced support for non-blocking IO (NIO). JDK 1.5 update 10 replaced the traditional select/poll with epoll on Linux, which greatly improved NIO communication performance.

The JDK NIO communication model is as follows:

Figure 2-3 NIO multiplexing model diagram

Corresponding to the Socket and ServerSocket classes, NIO provides two socket channel implementations, SocketChannel and ServerSocketChannel. Both support blocking and non-blocking modes. Blocking mode is very simple to use but has poor performance and reliability; non-blocking mode is the opposite. Developers can choose the mode that fits their needs: low-load, low-concurrency applications can use synchronous blocking IO to reduce programming complexity, while high-load, high-concurrency network applications need to be developed with NIO's non-blocking mode.
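The multiplexing model can be sketched with plain JDK NIO: one thread registers several non-blocking channels with a single Selector and services whichever are ready. To keep the sketch runnable without a network, java.nio.channels.Pipe channels stand in for client SocketChannels; the class SelectorDemo is hypothetical.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.channels.Pipe;
import java.nio.channels.SelectionKey;
import java.nio.channels.Selector;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;

// One thread, one Selector, many non-blocking channels (pipes stand in for sockets).
class SelectorDemo {
    static List<String> readAll(int n) {
        List<String> received = new ArrayList<>();
        List<Pipe> pipes = new ArrayList<>();
        try (Selector selector = Selector.open()) {
            for (int i = 0; i < n; i++) {
                Pipe pipe = Pipe.open();
                pipes.add(pipe);
                pipe.source().configureBlocking(false); // must be non-blocking to register
                pipe.source().register(selector, SelectionKey.OP_READ);
                pipe.sink().write(ByteBuffer.wrap(("msg-" + i).getBytes(StandardCharsets.UTF_8)));
            }
            ByteBuffer buf = ByteBuffer.allocate(64);
            while (received.size() < n) {
                selector.select(); // a single blocking call waits on all channels
                Iterator<SelectionKey> it = selector.selectedKeys().iterator();
                while (it.hasNext()) {
                    SelectionKey key = it.next();
                    it.remove();
                    buf.clear();
                    int len = ((Pipe.SourceChannel) key.channel()).read(buf);
                    if (len > 0) {
                        received.add(new String(buf.array(), 0, len, StandardCharsets.UTF_8));
                        key.cancel(); // each pipe carries exactly one message in this demo
                    }
                }
            }
            for (Pipe p : pipes) {
                p.source().close();
                p.sink().close();
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return received;
    }
}
```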

The Netty architecture is designed and implemented according to the Reactor model. Its server communication sequence diagram is as follows:

Figure 2-3 NIO server communication sequence diagram

The client communication sequence diagram is as follows:

Figure 2-4 NIO client communication sequence diagram

Netty's IO thread, NioEventLoop, aggregates a multiplexer (Selector) and can therefore serve hundreds or thousands of client Channels concurrently. Since read and write operations are non-blocking, the IO thread runs efficiently and avoids the thread suspension caused by frequent IO blocking. In addition, because Netty uses asynchronous communication, one IO thread can handle reads and writes for N client connections concurrently. This fundamentally solves the one-connection-one-thread limitation of traditional synchronous blocking IO and greatly improves the architecture's performance, elastic scalability and reliability.

2.2.2. Zero copy

Many users have heard that Netty has a "zero copy" feature but are not clear where it actually applies. This section explains Netty's "zero copy" in detail.

Netty's "zero copy" is mainly reflected in the following three aspects:

1) Netty uses DIRECT BUFFERS to receive and send ByteBuffers, reading and writing the Socket with off-heap direct memory, so no secondary copy of the byte buffer is needed. If traditional heap memory (HEAP BUFFERS) is used for Socket reads and writes, the JVM first copies the heap Buffer into direct memory and then writes it to the Socket. Compared with off-heap direct memory, the message therefore undergoes one extra buffer copy while being sent.

2) Netty provides a composite Buffer object that can aggregate multiple ByteBuffer objects. Users can operate on the composite Buffer as easily as on a single Buffer, avoiding the traditional approach of merging several small Buffers into one large Buffer by copying memory.

3) Netty's file transfer uses the transferTo method, which sends the data in the file buffer directly to the target Channel, avoiding the memory copies caused by the traditional loop of write calls.

Below we explain the three kinds of "zero copy" in turn, starting with how Netty creates the receive Buffer:

Figure 2-5 Asynchronous message reading "zero copy"

In each iteration of the read loop, a ByteBuf is obtained through the ioBuffer method of ByteBufAllocator. Let's look at its interface definition:

Figure 2-6 ByteBufAllocator allocates off-heap memory through ioBuffer

When performing Socket IO, to avoid copying from heap memory to direct memory, Netty's ByteBuf allocator creates off-heap memory directly, avoiding a secondary buffer copy and improving read/write performance through "zero copy".
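In plain JDK NIO the two buffer kinds look like this. The sketch assumes OpenJDK behaviour: when a heap ByteBuffer is written to a channel, the JDK first copies it into a temporary direct buffer, which is the extra copy described above. The BufferKinds class is illustrative only.

```java
import java.nio.ByteBuffer;

// Heap vs. direct buffers in plain JDK NIO (illustrative helper class).
class BufferKinds {
    // Backed by a byte[] on the Java heap; a channel write needs an extra copy
    // into native memory first.
    static ByteBuffer heapBuffer(int size) {
        return ByteBuffer.allocate(size);
    }

    // Native memory outside the Java heap; the OS can read it directly.
    static ByteBuffer directBuffer(int size) {
        return ByteBuffer.allocateDirect(size);
    }
}
```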

Next is the second "zero copy" implementation, CompositeByteBuf, which wraps multiple ByteBufs into a single ByteBuf and exposes them through one unified ByteBuf interface. Its class definition is as follows:

Figure 2-7 CompositeByteBuf class inheritance relationship

From the inheritance relationship we can see that CompositeByteBuf is actually a ByteBuf wrapper: it combines multiple ByteBufs into a collection and exposes a unified ByteBuf interface. The related definitions are as follows:

Figure 2-8 CompositeByteBuf class definition

Adding a ByteBuf requires no memory copy. The relevant code is as follows:

Figure 2-9 "Zero copy" when adding a ByteBuf
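The idea behind CompositeByteBuf can be sketched without Netty: keep references to the component buffers and translate a logical index into a (component, offset) pair on each read. SimpleCompositeBuffer below is a hypothetical, read-only toy, not Netty's implementation; in Netty itself, CompositeByteBuf provides this through methods such as addComponent.

```java
import java.nio.ByteBuffer;
import java.util.ArrayList;
import java.util.List;

// Hypothetical minimal composite buffer: components are referenced, never copied.
class SimpleCompositeBuffer {
    private final List<ByteBuffer> components = new ArrayList<>();
    private int length;

    // slice() shares the underlying memory with buf, so no bytes are copied.
    SimpleCompositeBuffer addComponent(ByteBuffer buf) {
        ByteBuffer view = buf.slice();
        components.add(view);
        length += view.remaining();
        return this;
    }

    int readableBytes() {
        return length;
    }

    // Absolute read: walk the components to find the one holding this index.
    byte getByte(int index) {
        if (index < 0 || index >= length) {
            throw new IndexOutOfBoundsException("index: " + index);
        }
        for (ByteBuffer c : components) {
            if (index < c.limit()) {
                return c.get(index);
            }
            index -= c.limit();
        }
        throw new IllegalStateException("unreachable");
    }
}
```

Because the components are views, a change made through the original buffer is visible through the composite, which is a direct way to check that nothing was copied.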

Finally, let's look at the "zero copy" of file transfer:

Figure 2-10 File transfer "zero copy"

Netty's DefaultFileRegion sends a file to the target Channel through the transferTo method. Below we focus on FileChannel's transferTo method, whose API documentation reads as follows:

Figure 2-11 File transfer "zero copy"

On many operating systems, transferTo sends the contents of the file buffer directly to the target Channel without copying. This is a more efficient transfer method that achieves "zero copy" for file transfer.
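FileChannel.transferTo is a standard JDK API, so its use can be shown directly. The sketch below copies one file to another; a file target is used instead of a socket only so the example runs without a network, and the TransferToDemo class and its temp-file helper are hypothetical. Because transferTo may move fewer bytes than requested, it is called in a loop.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.channels.FileChannel;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;
import java.util.Arrays;

class TransferToDemo {
    // Copies src to dst with transferTo; on many OSes the bytes move from the
    // file-system cache to the target without passing through user space.
    static long copy(Path src, Path dst) {
        try (FileChannel in = FileChannel.open(src, StandardOpenOption.READ);
             FileChannel out = FileChannel.open(dst, StandardOpenOption.CREATE,
                     StandardOpenOption.WRITE, StandardOpenOption.TRUNCATE_EXISTING)) {
            long position = 0;
            long size = in.size();
            while (position < size) {
                long n = in.transferTo(position, size - position, out);
                if (n <= 0) break; // nothing more could be transferred
                position += n;
            }
            return position;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Helper: writes data to a temp file, copies it, and checks the copy.
    static boolean roundTrip(byte[] data) {
        try {
            Path src = Files.createTempFile("zc-src", ".bin");
            Path dst = Files.createTempFile("zc-dst", ".bin");
            try {
                Files.write(src, data);
                long copied = copy(src, dst);
                return copied == data.length && Arrays.equals(data, Files.readAllBytes(dst));
            } finally {
                Files.deleteIfExists(src);
                Files.deleteIfExists(dst);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```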

2.2.3. Memory Pool

With the development of the JVM and JIT compilation, allocating and reclaiming objects has become a very lightweight task. Buffers are a different matter, however: allocating and reclaiming off-heap direct memory in particular is time-consuming. To reuse buffers as much as possible, Netty provides a memory-pool-based buffer reuse mechanism. Let's look at how Netty's ByteBuf implements it:

Figure 2-12 Memory pool ByteBuf

Netty provides a variety of memory management strategies, which can be customized by configuring the relevant parameters in the startup helper class (bootstrap).

Below, a performance test compares a ByteBuf recycled through the memory pool with an ordinary ByteBuf.

Use case one: create a direct memory buffer with the pooled allocator:

Figure 2-13 Non-heap memory buffer test case based on memory pool

Use case two: create a direct memory buffer with the non-pooled allocator:

Figure 2-14 Non-heap memory buffer test case created based on non-memory pool

The performance comparison results are as follows:

Figure 2-15 Comparison of buffer write performance between memory pool and non-memory pool

Performance tests show that a memory-pooled ByteBuf performs about 23 times better than a ByteBuf that is created and discarded on every use (the exact figures depend heavily on the usage scenario).
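The essence of pooling is that an expensive off-heap allocation is paid once and the buffer is then handed out again instead of being freed. The single-threaded free-list pool below is a hypothetical illustration of that reuse only; it is not how PooledByteBufAllocator works internally (arenas, chunks and thread-local caches), and it does not reproduce the 23x figure.

```java
import java.nio.ByteBuffer;
import java.util.ArrayDeque;

// Hypothetical single-threaded pool of fixed-size direct buffers.
class SimpleDirectBufferPool {
    private final ArrayDeque<ByteBuffer> free = new ArrayDeque<>();
    private final int bufferSize;
    private int allocations; // how many times allocateDirect was actually called

    SimpleDirectBufferPool(int bufferSize) {
        this.bufferSize = bufferSize;
    }

    ByteBuffer acquire() {
        ByteBuffer buf = free.pollFirst();
        if (buf == null) { // pool empty: pay the expensive allocation once
            allocations++;
            buf = ByteBuffer.allocateDirect(bufferSize);
        }
        buf.clear(); // reset position/limit for the next user
        return buf;
    }

    // Return the buffer for reuse instead of leaving it to the garbage collector.
    void release(ByteBuffer buf) {
        free.offerFirst(buf);
    }

    int allocations() {
        return allocations;
    }
}
```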

Let's briefly analyze the memory allocation of the Netty memory pool together:

Figure 2-16 Buffer allocation of AbstractByteBufAllocator

Continuing to look at the newDirectBuffer method, we find that it is an abstract method, which is implemented by a subclass of AbstractByteBufAllocator. The code is as follows:

Figure 2-17 Different implementations of newDirectBuffer

The code jumps to the newDirectBuffer method of PooledByteBufAllocator, obtains the memory area PoolArena from the Cache, and calls its allocate method for memory allocation:

Figure 2-18 Memory allocation of PooledByteBufAllocator

The allocate method of PoolArena is as follows:

Figure 2-19 PoolArena's newByteBuf abstract method

We therefore focus on DirectArena's implementation. If the use of sun.misc.Unsafe is not enabled, then:

Figure 2-20 Implementation of DirectArena's newByteBuf method

Execute the newInstance method of PooledDirectByteBuf, the code is as follows:

Figure 2-21 Implementation of the newInstance method of PooledDirectByteBuf

The ByteBuf object is reused through RECYCLER's get method; in a non-pooled implementation, a new ByteBuf object is created directly. After obtaining the ByteBuf from the buffer pool, the setRefCnt method of AbstractReferenceCountedByteBuf is called to set the reference counter, which is used for object reference counting and memory reclamation (similar to the JVM's garbage collection mechanism).
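The reference counter mentioned above can be sketched as a volatile int updated with a CAS loop, which lets retain and release run without locks. RefCounted is a hypothetical class written in the spirit of this mechanism, not a copy of AbstractReferenceCountedByteBuf; the onDeallocate callback stands in for returning memory to a pool.

```java
import java.util.concurrent.atomic.AtomicIntegerFieldUpdater;

// Hypothetical reference-counted resource with lock-free retain/release.
class RefCounted {
    private static final AtomicIntegerFieldUpdater<RefCounted> REF_CNT =
            AtomicIntegerFieldUpdater.newUpdater(RefCounted.class, "refCnt");

    private volatile int refCnt = 1; // a freshly obtained object starts with one reference
    private final Runnable onDeallocate;

    RefCounted(Runnable onDeallocate) {
        this.onDeallocate = onDeallocate;
    }

    RefCounted retain() {
        for (;;) {
            int cnt = refCnt;
            if (cnt == 0) throw new IllegalStateException("already released");
            if (REF_CNT.compareAndSet(this, cnt, cnt + 1)) return this;
        }
    }

    // Returns true if this call dropped the count to zero and freed the resource.
    boolean release() {
        for (;;) {
            int cnt = refCnt;
            if (cnt == 0) throw new IllegalStateException("already released");
            if (REF_CNT.compareAndSet(this, cnt, cnt - 1)) {
                if (cnt == 1) {
                    onDeallocate.run();
                    return true;
                }
                return false;
            }
        }
    }

    int refCnt() {
        return refCnt;
    }
}
```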

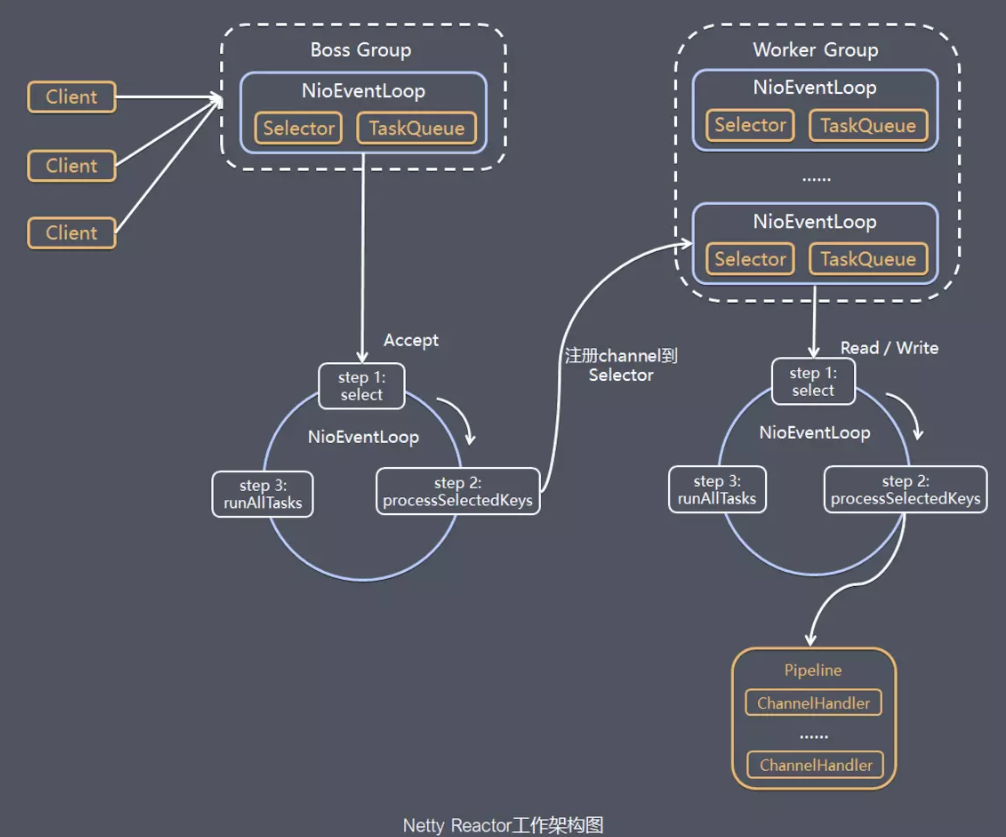

2.2.4. Efficient Reactor threading model

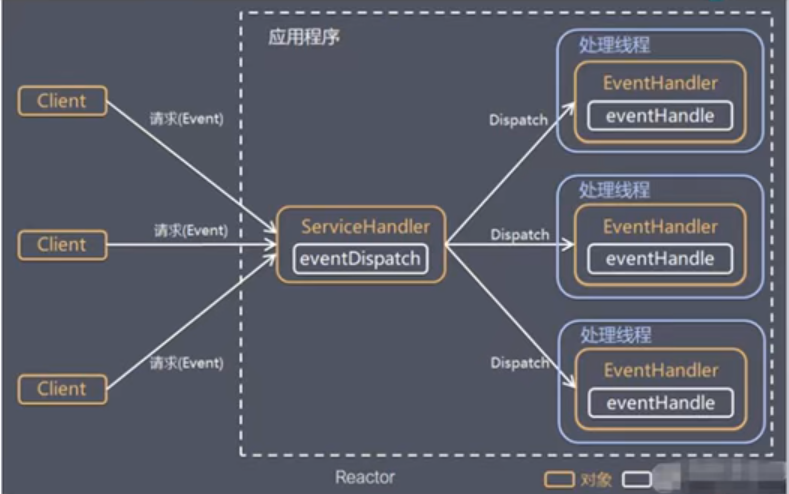

There are three commonly used Reactor thread models, as follows:

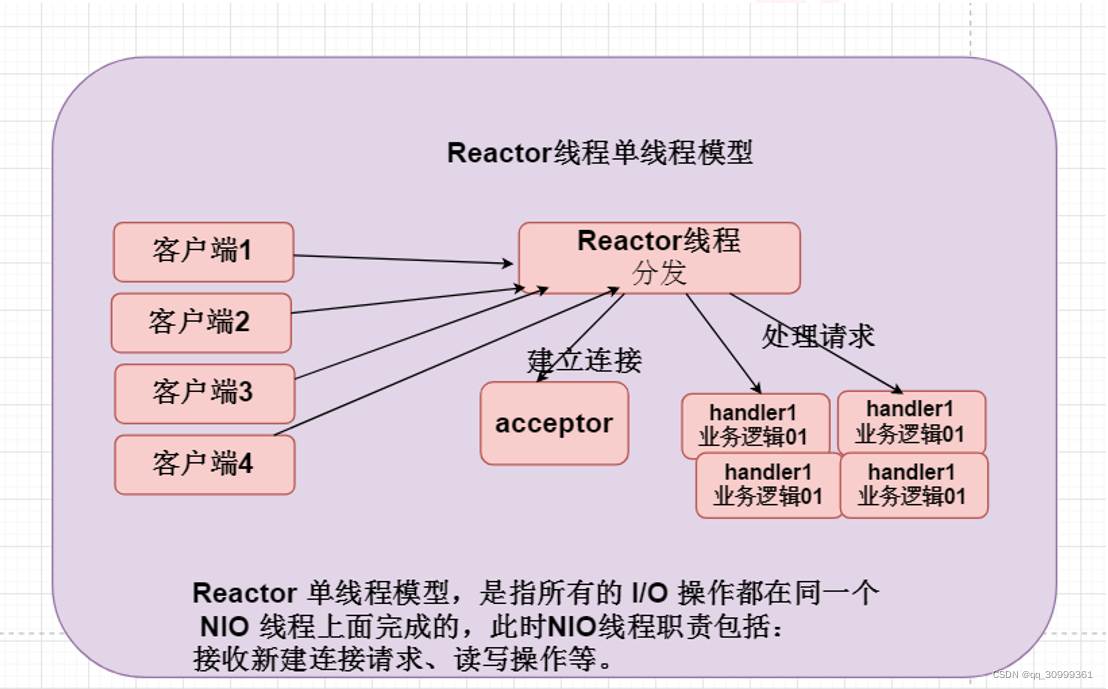

1) Reactor single-threaded model;

2) Reactor multi-threaded model;

3) Master-slave Reactor multithreading model.

The Reactor single-threaded model means that all IO operations are completed on the same NIO thread. The responsibilities of the NIO thread are as follows:

1) As the NIO server, it receives the TCP connection of the client;

2) As a NIO client, initiate a TCP connection to the server;

3) Read the request or response message of the communication peer;

4) Send request or response messages to the communication peer.

The schematic diagram of Reactor single-threaded model is as follows:

Figure 2-22 Reactor single-threaded model

Because the Reactor pattern uses asynchronous non-blocking IO, no IO operation blocks, so in theory one thread can handle all IO-related operations on its own. Architecturally, a single NIO thread can indeed fulfill these responsibilities: for example, the Acceptor receives the client's TCP connection request; once the link is established, Dispatch routes the corresponding ByteBuffer to the designated Handler for message decoding, and the user Handler can send messages to the client through the same NIO thread.

A single-threaded model can be used for some small-capacity application scenarios, but it is unsuitable for high-load, high-concurrency applications, mainly for the following reasons:

1) A single NIO thread cannot handle hundreds of thousands of links at once; even at 100% CPU load it cannot keep up with encoding, decoding, reading and sending massive numbers of messages;

2) When the NIO thread is overloaded, processing slows down and a large number of client connections time out; timed-out clients often retransmit, which further increases the load on the NIO thread and eventually leads to a large message backlog and processing timeouts. The NIO thread becomes the system's performance bottleneck;

3) Reliability: if the NIO thread crashes unexpectedly or enters an infinite loop, the entire system's communication module becomes unavailable, unable to receive or process external messages, causing node failure.

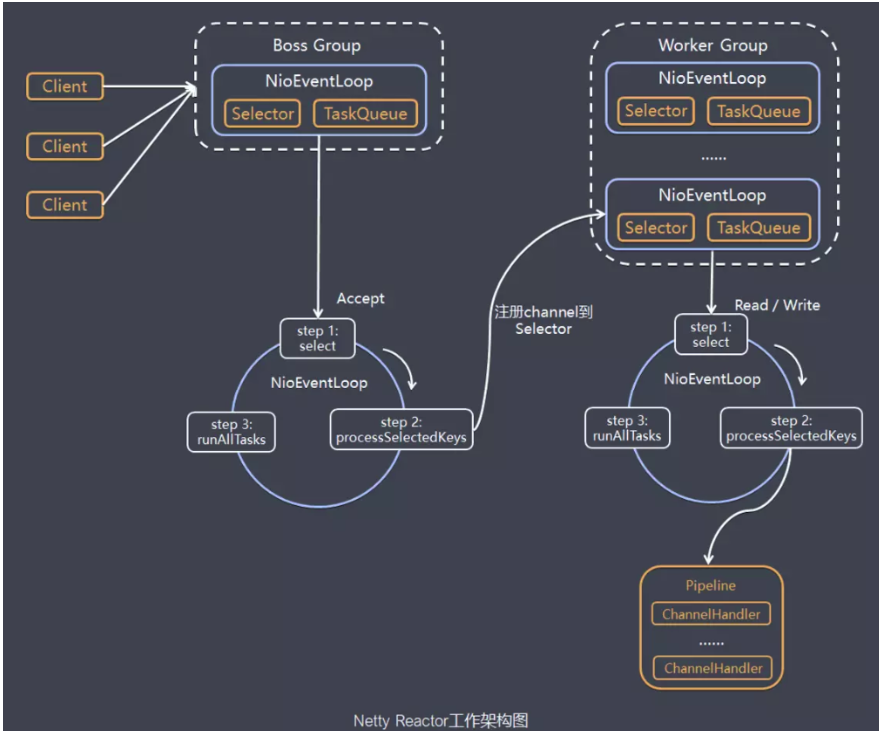

In order to solve these problems, the Reactor multi-threading model has evolved. Let's study the Reactor multi-threading model together.

The biggest difference between the Reactor multi-threaded model and the single-threaded model is that a group of NIO threads handles IO operations. Its schematic diagram is as follows:

Figure 2-23 Reactor multi-threaded model

Features of Reactor multi-threaded model:

1) A dedicated NIO thread, the Acceptor thread, listens on the server and accepts clients' TCP connection requests;

2) Network IO operations (reading, writing, etc.) are handled by a NIO thread pool, which can be implemented with a standard JDK thread pool containing a task queue and N available threads. These NIO threads are responsible for reading, decoding, encoding and sending messages;

3) One NIO thread can handle N links at the same time, but each link is bound to exactly one NIO thread, which prevents concurrency problems.
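Feature 3) is the key one: a link is bound to one NIO thread for its whole lifetime. The sketch below models the IO thread pool as N single-threaded executors and assigns links round-robin. SimpleEventLoopGroup, register and fire are hypothetical names, and string IDs stand in for real channels.

```java
import java.util.Map;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Supplier;

// Hypothetical "NIO thread pool": N single-threaded loops, one loop per link.
class SimpleEventLoopGroup implements AutoCloseable {
    private final ExecutorService[] loops;
    private final AtomicInteger next = new AtomicInteger();
    private final Map<String, ExecutorService> binding = new ConcurrentHashMap<>();

    SimpleEventLoopGroup(int nThreads) {
        loops = new ExecutorService[nThreads];
        for (int i = 0; i < nThreads; i++) {
            loops[i] = Executors.newSingleThreadExecutor();
        }
    }

    // Called once when a link is accepted: pick a loop round-robin and remember it.
    ExecutorService register(String channelId) {
        return binding.computeIfAbsent(channelId,
                id -> loops[Math.floorMod(next.getAndIncrement(), loops.length)]);
    }

    // Every later event for the link runs on the loop chosen at registration.
    <T> CompletableFuture<T> fire(String channelId, Supplier<T> event) {
        ExecutorService loop = binding.get(channelId);
        if (loop == null) throw new IllegalStateException("not registered: " + channelId);
        return CompletableFuture.supplyAsync(event, loop);
    }

    @Override
    public void close() {
        for (ExecutorService loop : loops) loop.shutdown();
    }
}
```

With two loops and three links, links "a" and "c" share one thread and "b" gets the other, while every event of a given link always lands on the same thread.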

In most scenarios the Reactor multi-threaded model meets performance requirements. In some special scenarios, however, having one NIO thread listen for and accept all client connections can cause performance problems: for example, when millions of clients connect concurrently, or when the server must perform security authentication on the client's handshake message and the authentication itself is very expensive. In such scenarios a single Acceptor thread may not be fast enough. To solve this, a third Reactor threading model emerged: the master-slave Reactor multi-threading model.

In the master-slave Reactor thread model, the server no longer accepts client connections on a single NIO thread but on a separate NIO thread pool. After the Acceptor receives a client TCP connection request and finishes processing it (possibly including access authentication), it registers the newly created SocketChannel with one IO thread in the IO thread pool (the sub-reactor thread pool), which is then responsible for reading, writing, encoding and decoding on that SocketChannel. The Acceptor thread pool is used only for client login, handshake and security authentication; once the link is established, it is registered with an IO thread in the back-end subReactor thread pool, which handles all subsequent IO operations.

Its threading model is shown below:

Figure 2-24 Reactor master-slave multithreading model

The master-slave NIO thread model solves the problem of a single server listening thread being unable to handle all client connections efficiently. For this reason, Netty's official demos recommend this threading model.

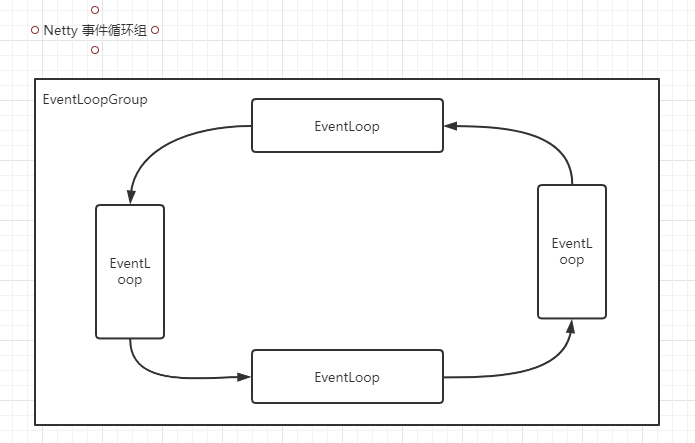

In fact, Netty's threading model is not fixed. By creating different EventLoopGroup instances in the startup helper class (bootstrap) and configuring them with appropriate parameters, all three Reactor threading models above can be supported. Precisely because Netty offers such flexible customization of the Reactor threading model, it can meet the performance requirements of different business scenarios.

2.2.5. Lock-free serial design concept

In most scenarios, parallel multithreading improves a system's concurrent performance. However, if concurrent access to shared resources is handled improperly, it causes severe lock contention and ultimately degrades performance. To minimize the cost of lock contention, a serial design can be adopted: a message is processed within the same thread as far as possible, with no thread switches along the way, which avoids multi-thread contention and synchronization locks.

To maximize performance, Netty uses a lock-free serial design, executing operations serially inside the IO thread to avoid the degradation caused by multi-thread contention. On the surface, a serial design seems to underuse the CPU and offer too little concurrency. However, by tuning the thread parameters of the NIO thread pool, multiple serialized threads can run in parallel at the same time. This locally lock-free, serial thread design performs better than a model with one queue and multiple worker threads.

The working principle of Netty's serial design is shown below:

Figure 2-25 Netty serial design working principle diagram

After Netty's NioEventLoop reads a message, it directly calls ChannelPipeline's fireChannelRead(Object msg). As long as the user does not actively switch threads, NioEventLoop keeps calling through to the user's Handler without any thread switch in between. This serial approach avoids the lock contention caused by multi-threaded operation and is optimal from a performance point of view.
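The serial idea can be reduced to a small experiment: handler state is mutated only on its own single loop thread, so a plain long counter stays correct even when many threads produce events at once. SerialPipelineDemo and submitRead are hypothetical stand-ins for NioEventLoop and the pipeline, not Netty APIs.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;

// Hypothetical sketch: state confined to one thread needs no locks.
class SerialPipelineDemo {
    // Plain field, no volatile/atomic/lock: only the loop thread ever touches it.
    private long messagesHandled;
    private final ExecutorService loop = Executors.newSingleThreadExecutor();

    // Any thread may call this; the work is handed to the loop thread
    // instead of being executed under a lock by the caller.
    CompletableFuture<Void> submitRead(Object msg) {
        return CompletableFuture.runAsync(() -> messagesHandled++, loop);
    }

    // Reads the counter on the loop thread as well.
    long handled() {
        return CompletableFuture.supplyAsync(() -> messagesHandled, loop).join();
    }

    void shutdown() {
        loop.shutdown();
    }
}
```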

2.2.6. Efficient concurrent programming

Netty's efficient concurrent programming is mainly reflected in the following points:

1) Extensive and correct use of volatile;

2) The extensive use of CAS and atomic classes;

3) The use of thread-safe containers;

4) Improve concurrency performance through read-write locks.
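Point 4) can be illustrated with the JDK's ReentrantReadWriteLock: readers share the read lock, and only writers take the exclusive write lock, which suits read-mostly data. ReadMostlyRegistry is a hypothetical example and is not taken from Netty's source.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.concurrent.locks.ReadWriteLock;
import java.util.concurrent.locks.ReentrantReadWriteLock;

// Hypothetical read-mostly registry guarded by a read-write lock.
class ReadMostlyRegistry<K, V> {
    private final Map<K, V> map = new HashMap<>();
    private final ReadWriteLock lock = new ReentrantReadWriteLock();

    // Many threads can hold the read lock at the same time.
    V get(K key) {
        lock.readLock().lock();
        try {
            return map.get(key);
        } finally {
            lock.readLock().unlock();
        }
    }

    // Writers are exclusive: they wait for readers to finish and block new ones.
    void put(K key, V value) {
        lock.writeLock().lock();
        try {
            map.put(key, value);
        } finally {
            lock.writeLock().unlock();
        }
    }
}
```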

For the details of Netty's efficient concurrent programming, see my article "Analysis of the Application of Multithreaded Concurrent Programming in Netty", shared on Weibo, which introduces and analyzes Netty's multithreading techniques and their applications in detail.

2.2.7. High-performance serialization framework

The key factors affecting serialization performance are summarized as follows:

1) The size of the serialized byte stream (network bandwidth usage);

2) Serialization & deserialization performance (CPU resource occupation);

3) Whether cross-language use is supported (integration of heterogeneous systems and switching development languages).

Netty supports Google Protobuf out of the box. By extending Netty's codec interfaces, users can plug in other high-performance serialization frameworks, such as Thrift's compressed binary codec.
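Whatever serialization framework produces the bytes, a codec must also frame them, because TCP delivers a byte stream with no message boundaries. Below is a JDK-only sketch of a 4-byte length-prefixed codec of the kind one would plug into a pipeline; LengthPrefixedCodec is hypothetical, and in Netty this job is usually delegated to built-in handlers such as LengthFieldBasedFrameDecoder.

```java
import java.io.ByteArrayOutputStream;
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;

// Hypothetical codec: frame = 4-byte big-endian length + serialized payload.
class LengthPrefixedCodec {
    static byte[] encode(byte[] payload) {
        return ByteBuffer.allocate(4 + payload.length).putInt(payload.length).put(payload).array();
    }

    private final ByteArrayOutputStream pending = new ByteArrayOutputStream();

    // Feed arbitrary chunks as they arrive; returns every frame completed so far.
    List<byte[]> decode(byte[] chunk) {
        pending.write(chunk, 0, chunk.length);
        ByteBuffer buf = ByteBuffer.wrap(pending.toByteArray());
        List<byte[]> frames = new ArrayList<>();
        while (buf.remaining() >= 4) {
            int len = buf.getInt(buf.position()); // peek at the length without consuming it
            if (buf.remaining() < 4 + len) break; // wait for the rest of this frame
            buf.position(buf.position() + 4);
            byte[] frame = new byte[len];
            buf.get(frame);
            frames.add(frame);
        }
        pending.reset();
        pending.write(buf.array(), buf.position(), buf.remaining()); // keep leftover bytes
        return frames;
    }
}
```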

Let's take a look at the comparison of byte arrays serialized by different serialization & deserialization frameworks:

Figure 2-26 Comparison of serialized byte stream size across serialization frameworks

As the figure above shows, the byte stream produced by Protobuf is only about 1/4 the size of that produced by Java serialization. It is precisely because of the poor performance of Java's native serialization that many high-performance open source serialization technologies and frameworks have emerged (poor performance is only one reason; there are others, such as cross-language support and IDL definitions).

2.2.8. Flexible TCP parameter configuration capabilities

Setting TCP parameters properly can noticeably improve performance in certain scenarios, for example SO_RCVBUF and SO_SNDBUF; if they are set improperly, the impact on performance is considerable. Below are several configuration items that have a large impact on performance:

1) SO_RCVBUF and SO_SNDBUF: Usually the recommended value is 128K or 256K;

2) TCP_NODELAY: Nagle's algorithm coalesces small packets in the buffer into larger packets, preventing large numbers of small packets from congesting the network and thereby improving network efficiency. For latency-sensitive applications, however, this optimization needs to be turned off (by enabling TCP_NODELAY);

3) Soft interrupts: if the Linux kernel supports RPS (version 2.6.35 and above), enabling RPS spreads soft-interrupt processing across CPUs and improves network throughput. RPS computes a hash from the packet's source address, destination address, source port and destination port, and uses that hash to choose the CPU that runs the soft interrupt. At a higher level, this binds each connection to a CPU, and the hash balances soft interrupts across multiple CPUs, improving parallel network processing.
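With the plain JDK, the socket options from items 1) and 2) are set through StandardSocketOptions; in Netty the corresponding constants are ChannelOption.SO_RCVBUF, ChannelOption.SO_SNDBUF and ChannelOption.TCP_NODELAY, applied through the bootstrap's option() and childOption() methods. The TcpOptionsDemo class is a hypothetical sketch; buffer sizes are only hints that the kernel may round or cap. RPS from item 3) is configured in the kernel rather than in application code.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.StandardSocketOptions;
import java.nio.channels.SocketChannel;

// Hypothetical helper applying the TCP tuning discussed above.
class TcpOptionsDemo {
    static SocketChannel openTuned() {
        SocketChannel ch = null;
        try {
            ch = SocketChannel.open();
            ch.setOption(StandardSocketOptions.SO_RCVBUF, 128 * 1024); // hint; kernel may adjust
            ch.setOption(StandardSocketOptions.SO_SNDBUF, 128 * 1024); // hint; kernel may adjust
            ch.setOption(StandardSocketOptions.TCP_NODELAY, true);     // disable Nagle's algorithm
            return ch;
        } catch (IOException e) {
            if (ch != null) {
                try {
                    ch.close();
                } catch (IOException suppressed) {
                    e.addSuppressed(suppressed);
                }
            }
            throw new UncheckedIOException(e);
        }
    }
}
```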

Netty can flexibly configure TCP parameters in the startup helper class (bootstrap) to suit different user scenarios. The relevant configuration interfaces are defined as follows:
