Linux kernel network packet processing flow

from kernel-4.9:

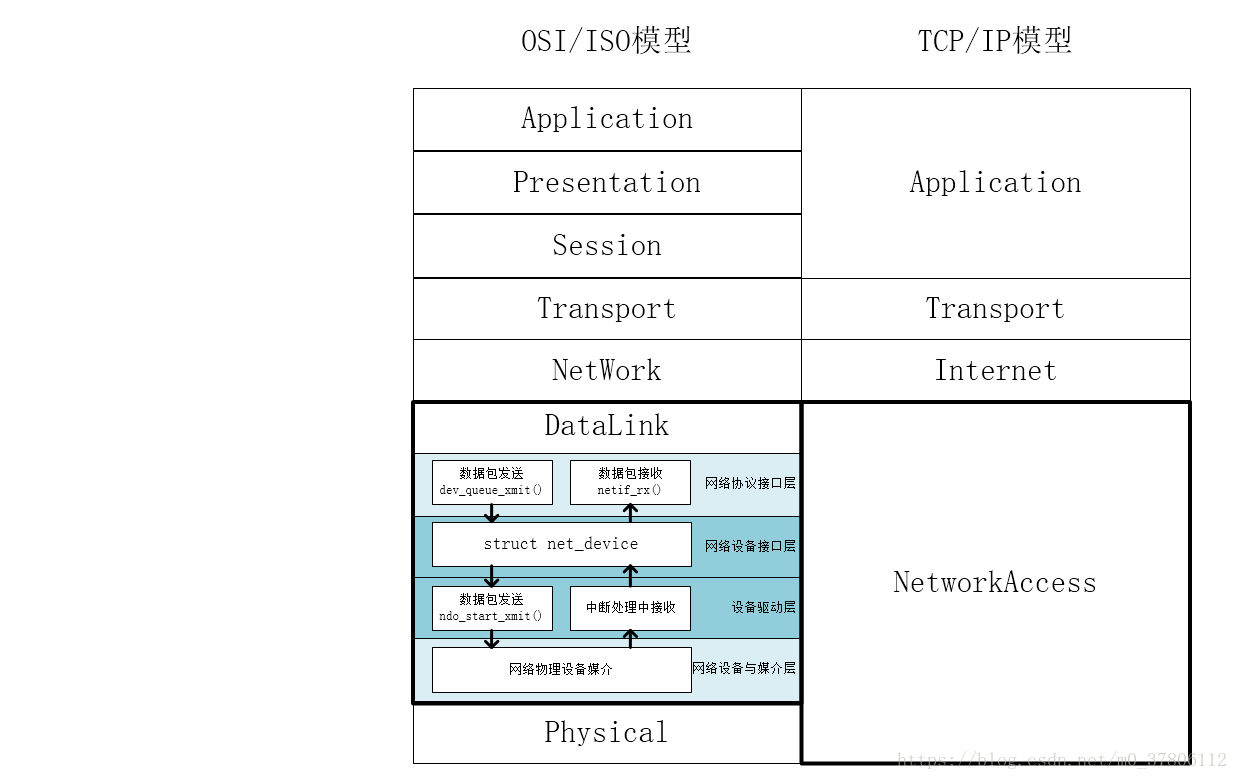

0. Linux kernel network packet processing flow-network hardware

The network card works at the physical layer and the data link layer. It mainly consists of a PHY/MAC chip, Tx/Rx FIFOs, and a DMA engine. The network cable connects to the PHY chip through a transformer, the PHY chip connects to the MAC chip over MII, and the MAC chip connects to the PCI bus.

PHY/MAC chip

The PHY chip is mainly responsible for CSMA/CD, analog-to-digital conversion, encoding and decoding, and serial-to-parallel conversion.

The MAC chip is mainly responsible for:

- Conversion between bit streams and frames: a 7-byte preamble followed by a 1-byte start frame delimiter (SFD)
- CRC check
- Packet filtering: L2 filtering, VLAN filtering, manageability/host filtering
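To make the CRC check concrete, here is a minimal software sketch of the CRC-32 used for the Ethernet frame check sequence (FCS). Real MAC chips do this in hardware. The names `eth_crc32` and `frame_fcs_ok` are hypothetical, and the sketch assumes the FCS is stored least-significant byte first, which is the usual software convention.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Bitwise CRC-32 (reflected polynomial 0xEDB88320), as used for the Ethernet FCS. */
uint32_t eth_crc32(const uint8_t *data, size_t len)
{
    uint32_t crc = 0xFFFFFFFFu;               /* initial value */

    assert(data != NULL || len == 0);
    for (size_t i = 0; i < len; i++) {
        crc ^= data[i];
        for (int bit = 0; bit < 8; bit++) {
            /* shift right; XOR in the polynomial when the dropped bit was 1 */
            if (crc & 1u)
                crc = (crc >> 1) ^ 0xEDB88320u;
            else
                crc >>= 1;
        }
    }
    return crc ^ 0xFFFFFFFFu;                 /* final inversion */
}

/* Hypothetical helper: does the trailing 4-byte FCS match the frame contents?
 * Assumes the FCS bytes are stored least-significant byte first. */
int frame_fcs_ok(const uint8_t *frame, size_t len_with_fcs)
{
    if (len_with_fcs < 4)
        return 0;

    size_t n = len_with_fcs - 4;
    uint32_t want = eth_crc32(frame, n);
    uint32_t got = (uint32_t)frame[n]
                 | (uint32_t)frame[n + 1] << 8
                 | (uint32_t)frame[n + 2] << 16
                 | (uint32_t)frame[n + 3] << 24;
    return want == got;
}
```

A receiver would call `frame_fcs_ok()` on the bytes between the SFD and the end of the frame and drop the frame when it returns 0.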

Intel's Gigabit NICs are represented by the 82575/82576, and its 10 Gigabit NICs by the 82598/82599.

1. Linux kernel network packet processing flow-network card driver

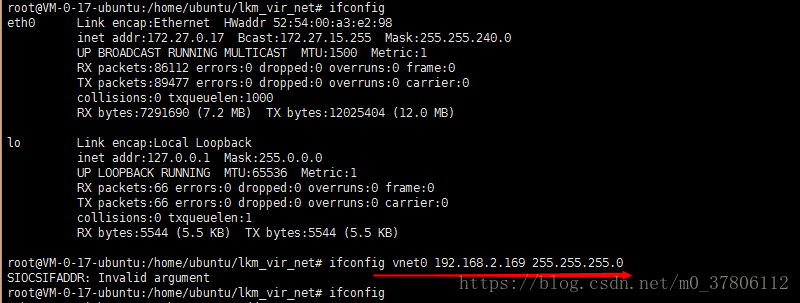

Network card driver ixgbe initialization

For each new interface, the NIC driver inserts a data structure into a global list of network devices. Each interface is described by a `struct net_device`, which is defined in `<linux/netdevice.h>`. This structure must be allocated dynamically.

Every network card, physical or virtual, must have a `net_device`. The struct is allocated inside the network card driver, and different cards use different vendor drivers; here we look at how the ixgbe driver initializes. The functions that create a `net_device` are `alloc_etherdev()` and `alloc_etherdev_mq()`.

PCI devices:

In the kernel, a PCI driver is described by a `struct pci_driver`. PCI devices are already enumerated when the system boots, so when the kernel finds that a detected device matches an entry in the `id_table` of a registered driver, it calls that driver's `probe` function.

For example, look at the `pci_driver` of Intel's ixgb driver, which has the same shape as the ixgbe one:

    static struct pci_driver ixgb_driver = {
        .name        = ixgb_driver_name,
        .id_table    = ixgb_pci_tbl,
        .probe       = ixgb_probe,
        .remove      = ixgb_remove,
        .err_handler = &ixgb_err_handler
    };

In `drivers/net/ethernet/intel/ixgbe/ixgbe_main.c`, registration starts from `module_init`:

    module_init
        ixgbe_init_module
            pci_register_driver

When the `probe` function is called, a network card that the driver supports has been found, so the driver can call `register_netdev()` to register the network device with the kernel. Before registering, it typically calls `alloc_etherdev()` to allocate a `net_device` and then initializes its important members.
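The match-then-probe behaviour can be sketched in user space. This is only a model under stated assumptions: the `mock_` types and functions are hypothetical stand-ins for `struct pci_device_id`, `struct pci_dev`, `struct pci_driver` and `pci_register_driver()`, and the real PCI core matches on more fields than vendor and device.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical, simplified stand-ins for the kernel's PCI structures. */
struct mock_pci_id  { uint16_t vendor, device; };
struct mock_pci_dev { uint16_t vendor, device; int bound; };

struct mock_pci_driver {
    const char *name;
    const struct mock_pci_id *id_table;      /* terminated by a {0, 0} entry */
    int (*probe)(struct mock_pci_dev *dev, const struct mock_pci_id *id);
};

/* Return the first id_table entry that matches the device, or NULL. */
const struct mock_pci_id *mock_match_id(const struct mock_pci_id *table,
                                        const struct mock_pci_dev *dev)
{
    for (; table->vendor != 0; table++)
        if (table->vendor == dev->vendor && table->device == dev->device)
            return table;
    return NULL;
}

/* Model of driver registration: probe every already-enumerated, unbound
 * device that matches the id_table. Returns the number of devices bound. */
int mock_register_driver(const struct mock_pci_driver *drv,
                         struct mock_pci_dev *devs, size_t ndevs)
{
    int bound = 0;

    assert(drv && drv->id_table && drv->probe);
    for (size_t i = 0; i < ndevs; i++) {
        const struct mock_pci_id *id = mock_match_id(drv->id_table, &devs[i]);

        if (id && !devs[i].bound && drv->probe(&devs[i], id) == 0) {
            devs[i].bound = 1;
            bound++;
        }
    }
    return bound;
}

/* Example probe. In a real driver this is where alloc_etherdev_mq() and
 * register_netdev() would be called. */
int mock_probe(struct mock_pci_dev *dev, const struct mock_pci_id *id)
{
    (void)dev;
    (void)id;
    return 0;                                 /* 0 means the device was claimed */
}
```

Registering a driver whose table lists one vendor/device pair against a list of enumerated devices binds only the matching device, which is the moment `probe` runs.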

    ixgbe_probe
        struct net_device *netdev;
        struct pci_dev *pdev;
        pci_enable_device_mem(pdev);
        pci_request_mem_regions(pdev, ixgbe_driver_name);
        pci_set_master(pdev);
        pci_save_state(pdev);
        netdev = alloc_etherdev_mq(sizeof(struct ixgbe_adapter), indices); // struct net_device is allocated here
            alloc_etherdev_mqs
                alloc_netdev_mqs(sizeof_priv, "eth%d", NET_NAME_UNKNOWN, ether_setup, txqs, rxqs);
                    ether_setup // initializes struct net_device
        SET_NETDEV_DEV(netdev, &pdev->dev);
        adapter = netdev_priv(netdev);

For reference, `alloc_etherdev_mqs()` -> `ether_setup()`:

    void ether_setup(struct net_device *dev)
    {
        dev->header_ops      = &eth_header_ops;
        dev->type            = ARPHRD_ETHER;
        dev->hard_header_len = ETH_HLEN;
        dev->min_header_len  = ETH_HLEN;
        dev->mtu             = ETH_DATA_LEN;
        dev->addr_len        = ETH_ALEN;
        dev->tx_queue_len    = 1000; /* Ethernet wants good queues */
        dev->flags           = IFF_BROADCAST|IFF_MULTICAST;
        dev->priv_flags     |= IFF_TX_SKB_SHARING;

        eth_broadcast_addr(dev->broadcast);
    }
    EXPORT_SYMBOL(ether_setup);

static struct pci_driver ixgbe_driver = {

.name = ixgbe_driver_name,

.id_table = ixgbe_pci_tbl,

.probe = ixgbe_probe, //Call ixgbe_probe() after the system detects the ixgbe network card

.remove = ixgbe_remove,

#ifdef CONFIG_PM

.suspend = ixgbe_suspend,

.resume = ixgbe_resume,

#endif

.shutdown = ixgbe_shutdown,

.sriov_configure = ixgbe_pci_sriov_configure,

.err_handler = &ixgbe_err_handler

};

static int __init ixgbe_init_module(void)

{

...

ret = pci_register_driver(&ixgbe_driver); // Register ixgbe_driver

...

}

module_init(ixgbe_init_module);

static void __exit ixgbe_exit_module(void)

{

...

pci_unregister_driver(&ixgbe_driver); // unregister ixgbe_driver

...

}

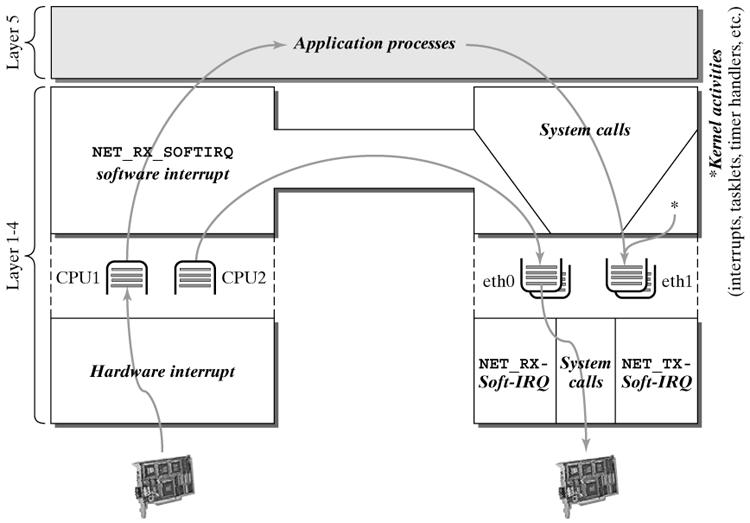

module_exit(ixgbe_exit_module);2. Linux kernel network packet processing flow-interrupt registration

    enum
    {
        HI_SOFTIRQ=0,
        TIMER_SOFTIRQ,
        NET_TX_SOFTIRQ,
        NET_RX_SOFTIRQ,
        BLOCK_SOFTIRQ,
        BLOCK_IOPOLL_SOFTIRQ,
        TASKLET_SOFTIRQ,
        SCHED_SOFTIRQ,
        HRTIMER_SOFTIRQ,
        RCU_SOFTIRQ, /* Preferable RCU should always be the last softirq */

        NR_SOFTIRQS
    };

During kernel initialization, `softirq_init()` registers the handlers associated with `TASKLET_SOFTIRQ` and `HI_SOFTIRQ`.

    void __init softirq_init(void)
    {
        ...
        open_softirq(TASKLET_SOFTIRQ, tasklet_action);
        open_softirq(HI_SOFTIRQ, tasklet_hi_action);
    }
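As a rough user-space model of what `open_softirq()` registration buys you, the sketch below keeps a handler table plus a pending bitmask and runs pending handlers on demand. All `mock_` names are hypothetical, and the real kernel keeps the pending mask per CPU and handles re-raising and `ksoftirqd` hand-off, which this sketch leaves out.

```c
#include <assert.h>
#include <stdint.h>

enum { MOCK_NET_TX = 0, MOCK_NET_RX, MOCK_NR_SOFTIRQS };

struct mock_softirq_action {
    void (*action)(struct mock_softirq_action *);
};

static struct mock_softirq_action mock_softirq_vec[MOCK_NR_SOFTIRQS];
static uint32_t mock_pending;                 /* one pending bit per softirq number */

/* Registration: store the handler in the vector slot. */
void mock_open_softirq(int nr, void (*action)(struct mock_softirq_action *))
{
    assert(nr >= 0 && nr < MOCK_NR_SOFTIRQS);
    mock_softirq_vec[nr].action = action;
}

/* Raising only sets a bit; the handler runs later. */
void mock_raise_softirq(int nr)
{
    assert(nr >= 0 && nr < MOCK_NR_SOFTIRQS);
    mock_pending |= 1u << nr;
}

/* Run every pending handler once, lowest number first. Returns how many ran. */
int mock_do_softirq(void)
{
    int ran = 0;
    uint32_t pending = mock_pending;

    mock_pending = 0;                         /* handlers may raise again */
    for (int nr = 0; nr < MOCK_NR_SOFTIRQS; nr++) {
        if ((pending & (1u << nr)) && mock_softirq_vec[nr].action) {
            mock_softirq_vec[nr].action(&mock_softirq_vec[nr]);
            ran++;
        }
    }
    return ran;
}

/* Example handler that just counts its invocations. */
static int mock_rx_runs;

void mock_net_rx_action(struct mock_softirq_action *h)
{
    (void)h;
    mock_rx_runs++;
}
```

Raising the same softirq twice before it runs still results in a single handler invocation, because the pending state is one bit per softirq number.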

The network subsystem uses two softirqs, `NET_TX_SOFTIRQ` and `NET_RX_SOFTIRQ`, which handle sending and receiving packets respectively. Both are registered in `net_dev_init()` (`net/core/dev.c`):

    open_softirq(NET_TX_SOFTIRQ, net_tx_action);
    open_softirq(NET_RX_SOFTIRQ, net_rx_action);

The softirq handlers for sending and receiving packets are therefore `net_tx_action` and `net_rx_action`.

`open_softirq()` is implemented as:

    void open_softirq(int nr, void (*action)(struct softirq_action *))
    {
        softirq_vec[nr].action = action;
    }

3. Linux kernel network packet processing flow-initialization of important structures

Each CPU has its own queue for received frames and its own data structure for handling ingress and egress traffic, so no locking is needed between CPUs. The data structure for this queue is `softnet_data` (defined in `include/linux/netdevice.h`):

    /*
     * Incoming packets are placed on per-cpu queues so that
     * no locking is needed.
     */
    struct softnet_data
    {
        struct Qdisc        *output_queue;     // list of devices with data to be transmitted
        struct sk_buff_head input_pkt_queue;   // queue of received frames waiting to be processed
        struct list_head    poll_list;         // doubly linked list of devices with input frames waiting to be processed
        struct sk_buff      *completion_queue; // list of buffers that were transmitted successfully and can be freed
        struct napi_struct  backlog;
    };

`softnet_data` is set up during boot on the `start_kernel()` path, with one `softnet_data` variable per CPU. Its most important member is `poll_list`. Whenever packets are received, the network device driver links its own `napi_struct` onto the per-CPU `softnet_data->poll_list`. When the softirq runs, `net_rx_action()` traverses the per-CPU `softnet_data->poll_list` and calls the `poll` hook of each `napi_struct` on it, which moves the packets from the driver into the network protocol stack.
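The interplay between `poll_list` and `net_rx_action()` can be modelled in a few lines. This is a sketch under assumptions, not the kernel's code: the `mock_` names are hypothetical, a singly linked list replaces `struct list_head`, and the time limit the kernel also applies is omitted.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-in for napi_struct. */
struct mock_napi {
    struct mock_napi *next;
    int weight;                               /* max packets per poll() call */
    int pending;                              /* packets waiting in the device ring */
    int (*poll)(struct mock_napi *n, int budget);
};

/* Hypothetical stand-in for the per-CPU softnet_data poll_list. */
struct mock_softnet { struct mock_napi *head, *tail; };

/* What the hard interrupt does: append the device to the poll list. */
void mock_napi_schedule(struct mock_softnet *sd, struct mock_napi *n)
{
    assert(n->weight > 0);                    /* a zero weight could never make progress */
    n->next = NULL;
    if (sd->tail)
        sd->tail->next = n;
    else
        sd->head = n;
    sd->tail = n;
}

/* poll(): hand up to `budget` packets to the stack, return how many were handled. */
int mock_poll(struct mock_napi *n, int budget)
{
    int done = n->pending < budget ? n->pending : budget;

    n->pending -= done;
    return done;
}

/* Walk the poll list until it is empty or the overall budget is spent. */
int mock_net_rx_action(struct mock_softnet *sd, int budget)
{
    int total = 0;

    while (sd->head && budget > 0) {
        struct mock_napi *n = sd->head;

        sd->head = n->next;                   /* unlink from the front */
        if (!sd->head)
            sd->tail = NULL;

        int quota = n->weight < budget ? n->weight : budget;
        int done = n->poll(n, quota);

        assert(done >= 0 && done <= quota);
        total += done;
        budget -= done;

        if (n->pending > 0)
            mock_napi_schedule(sd, n);        /* more work: back of the list */
    }
    return total;
}
```

A device that still has packets after its quota goes to the back of the list, so one busy NIC cannot starve the others within a single softirq run.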

Kernel initialization process:

start_kernel()

--> rest_init()

--> do_basic_setup()

--> do_initcall

-->net_dev_init

__init net_dev_init(){

//Each CPU has a CPU private variable _get_cpu_var(softnet_data)

//_get_cpu_var(softnet_data).poll_list is very important, you need to traverse it in the soft interrupt

for_each_possible_cpu(i) {

struct softnet_data *queue;

queue = &per_cpu(softnet_data, i);

skb_queue_head_init(&queue->input_pkt_queue);

queue->completion_queue = NULL;

INIT_LIST_HEAD(&queue->poll_list);

queue->backlog.poll = process_backlog;

queue->backlog.weight = weight_p;

}

//Hang the network to send the handler on the soft interrupt

open_softirq(NET_TX_SOFTIRQ, net_tx_action, NULL);

//Hang the network receiving handler on the soft interrupt

open_softirq(NET_RX_SOFTIRQ, net_rx_action, NULL);

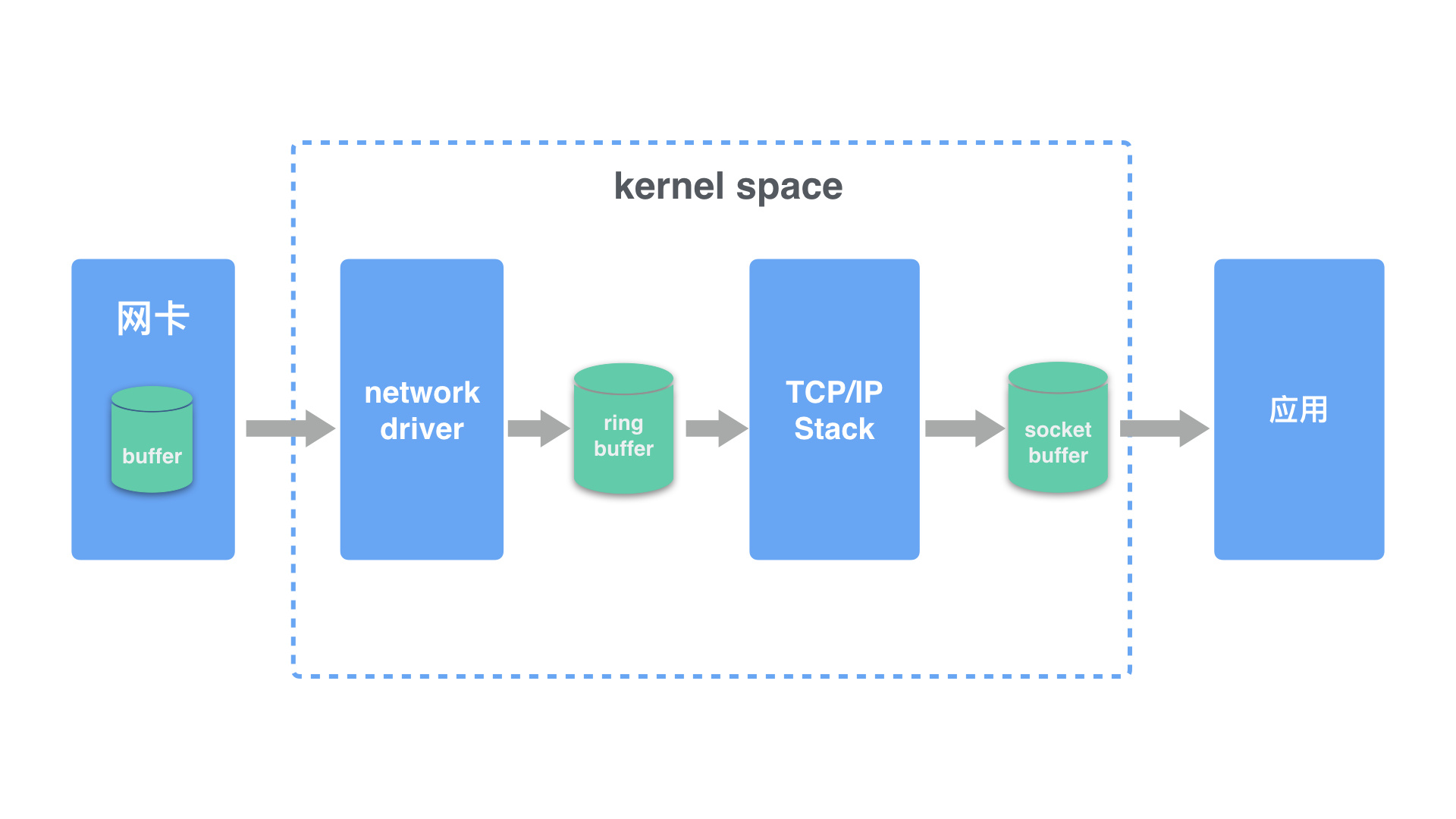

}4. Linux kernel network packet processing flow-process diagram of receiving and sending packets

`ixgbe_adapter` contains an array of `ixgbe_q_vector` (one `ixgbe_q_vector` per interrupt), and each `ixgbe_q_vector` contains a `napi_struct`.

The hard interrupt handler adds the `napi_struct` to the CPU's `poll_list`; the softirq handler `net_rx_action()` traverses `poll_list` and runs each `poll` function.

Transmit process

1. The NIC driver creates the tx descriptor ring (in coherent DMA memory) and writes the ring's bus address into the NIC register TDBA
2. The protocol stack passes the `sk_buff` down to the NIC driver through `dev_queue_xmit()`
3. The NIC driver puts the `sk_buff` into the tx descriptor ring and updates TDT
4. Once the DMA engine notices the change in TDT, it finds the next descriptor to be used in the tx descriptor ring
5. The DMA engine copies the descriptor's data buffer to the Tx FIFO over the PCI bus
6. After the copy, the MAC chip sends the packet
7. After sending, the NIC updates TDH and raises a hard interrupt to notify the CPU to release the packet in the data buffer
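The head/tail bookkeeping in the steps above can be sketched as a small ring in plain C. This is a simplified model with hypothetical `mock_` names: real descriptors carry DMA bus addresses and status bits, and the `clean` index is an assumption about how a driver tracks what it has already reclaimed.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define MOCK_RING_SIZE 8u                     /* power of two, so wrap-around is a mask */

struct mock_tx_desc { const void *buf; uint16_t len; uint8_t done; };

struct mock_tx_ring {
    struct mock_tx_desc desc[MOCK_RING_SIZE];
    uint32_t tdt;    /* tail: next slot the driver fills */
    uint32_t tdh;    /* head: next slot the NIC sends */
    uint32_t clean;  /* next slot the driver reclaims after completion */
};

static uint32_t mock_ring_next(uint32_t i)
{
    return (i + 1u) & (MOCK_RING_SIZE - 1u);
}

/* Driver side (step 3): queue a buffer and advance TDT. Returns 0, or -1 if full. */
int mock_xmit(struct mock_tx_ring *r, const void *buf, uint16_t len)
{
    if (mock_ring_next(r->tdt) == r->clean)
        return -1;                            /* one slot stays empty to tell full from empty */
    r->desc[r->tdt] = (struct mock_tx_desc){ buf, len, 0 };
    r->tdt = mock_ring_next(r->tdt);
    return 0;
}

/* NIC side (steps 4 to 7): send everything between TDH and TDT and mark it done. */
int mock_nic_send(struct mock_tx_ring *r)
{
    int sent = 0;

    while (r->tdh != r->tdt) {
        r->desc[r->tdh].done = 1;
        r->tdh = mock_ring_next(r->tdh);
        sent++;
    }
    return sent;
}

/* Driver side, after the completion interrupt: release finished buffers. */
int mock_clean_tx(struct mock_tx_ring *r)
{
    int freed = 0;

    while (r->clean != r->tdh) {
        assert(r->desc[r->clean].done);
        r->desc[r->clean].buf = NULL;         /* the real driver would free the skb here */
        r->clean = mock_ring_next(r->clean);
        freed++;
    }
    return freed;
}
```

Note that a full ring stays full after the NIC has sent everything: slots only become reusable once the driver has cleaned them, which is why the completion interrupt in step 7 matters.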

Receive process

1. The NIC driver creates the rx descriptor ring (in coherent DMA memory) and writes the ring's bus address into the NIC register RDBA
2. The NIC driver allocates an `sk_buff` and a data buffer for each descriptor, maps the data buffer with streaming DMA, and saves the buffer's bus address in the descriptor
3. The NIC receives a packet and writes it to the Rx FIFO
4. The DMA engine finds the next descriptor to be used in the rx descriptor ring
5. After the whole packet has been written to the Rx FIFO, the DMA engine copies it from the Rx FIFO to the descriptor's data buffer over the PCI bus
6. After the copy, the NIC raises a hard interrupt to notify the CPU that a new packet is in the data buffer, and the CPU runs the hard interrupt handler:
    - NAPI (taking the e1000 NIC as an example): `e1000_intr() -> __napi_schedule() -> __raise_softirq_irqoff(NET_RX_SOFTIRQ)`
    - Non-NAPI (taking the dm9000 NIC as an example): `dm9000_interrupt() -> dm9000_rx() -> netif_rx() -> napi_schedule() -> __napi_schedule() -> __raise_softirq_irqoff(NET_RX_SOFTIRQ)`
7. ksoftirqd runs the softirq handler `net_rx_action()`:
    - NAPI (taking the e1000 NIC as an example): `net_rx_action() -> e1000_clean() -> e1000_clean_rx_irq() -> e1000_receive_skb() -> netif_receive_skb()`
    - Non-NAPI (taking the dm9000 NIC as an example): `net_rx_action() -> process_backlog() -> netif_receive_skb()`
8. The NIC driver passes the `sk_buff` up to the protocol stack through `netif_receive_skb()`

5. Interrupt top half and bottom half

In hard interrupt context, `netif_rx()` adds the skb to the CPU's `softnet_data->input_pkt_queue` queue:

    netif_rx(skb); // handle skb in hard interrupt
        netif_rx_internal(skb);
            trace_netif_rx(skb);
            preempt_disable();
            rcu_read_lock();
            cpu = get_rps_cpu(skb->dev, skb, &rflow); // get the cpu id through RPS
            enqueue_to_backlog(skb, cpu, &rflow->last_qtail);
                struct softnet_data *sd;
                sd = &per_cpu(softnet_data, cpu); // get sd from the cpu id
                rps_lock(sd);
                __skb_queue_tail(&sd->input_pkt_queue, skb); // enqueue
                input_queue_tail_incr_save(sd, qtail);
                rps_unlock(sd);
                local_irq_restore(flags);
                return NET_RX_SUCCESS;
            rcu_read_unlock();
            preempt_enable();

    static int netif_rx_internal(struct sk_buff *skb)
    {
        int ret;

        net_timestamp_check(netdev_tstamp_prequeue, skb);

        trace_netif_rx(skb);
    #ifdef CONFIG_RPS
        if (static_key_false(&rps_needed)) {
            struct rps_dev_flow voidflow, *rflow = &voidflow;
            int cpu;

            preempt_disable(); // disable preemption
            rcu_read_lock();

            cpu = get_rps_cpu(skb->dev, skb, &rflow);
            if (cpu < 0)
                cpu = smp_processor_id();

            ret = enqueue_to_backlog(skb, cpu, &rflow->last_qtail); // enqueue

            rcu_read_unlock();
            preempt_enable();
        } else
    #endif
        {
            unsigned int qtail;

            ret = enqueue_to_backlog(skb, get_cpu(), &qtail);
            put_cpu();
        }
        return ret;
    }

The main job of `enqueue_to_backlog()` is to append the skb to the `softnet_data->input_pkt_queue` queue of the chosen CPU:

    static int enqueue_to_backlog(struct sk_buff *skb, int cpu,
                                  unsigned int *qtail)
    {
        struct softnet_data *sd;
        unsigned long flags;
        unsigned int qlen;

        sd = &per_cpu(softnet_data, cpu);

        local_irq_save(flags);

        rps_lock(sd);
        if (!netif_running(skb->dev))
            goto drop;
        qlen = skb_queue_len(&sd->input_pkt_queue);
        if (qlen <= netdev_max_backlog && !skb_flow_limit(skb, qlen)) {
            if (qlen) {
    enqueue:
                __skb_queue_tail(&sd->input_pkt_queue, skb); // add skb to the sd->input_pkt_queue queue
                input_queue_tail_incr_save(sd, qtail);
                rps_unlock(sd);
                local_irq_restore(flags);
                return NET_RX_SUCCESS;
            }

            /* Schedule NAPI for backlog device
             * We can use non atomic operation since we own the queue lock
             */
            if (!__test_and_set_bit(NAPI_STATE_SCHED, &sd->backlog.state)) {
                if (!rps_ipi_queued(sd))
                    ____napi_schedule(sd, &sd->backlog); // process skb in NAPI mode
            }
            goto enqueue;
        }

    drop:
        sd->dropped++;
        rps_unlock(sd);

        local_irq_restore(flags);

        atomic_long_inc(&skb->dev->rx_dropped);
        kfree_skb(skb);
        return NET_RX_DROP;
    }

    ____napi_schedule
        list_add_tail(&napi->poll_list, &sd->poll_list);

That is all the hard interrupt has to do. Afterwards, the softirq handler `net_rx_action()` traverses this list for further processing.
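The enqueue-or-drop decision in `enqueue_to_backlog()` is easier to see with the locking and RPS details stripped away. The following is a minimal sketch, assuming a hypothetical `mock_backlog` struct that tracks only counters; `MOCK_MAX_BACKLOG` stands in for `netdev_max_backlog` and the flow-limit check is left out.

```c
#include <assert.h>

#define MOCK_MAX_BACKLOG 4                    /* stands in for netdev_max_backlog */

enum { MOCK_RX_SUCCESS = 0, MOCK_RX_DROP = 1 };

/* Hypothetical counters-only model of the per-CPU backlog state. */
struct mock_backlog {
    int qlen;            /* length of input_pkt_queue */
    int napi_scheduled;  /* models NAPI_STATE_SCHED on sd->backlog */
    int schedules;       /* how often ____napi_schedule() would have run */
    int dropped;
};

int mock_enqueue_to_backlog(struct mock_backlog *sd)
{
    assert(sd->qlen >= 0);

    /* Same comparison as the kernel: enqueue while qlen <= max, drop beyond. */
    if (sd->qlen > MOCK_MAX_BACKLOG) {
        sd->dropped++;
        return MOCK_RX_DROP;
    }

    /* Only a packet arriving at an empty queue has to schedule the backlog
     * NAPI; later packets just join the queue that is already being polled. */
    if (sd->qlen == 0 && !sd->napi_scheduled) {
        sd->napi_scheduled = 1;
        sd->schedules++;
    }

    sd->qlen++;
    return MOCK_RX_SUCCESS;
}
```

Because the check is `qlen <= max`, the queue can hold one packet more than the configured limit before drops begin, and a burst of packets triggers only one schedule.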

Interrupt handling processes the skb in one of two ways:

The hard interrupt is the top half; there, `netif_rx()` distinguishes NAPI from non-NAPI. The softirq (`net_rx_action()`) is the bottom half and likewise distinguishes NAPI from non-NAPI.

- Non-NAPI
    - A non-NAPI device driver raises an interrupt for every frame it receives. Under high traffic load, the CPU spends a lot of time handling interrupts, which wastes resources. A NAPI driver mixes interrupts with polling and performs better than the old method under high traffic load.
- NAPI
    - The main idea of NAPI is to mix interrupts with polling rather than rely on a purely interrupt-driven model. When a new frame arrives, the driver disables further receive interrupts and processes everything in the input queue in one pass. From the kernel's point of view, NAPI lowers CPU load because fewer interrupts have to be handled.

Is the default NAPI or non-NAPI?

At initialization the default is non-NAPI mode, and the default poll function is `process_backlog`, as follows:

    net_dev_init
        for_each_possible_cpu(i) {
            sd->backlog.poll = process_backlog;
        }

`net_rx_action()` calls the device's poll function; if the device does not provide one, the default `process_backlog` function is used. After `process_backlog` takes an skb off the queue, it calls `netif_receive_skb()` to process it.
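A `process_backlog`-style poll function boils down to "dequeue up to a quota, deliver each packet, and un-schedule when the queue is drained". The sketch below models that with integers as packets. All `mock_` names are hypothetical, `deliver` plays the role of `netif_receive_skb()`, and the fixed-size array is an assumption made to keep the example short.

```c
#include <assert.h>
#include <stddef.h>

#define MOCK_QCAP 16

/* Hypothetical stand-in for softnet_data: a FIFO of packet ids plus a flag
 * that models whether the backlog NAPI is currently scheduled. */
struct mock_sd {
    int q[MOCK_QCAP];
    size_t head, len;
    int scheduled;
};

/* Producer side (netif_rx path): append a packet and mark the backlog scheduled. */
int mock_sd_push(struct mock_sd *sd, int pkt)
{
    if (sd->len == MOCK_QCAP)
        return -1;                            /* queue full: the packet would be dropped */
    sd->q[(sd->head + sd->len) % MOCK_QCAP] = pkt;
    sd->len++;
    sd->scheduled = 1;
    return 0;
}

/* Consumer side: deliver at most `quota` packets in arrival order. */
int mock_process_backlog(struct mock_sd *sd, int quota, void (*deliver)(int pkt))
{
    int work = 0;

    assert(deliver != NULL);
    while (work < quota && sd->len > 0) {
        int pkt = sd->q[sd->head];

        sd->head = (sd->head + 1) % MOCK_QCAP;
        sd->len--;
        deliver(pkt);                         /* netif_receive_skb() in the kernel */
        work++;
    }
    if (sd->len == 0)
        sd->scheduled = 0;                    /* drained: the next arrival must reschedule */
    return work;
}

/* Example delivery hook that records what it saw. */
static int mock_delivered, mock_last;

void mock_deliver(int pkt)
{
    mock_delivered++;
    mock_last = pkt;
}
```

When the quota runs out before the queue is empty, the flag stays set, which corresponds to the backlog remaining on the poll list for another round.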

In the softirq, `net_rx_action()` handles the skb:

- NAPI (taking the e1000 NIC as an example): `net_rx_action() -> e1000_clean() -> e1000_clean_rx_irq() -> e1000_receive_skb() -> netif_receive_skb()`
- Non-NAPI (taking the dm9000 NIC as an example): `net_rx_action() -> process_backlog() -> netif_receive_skb()`

Finally, the skb is sent to the protocol stack through `netif_receive_skb()`.

In the softirq, the call chain through `process_backlog` is:

    net_rx_action
        process_backlog
            __netif_receive_skb
                __netif_receive_skb_core

Non-NAPI vs NAPI

- (1) A NIC driver that supports NAPI must provide a polling method, `poll()`.
- (2) The kernel interface for non-NAPI is `netif_rx()`; the kernel interface for NAPI is `napi_schedule()`.
- (3) Non-NAPI uses the shared per-CPU queue `softnet_data->input_pkt_queue`; NAPI uses device memory (or the receive ring of the device driver).
