Efficiency of lock-free programming versus lock-based programming, and an implementation of a lock-free queue (C language)

tags: Linux Multithreading queue

1. The efficiency of lock-free programming versus lock-based programming

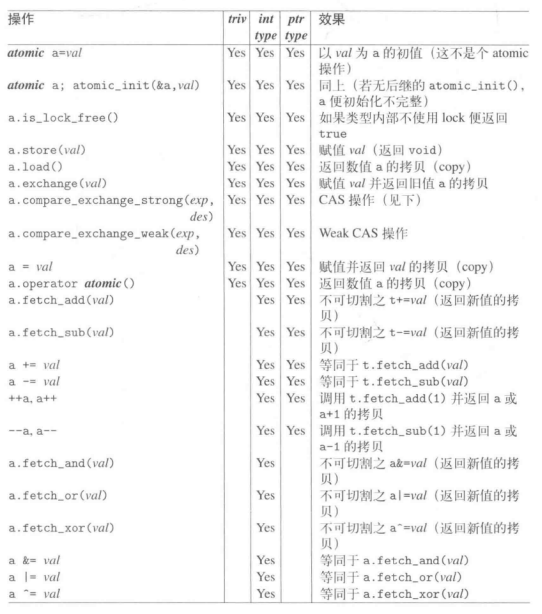

Lock-free programming controls thread synchronization through CAS (compare-and-swap) atomic operations. If you are not familiar with CAS, it is worth reading up on it first; there is plenty of material about it online.

CAS implements mutual exclusion at the hardware level. When thread concurrency is low, it performs better than an ordinary mutex. When concurrency is high, however, hardware-level mutual exclusion becomes as expensive as lock contention at the application layer, and ordinary lock-based programming can actually outperform lock-free programming.

Hardware-level atomic operations slow down the application layer and cannot be optimized any further. A well-designed lock-based multithreaded program, by contrast, can achieve high concurrency without sacrificing performance.

2. The benefits of lock-free programming

Lock-free programming spares the programmer from thorny problems such as deadlock and priority inversion. Therefore, when the application is not too complicated and the performance requirements are high, lock-based programming is a reasonable choice; when the program is more complex and the performance requirements are modest, lock-free programming can be used.

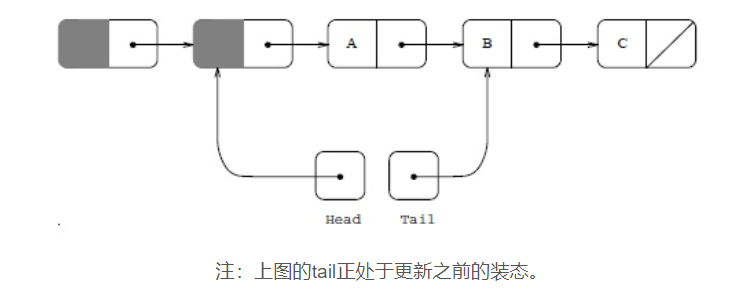

3. Implementation of a lock-free queue

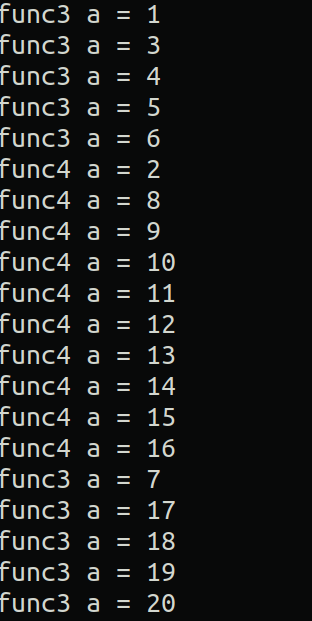

To apply the lock-free thread synchronization model, I implemented a lock-free queue. The program's output conforms to the first-in, first-out behavior of a queue.

The details are explained by comments in the code; please refer to the code:

    #include <stdio.h>
    #include <unistd.h>
    #include <stdlib.h>
    #include <pthread.h>
    #include <assert.h>

    // The queue is implemented as a singly linked list

    // Node structure
    typedef struct Node
    {
        struct Node *next;
        int data;
    } node;

    // Queue definition
    typedef struct Queue
    {
        node *front;   // always points to the dummy head node
        node *rear;    // points to the last node (the dummy node when empty)
    } queue;

    // Global queue instance
    queue que;

    // Initialize the queue
    void QueInit(queue *que)
    {
        // Allocate the dummy head node
        node *temp = (node*)malloc(sizeof(node));
        assert(temp != NULL);
        temp->next = NULL;
        que->front = que->rear = temp;
    }

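    // Check whether the queue is empty
    int QueEmpty(void)
    {
        // The CAS succeeds only when rear == front, and then writes front
        // back into rear, so the value is unchanged. It is used here as an
        // atomic compare of rear against front.
        return __sync_bool_compare_and_swap(&(que.rear), que.front, que.front);
    }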

    // Enqueue operation
    void QuePush(int *d)
    {
        // Allocate a new node
        node *temp = (node*)malloc(sizeof(node));
        assert(temp != NULL);
        temp->data = *d;
        // The new node becomes the tail, so its next pointer must be NULL
        temp->next = NULL;

        // Link the new node after the current tail with an atomic operation;
        // retry while another thread has already appended to that tail
        node *p;
        do
        {
            p = que.rear;
        }
        while (!__sync_bool_compare_and_swap(&(p->next), NULL, temp));

        // Move the tail pointer to the new node
        __sync_bool_compare_and_swap(&(que.rear), p, temp);
    }

    // Dequeue operation: returns 1 and stores the value in *d,
    // or returns 0 if the queue is empty
    int QuePop(int *d)
    {
        // temp is the node to be removed
        node *temp;
        // p records temp->next (NULL when temp is NULL); it is used below
        // as the new first node
        node *p;

        do
        {
            if (QueEmpty())
                return 0;
            temp = que.front->next;
            if (temp != NULL)
                p = temp->next;
            else
                p = NULL;
        }
        while (!__sync_bool_compare_and_swap(&(que.front->next), temp, p));

        // If the removed node was the tail, point the tail back at the head node
        __sync_bool_compare_and_swap(&(que.rear), temp, que.front);

        if (temp != NULL)
        {
            *d = temp->data;
            // The node is freed immediately; this is only safe if no other
            // thread still holds a pointer to it
            free(temp);
            return 1;
        }
        return 0;
    }

    // Two thread functions: one enqueues, the other dequeues
    void *thread_push(void *arg)
    {
        (void)arg;
        while (1)
        {
            int data = rand() % 100;
            QuePush(&data);
            printf("Queue insert element: %d\n", data);
            sleep(1);
        }
        return NULL;
    }

    void *thread_pop(void *arg)
    {
        (void)arg;
        int data;
        while (1)
        {
            sleep(2);
            if (!QuePop(&data))
                printf("Queue is empty\n");
            else
                printf("Queue output element: %d\n", data);
        }
        return NULL;
    }

    int main(void)
    {
        // Initialize the queue
        QueInit(&que);

        // Create two threads
        pthread_t id[2];
        pthread_create(&id[0], NULL, thread_push, NULL);
        pthread_create(&id[1], NULL, thread_pop, NULL);

        // Wait for the threads to finish
        pthread_join(id[0], NULL);
        pthread_join(id[1], NULL);

        // After this, the queue should be destroyed and its memory reclaimed.
        // Destroying the queue involves no multithreaded operations, so it is omitted here.
        return 0;
    }
