Algorithm Analysis: Linear Least Squares Problem

This post explains the linear least squares problem and covers the following topics:

- Definition of least squares problem

- Solving the normal equations

- Cholesky decomposition method

- QR decomposition method

- Singular value decomposition method

- Least squares of homogeneous equations

1. Definition of the problem

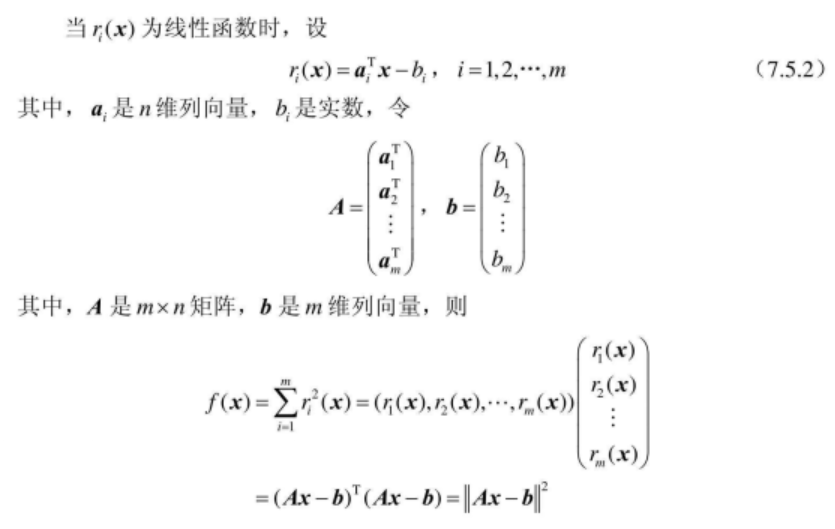

A least squares problem typically arises when fitting a model to collected data (samples). The model parameters x are chosen so that the model outputs match the m samples as closely as possible, measured by the sum of squared residuals:

$$\min_x \sum_{i=1}^{m} \left(f_i(x) - b_i\right)^2$$

Now suppose the model output is Ax, the samples form the vector b, and there are more equations than unknowns ($A \in \mathbb{R}^{m\times n}$ with m > n). The problem becomes:

$$\min_x \|Ax - b\|_2^2$$
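To make the objective concrete, here is a minimal sketch in Python with NumPy. Both the language and the library are assumptions, since the post itself contains no code. The four data points and the helper `residual_norm_sq` are hypothetical. `np.linalg.lstsq` is NumPy's built-in least squares solver and is used here only as a reference answer for the methods that follow.

```python
import numpy as np

def residual_norm_sq(A, x, b):
    """Least squares objective ||Ax - b||_2^2 (hypothetical helper)."""
    r = A @ x - b
    return float(r @ r)

# Hypothetical overdetermined system: fit a line b ~ x0 + x1 * t
# to m = 4 points with n = 2 unknowns.
t = np.array([0.0, 1.0, 2.0, 3.0])
b = np.array([1.0, 2.9, 5.1, 7.0])
A = np.column_stack([np.ones_like(t), t])   # each row is (1, t_i)

# NumPy's reference least squares solver.
x, residuals, rank, sv = np.linalg.lstsq(A, b, rcond=None)
print(x)                          # approximately [0.97 2.02]
print(residual_norm_sq(A, x, b))  # the minimal sum of squared residuals
```

Any other choice of x, for example `x + [0.01, -0.01]`, gives a strictly larger value of `residual_norm_sq` because A has full column rank.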

2. Solution methods

2.1. Normal equations

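Setting the gradient of the objective with respect to x to zero:

$$\nabla_x \|Ax - b\|_2^2 = 2A^T(Ax - b) = 0$$

gives the normal equations:

$$A^TAx = A^Tb$$

If A has full column rank, $A^TA$ is invertible and

$$x = (A^TA)^{-1}A^Tb$$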
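A minimal NumPy sketch of the normal-equation approach follows. It assumes A has full column rank. The function name `solve_normal_equations` and the data are hypothetical. The code calls `np.linalg.solve` on $A^TA$ rather than forming the inverse explicitly.

```python
import numpy as np

def solve_normal_equations(A, b):
    """Least squares via the normal equations A^T A x = A^T b.

    Assumes A has full column rank, so A^T A is invertible.
    """
    AtA = A.T @ A   # n x n, symmetric positive definite under the assumption
    Atb = A.T @ b
    return np.linalg.solve(AtA, Atb)

# Hypothetical line-fitting data: b ~ x0 + x1 * t
t = np.array([0.0, 1.0, 2.0, 3.0])
b = np.array([1.0, 2.9, 5.1, 7.0])
A = np.column_stack([np.ones_like(t), t])

x = solve_normal_equations(A, b)
print(x)   # approximately [0.97 2.02]
```

Forming $A^TA$ squares the condition number of A. This is why the decompositions below are usually preferred for ill-conditioned problems.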

2.2. Cholesky decomposition

The following content is based on [1].

Solving the normal equations directly by forming $(A^TA)^{-1}$ is computationally expensive. For a faster and more stable solution, the Cholesky decomposition is usually used instead.

The Cholesky decomposition of the matrix $A^TA$ on the left-hand side of the normal equations is:

$$A^TA = \bar{R}^T\bar{R}$$

This decomposition produces an upper triangular matrix $\bar{R}$ and its transpose. The solution vector can therefore be computed by a forward substitution followed by a back substitution:

$$\bar{R}^Tz = A^Tb, \qquad \bar{R}x = z$$
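The two substitutions can be sketched in NumPy as follows. The helper names and data are hypothetical. Note that `np.linalg.cholesky` returns the lower triangular factor L with $A^TA = LL^T$, so the upper triangular $\bar{R}$ of the text is $L^T$.

```python
import numpy as np

def forward_substitution(L, y):
    """Solve L z = y for a lower triangular L."""
    n = L.shape[0]
    z = np.zeros(n)
    for i in range(n):
        z[i] = (y[i] - L[i, :i] @ z[:i]) / L[i, i]
    return z

def back_substitution(U, z):
    """Solve U x = z for an upper triangular U."""
    n = U.shape[0]
    x = np.zeros(n)
    for i in range(n - 1, -1, -1):
        x[i] = (z[i] - U[i, i + 1:] @ x[i + 1:]) / U[i, i]
    return x

def solve_cholesky(A, b):
    """Least squares via Cholesky on the normal equations (full column rank assumed)."""
    L = np.linalg.cholesky(A.T @ A)   # A^T A = L L^T, L lower triangular
    R_bar = L.T                       # upper triangular factor used in the text
    z = forward_substitution(R_bar.T, A.T @ b)   # R_bar^T z = A^T b
    return back_substitution(R_bar, z)           # R_bar x = z

# Hypothetical line-fitting data
t = np.array([0.0, 1.0, 2.0, 3.0])
b = np.array([1.0, 2.9, 5.1, 7.0])
A = np.column_stack([np.ones_like(t), t])

x = solve_cholesky(A, b)
print(x)   # approximately [0.97 2.02]
```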

2.3. QR decomposition

The following figures are taken from [4].

QR decomposition factors a matrix into the product of an orthogonal square matrix and an upper triangular matrix:

$$A = QR, \qquad Q^TQ = I$$

where, for $A \in \mathbb{R}^{m\times n}$, Q is m×m and R is m×n upper triangular.

There are several ways to compute a QR decomposition, such as Gram-Schmidt orthogonalization, Givens rotations and Householder transformations. The following uses Householder transformations. First, a brief sketch of Householder-based QR decomposition. Suppose A is a 5×4 matrix. In the diagrams, X marks an element left unchanged by the current transformation and + marks an element that it changes:

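Continuing in this way, four Householder transformations reduce A to an upper triangular matrix. If the columns of A are linearly independent, the upper 4×4 block $R_1$ of R is nonsingular, and:

$$H_4H_3H_2H_1A = R = \begin{bmatrix}R_1\\ 0\end{bmatrix}, \qquad A = QR, \quad Q = H_1H_2H_3H_4$$

Here each $H_k$ is symmetric and orthogonal, so $H_k^{-1} = H_k$.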
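The Householder procedure just described can be sketched in NumPy as follows. This is an illustrative implementation (the name `householder_qr` is hypothetical), not code from [4]. It uses the common sign choice $v = a + \operatorname{sign}(a_1)\|a\| e_1$ to avoid cancellation, so diagonal entries of R may come out negative.

```python
import numpy as np

def householder_qr(A):
    """Householder QR of an m x n matrix A with m >= n.

    Returns Q (m x m, orthogonal) and R (m x n, upper triangular) with A = Q R.
    """
    A = np.array(A, dtype=float)
    m, n = A.shape
    Q = np.eye(m)
    R = A.copy()
    for k in range(n):
        a = R[k:, k]
        norm_a = np.linalg.norm(a)
        if norm_a == 0.0:
            continue  # nothing to eliminate in this column
        # Reflection vector v = a + sign(a_0) * ||a|| * e_1, normalized.
        sign = 1.0 if a[0] >= 0 else -1.0
        v = a.copy()
        v[0] += sign * norm_a
        v /= np.linalg.norm(v)
        # Apply H_k = I - 2 v v^T to rows k.. of R (zeros column k below the diagonal).
        R[k:, :] -= 2.0 * np.outer(v, v @ R[k:, :])
        # Accumulate Q = H_1 H_2 ... H_n by updating columns k.. of Q.
        Q[:, k:] -= 2.0 * np.outer(Q[:, k:] @ v, v)
    # Clear round-off below the diagonal.
    return Q, np.triu(R)

# A 5 x 4 example, matching the size used in the text (random, hypothetical values).
rng = np.random.default_rng(0)
A = rng.standard_normal((5, 4))
Q, R = householder_qr(A)
print(np.allclose(Q @ R, A))   # True
```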

Next, a worked example illustrates Householder QR decomposition. The example is taken from [2]. Suppose we have the following five data points:

(-1, 1), (-0.5, 0.5), (0, 0), (0.5, 0.5), (1, 2)

These data lead to the following overdetermined linear system Ax = b:

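Here √5 is the Euclidean norm of the first column of A. The first Householder transformation maps that column to a vector whose only nonzero entry is ±√5, in the first position.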

The second and third columns are then processed in the same way:

This completes the QR decomposition of matrix A.
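The linear system of this example was shown in a figure. The following sketch therefore rests on two assumptions: the five points are fitted with a quadratic $y = c_0 + c_1 t + c_2 t^2$ (three columns, the first being all ones with norm √5), and the last point is read as (1, 2). Under those assumptions, NumPy's `np.linalg.qr` reproduces the decomposition. According to its documentation, it interfaces to LAPACK's Householder-based `geqrf` routine.

```python
import numpy as np

# Assumptions: quadratic model y = c0 + c1*t + c2*t^2, last point read as (1, 2).
t = np.array([-1.0, -0.5, 0.0, 0.5, 1.0])
y = np.array([1.0, 0.5, 0.0, 0.5, 2.0])
A = np.column_stack([np.ones_like(t), t, t**2])   # 5 x 3 design matrix

# mode="complete" returns a square 5 x 5 Q and a 5 x 3 upper triangular R.
Q, R = np.linalg.qr(A, mode="complete")
print(np.round(R, 4))
print(abs(R[0, 0]), np.sqrt(5))   # |R[0, 0]| equals the norm of the first column
```

The signs of the rows of R (and of the columns of Q) may differ from a hand calculation, because either sign choice gives a valid Householder reflection.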

With Q and R in hand, how do we use them to solve the least squares problem?

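For the least squares problem we want to minimize:

$$\|Ax - b\|_2^2$$

Substituting the QR decomposition A = QR gives:

$$\|Ax - b\|_2^2 = \|QRx - b\|_2^2$$

Since Q is orthogonal, multiplying by $Q^T$ does not change the 2-norm. Write $R = \begin{bmatrix}R_1\\ 0\end{bmatrix}$ with $R_1$ an n×n upper triangular matrix, and $Q^Tb = \begin{bmatrix}c\\ d\end{bmatrix}$ with $c \in \mathbb{R}^n$. Then:

$$\|QRx - b\|_2^2 = \|Rx - Q^Tb\|_2^2 = \|R_1x - c\|_2^2 + \|d\|_2^2$$

The term $\|d\|_2^2$ does not depend on x, so the minimum is reached when $R_1x = c$:

$$x = R_1^{-1}c$$

This is the QR-based solution of the linear least squares problem. In practice, x is computed from $R_1x = c$ by back substitution, and $\|d\|_2^2$ is the minimal squared residual.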
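The derivation above translates into the following NumPy sketch. The function name `solve_qr` and the data are hypothetical. The code splits $Q^Tb$ into c and d, back-substitutes $R_1x = c$, and returns $\|d\|_2^2$ as the minimal squared residual.

```python
import numpy as np

def solve_qr(A, b):
    """Least squares via QR: solve R1 x = c, where [c; d] = Q^T b.

    Assumes A (m x n, m > n) has full column rank, so R1 is nonsingular.
    """
    m, n = A.shape
    Q, R = np.linalg.qr(A, mode="complete")   # Q: m x m, R: m x n
    qtb = Q.T @ b
    c, d = qtb[:n], qtb[n:]
    R1 = R[:n, :]
    # Back substitution on the upper triangular R1.
    x = np.zeros(n)
    for i in range(n - 1, -1, -1):
        x[i] = (c[i] - R1[i, i + 1:] @ x[i + 1:]) / R1[i, i]
    return x, float(d @ d)   # d @ d is the minimal ||Ax - b||^2

# Hypothetical line-fitting data
t = np.array([0.0, 1.0, 2.0, 3.0])
b = np.array([1.0, 2.9, 5.1, 7.0])
A = np.column_stack([np.ones_like(t), t])

x, res = solve_qr(A, b)
print(x, res)   # approximately [0.97 2.02] 0.018
```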

2.4. Singular Value Decomposition (SVD)

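The least squares problem above can also be solved with the singular value decomposition. For $A \in \mathbb{R}^{m\times n}$, the SVD is

$$A = UDV^T$$

where U (m×m) and V (n×n) are orthogonal and D (m×n) is diagonal, with the singular values $d_1 \ge d_2 \ge \dots \ge d_n \ge 0$ on its diagonal.

Since U is orthogonal, $\|Ax - b\| = \|UDV^Tx - b\| = \|DV^Tx - U^Tb\|$. Because we have assumed that the problem is overdetermined (m > n), only the first n rows of D can be nonzero. Writing $y = V^Tx$ and $b' = U^Tb$, the problem becomes minimizing $\|Dy - b'\|$, which decouples into one equation $d_iy_i = b'_i$ per row.

Following [3], the SVD solution method can be summarized as follows (assuming A has full column rank, so every $d_i > 0$):

- Compute the SVD $A = UDV^T$.

- Set $b' = U^Tb$.

- Compute the vector y with $y_i = b'_i / d_i$ for i = 1, ..., n.

- The solution is $x = Vy$.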
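The four steps map directly onto `np.linalg.svd`, which returns U, the singular values as a 1-D array, and $V^T$ (not V). The sketch below is a minimal version under the full-column-rank assumption. The function name and data are hypothetical.

```python
import numpy as np

def solve_svd(A, b):
    """Least squares via SVD, following the four steps above.

    Assumes A (m x n, m > n) has full column rank, so all singular values are > 0.
    """
    m, n = A.shape
    U, s, Vt = np.linalg.svd(A)   # U: m x m, s: n singular values (descending), Vt: n x n
    b_prime = U.T @ b             # step 2: b' = U^T b
    y = b_prime[:n] / s           # step 3: y_i = b'_i / d_i
    return Vt.T @ y               # step 4: x = V y

# Hypothetical line-fitting data
t = np.array([0.0, 1.0, 2.0, 3.0])
b = np.array([1.0, 2.9, 5.1, 7.0])
A = np.column_stack([np.ones_like(t), t])

x = solve_svd(A, b)
print(x)   # approximately [0.97 2.02]
```

For a rank-deficient A, a common variant sets $y_i = 0$ whenever $d_i$ falls below a tolerance instead of dividing. That variant is not covered by the steps above.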

3. Least squares for homogeneous equations

The least squares problems discussed so far have the form Ax − b = 0. If the problem instead has the form Ax = 0, how should it be solved?

Problems of the form Ax = 0 often appear in reconstruction problems. We look for a solution of Ax = 0 with x ≠ 0, since x = 0 always satisfies the equation trivially. Because the equation is homogeneous, if x is a nonzero solution then kx is also a solution for any scale factor k ≠ 0, so the scale of x is arbitrary. We can therefore fix the scale and convert the problem into finding the x that minimizes ||Ax|| subject to ||x|| = 1.

Let the SVD of A be:

$$A = UDV^T$$

Because U and V are orthogonal, $\|UDV^Tx\| = \|DV^Tx\|$ and $\|x\| = \|V^Tx\|$. Writing $y = V^Tx$, the problem becomes: minimize $\|Dy\|$ subject to $\|y\| = 1$.

D is a diagonal matrix whose diagonal entries are arranged in descending order. $\|Dy\|$ is therefore minimized by putting all of the weight of y on the last coordinate:

$$y = (0, 0, \dots, 0, 1)^T$$

This solution has a single nonzero entry, equal to 1, in the last position. Since $x = Vy$, x is the last column of matrix V, that is, the right singular vector belonging to the smallest singular value.
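In NumPy this amounts to taking the last row of the $V^T$ returned by `np.linalg.svd`. The sketch below uses a hypothetical example: fitting a line $ax + by + c = 0$ to points, where the unknown $(a, b, c)$ is only defined up to scale, so each point contributes one row $(x_i, y_i, 1)$ of the homogeneous system.

```python
import numpy as np

def solve_homogeneous(A):
    """Minimize ||A x|| subject to ||x|| = 1 (last column of V in A = U D V^T)."""
    _, _, Vt = np.linalg.svd(A)
    return Vt[-1]   # last row of V^T is the last column of V

# Hypothetical example: four points on the line y = x + 1, i.e. x - y + 1 = 0.
pts = np.array([[0.0, 1.0], [1.0, 2.0], [2.0, 3.0], [3.0, 4.0]])
A = np.column_stack([pts, np.ones(len(pts))])   # rows (x_i, y_i, 1)

line = solve_homogeneous(A)
print(line)   # proportional to (1, -1, 1) with unit norm; the overall sign is arbitrary
```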

-------------------------------------------------------------------------------------------------------------------------

Appendix:

For Ax = b with A an m×n matrix, there are three cases:

- m < n: there are more unknowns than equations, so there is no unique solution. If the system is consistent, it has an infinite set of solutions (a particular solution plus any vector in the null space of A);

- m = n: if the matrix A is invertible, there is a unique solution;

- m > n: there are more equations than unknowns. In general the system has no exact solution, unless b lies in the column space of A;
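The three cases can be checked numerically by comparing ranks: the system is consistent exactly when rank(A) = rank([A | b]), and then the solution is unique exactly when that rank equals the number of unknowns n. This criterion (the Rouché-Capelli theorem) is a standard complement rather than something stated in the post. The function name and the returned labels in this minimal NumPy sketch are hypothetical.

```python
import numpy as np

def classify_system(A, b):
    """Classify Ax = b as "none", "unique" or "infinite" using matrix ranks."""
    n = A.shape[1]
    rank_A = np.linalg.matrix_rank(A)
    rank_Ab = np.linalg.matrix_rank(np.column_stack([A, b]))
    if rank_Ab > rank_A:
        return "none"       # b is not in the column space of A
    if rank_A == n:
        return "unique"     # consistent and full column rank
    return "infinite"       # consistent, with a nontrivial null space

A_over = np.array([[1.0, 0.0], [1.0, 1.0], [1.0, 2.0]])       # m > n
print(classify_system(A_over, np.array([1.0, 3.0, 4.0])))     # none
print(classify_system(A_over, np.array([1.0, 3.0, 5.0])))     # unique
print(classify_system(np.array([[1.0, 1.0, 1.0]]), np.array([2.0])))   # infinite (m < n)
```

In the "none" case for m > n, least squares gives the x that comes closest to satisfying the equations.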

Please let me know if you find any errors in the above content.

References:

[1]. Solving Linear and Nonlinear Least Squares Problems with Math.NET

[2]. Least Squares Approximation/Optimization

[3]. Multiple View Geometry in Computer Vision, Appendix 5, Least-squares Minimization

[4]. Matrix Method in Machine Learning 03: QR Decomposition

Intelligent Recommendation

And least-squares linear regression

And least-squares linear regression Brief introduction Linear Regression Least Squares Programming code 1 code 2 Brief introduction Linear regression is a simple to use and very powerful tool. Linear ...

Least squares linear regression

Today's first task is to fit three points. Three points. . I reviewed the least squares method (linear fitting) -Loss function: sum of squared residuals -Evaluation indicators: MSE (mean square error)...

Linear regression: least squares

Least squares The problem solved by the least square method is the model fitting problem. Given a set of samples and a hypothetical model, the parameters of the model are estimated through the samples...

Linear regression least squares

In reality, we have to study a problem, such as bank loans and personal income The above question is the simplest unary linear model, first look at a few definitions Classification problemIn supervise...

More Recommendation

Linear regression, least squares

Return definition: For a point set, use a function to fit the point set, minimize the error between the point set and the fitting function, if this function curve is a straight line, it is linear retu...

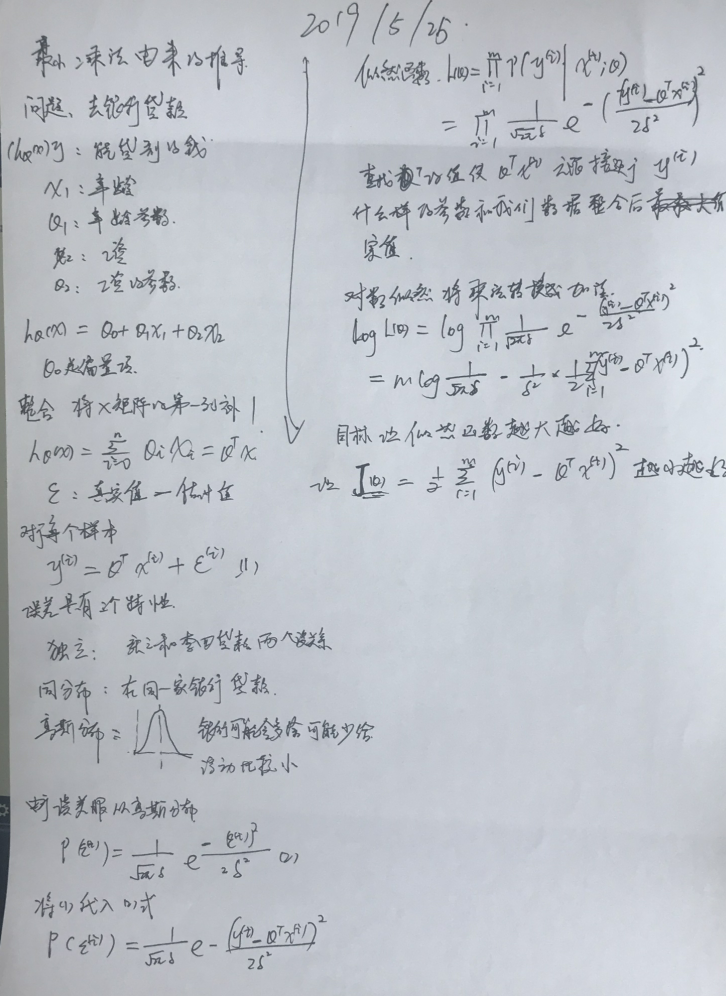

Linear Regression: Least-Squares

What is a linear model Premise assumption: the input variable has a linear relationship with the output variable What is Linear Regression Linear regression, arguably an instance of a linear model A l...

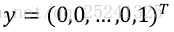

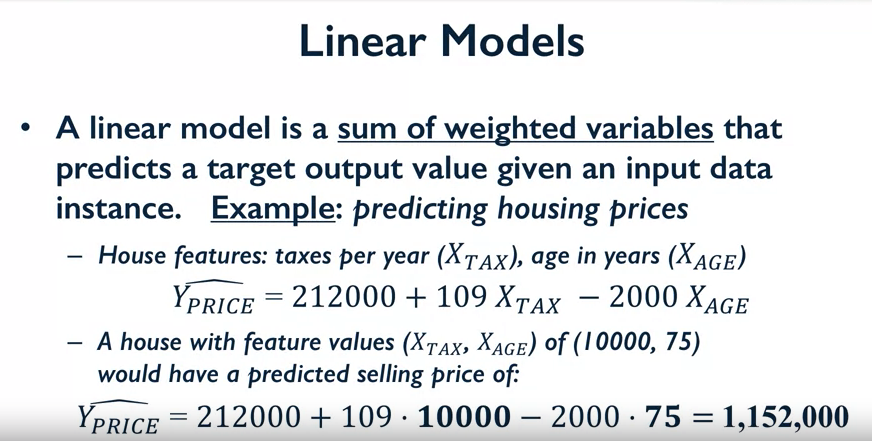

Gaussian Newton algorithm for nonlinear least squares problem

@Gaussian Newton algorithm for nonlinear least squares problem Nonlinear least squares and Gaussian - Newton algorithm I started to do this or because of a course design task in the school, I didn't h...

Linear least squares method and nonlinear least squares

Article catalog 1 least squares problem 2 linear least squares 3 nonlinear least squares 3.1 Gaussian - Newton 3.2 Levenberg-Marquardt method 4 reference books 1 least squares problem Optimization iss...